Strategies For Maximizing Network Performance

Motadata Team

What Is Network Performance Optimization?

Network performance optimization is the process of improving a network's speed, reliability, and efficiency through monitoring, configuration management, and targeted improvements. The goal is the best possible user experience and business outcomes: identifying and resolving bottlenecks before they affect end users, allocating bandwidth effectively, and maintaining consistent service quality across all connected systems and applications.

Your Network Is Only as Strong as Your Ability to Monitor It

In a connected business environment, network performance isn't a background concern — it's the foundation everything runs on. Communication, data transfer, application delivery, cloud services, security operations — all of it depends on a network that performs reliably under load.

Yet many organizations treat network performance as something to react to rather than manage proactively. They wait for users to complain about slow applications, discover outages after they've already impacted operations, and troubleshoot with incomplete visibility into what's actually happening across their infrastructure.

The cost of this reactive approach is significant. Commonly cited industry estimates put the average cost of network downtime at $5,600 to $9,000 per minute for enterprise organizations. Even brief performance degradations (increased latency, packet loss, jitter) compound into lost productivity, frustrated users, and degraded customer experiences.

The organizations that maintain high-performing networks aren't the ones with the most expensive hardware. They're the ones with the best visibility, the fastest detection, and the most disciplined optimization practices.

Why Network Monitoring Is Non-Negotiable for Performance

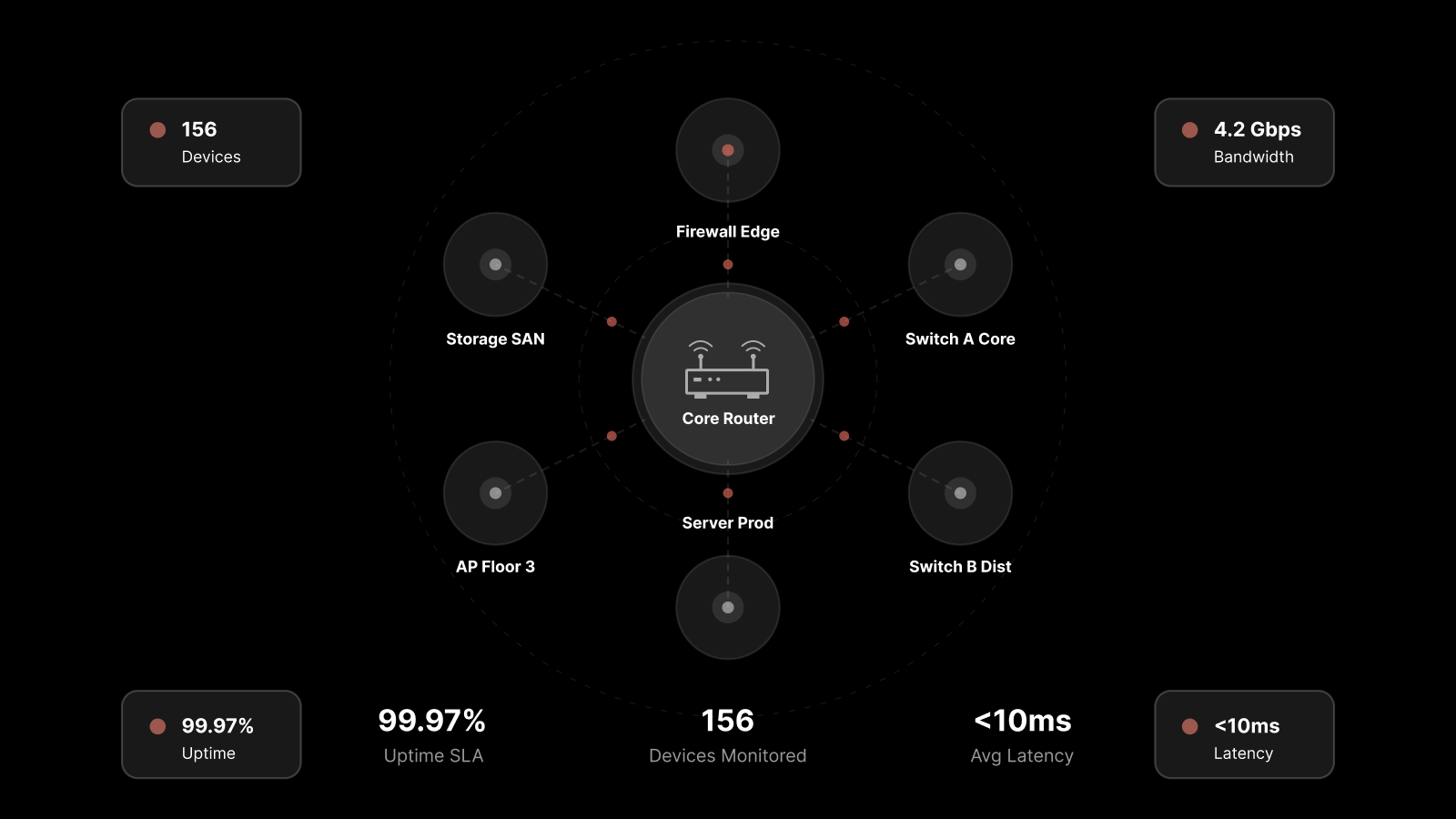

Network monitoring is the continuous process of observing network components — routers, switches, servers, firewalls, access points, and applications — to ensure they're operating correctly and performing within acceptable parameters. It's not just about watching dashboards. It's about building the visibility infrastructure that enables proactive management.

Here's what effective network monitoring delivers:

Proactive Issue Detection

Continuous monitoring catches problems early — before they escalate into service disruptions. By tracking performance metrics in real time across all network components, IT teams can detect congestion, hardware failures, configuration errors, and security anomalies while they're still small and containable.

Without proactive monitoring, issues are typically discovered when users report problems — by which point the impact has already spread.

Intelligent Resource Allocation

Network monitoring provides visibility into traffic patterns and resource usage across the infrastructure. This data drives informed decisions about capacity planning, bandwidth allocation, and infrastructure investment — ensuring resources go where they're needed most rather than being distributed based on assumptions.

Improved User Experience

By tracking performance indicators like latency, packet loss, and bandwidth utilization in real time, monitoring teams can identify and resolve degradations before users notice them. This directly translates to higher satisfaction scores, fewer support tickets, and more reliable service delivery.

Security Threat Identification

Network monitoring plays a direct role in security. Monitoring traffic patterns and behavioral anomalies helps detect unauthorized access attempts, malware activity, DDoS attacks, and data exfiltration before they cause significant damage.

Core Metrics for Network Performance

Not all metrics are equally useful. Focus your monitoring on the indicators that directly reflect network health and user experience:

| Metric | What It Measures | Why It Matters |
|---|---|---|
| Latency | Time for data to travel between two points | Directly affects application responsiveness and user experience |
| Packet Loss | Percentage of data packets that don't reach their destination | Causes retransmissions, slows throughput, degrades voice/video quality |
| Jitter | Variation in packet arrival times | Impacts real-time applications (VoIP, video conferencing) |
| Throughput | Actual data transfer rate | Indicates whether bandwidth is being used effectively |
| Bandwidth Utilization | Percentage of available bandwidth in use | Reveals congestion points and capacity constraints |
| Error Rate | Frequency of transmission errors | Signals hardware issues, cable problems, or configuration errors |
| Availability/Uptime | Percentage of time the network is operational | The most fundamental reliability indicator |
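To make the table concrete, here is a minimal sketch of how several of these metrics could be derived from raw measurements. The function names, the probe format (a list of round-trip times with `None` for a lost probe), and the counter-based utilization formula are illustrative assumptions, not part of any particular monitoring product.

```python
from statistics import mean


def summarize_probes(rtts_ms):
    """Summarize one probe run.

    rtts_ms holds one entry per probe sent: the round-trip time in
    milliseconds, or None when no reply arrived (counted as lost).
    """
    received = [r for r in rtts_ms if r is not None]
    sent = len(rtts_ms)
    loss_pct = 100.0 * (sent - len(received)) / sent if sent else 0.0
    latency_ms = mean(received) if received else None
    # Jitter here is the mean absolute difference between consecutive
    # received RTTs. This is a simple approximation chosen for the
    # example, not the RFC 3550 interarrival jitter estimator.
    diffs = [abs(b - a) for a, b in zip(received, received[1:])]
    jitter_ms = mean(diffs) if diffs else 0.0
    return {"latency_ms": latency_ms, "loss_pct": loss_pct, "jitter_ms": jitter_ms}


def utilization_pct(octets_start, octets_end, interval_s, link_bps):
    """Link utilization from two byte-counter readings taken interval_s apart.

    Counter wrap-around is ignored here; a real poller has to handle it.
    """
    bits = (octets_end - octets_start) * 8
    return 100.0 * bits / (interval_s * link_bps)


def availability_pct(uptime_s, total_s):
    """Share of the measurement period during which the network was up."""
    return 100.0 * uptime_s / total_s


# Five probes, one of them lost.
summary = summarize_probes([20.0, 22.0, None, 21.0, 25.0])

# 75 MB transferred in 60 seconds on a 100 Mbps link.
util = utilization_pct(0, 75_000_000, interval_s=60, link_bps=100_000_000)
```

In this example one of five probes is lost, so `loss_pct` is 20.0, and the link carries 600 megabits in 60 seconds against a capacity of 6,000 megabits, so `util` is 10.0.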

Establish baselines for each metric during normal operation. Deviations from baseline are your early warning system.
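One simple way to turn a baseline into an early warning is to compare each new reading with the mean and standard deviation of readings taken during normal operation. The sketch below assumes a three-sigma rule and hypothetical function names; the right cutoff depends on how noisy the metric is.

```python
from statistics import mean, stdev


def build_baseline(samples):
    """Summarize readings collected during normal operation (needs 2+ samples)."""
    return {"mean": mean(samples), "stdev": stdev(samples)}


def deviates(value, baseline, max_sigma=3.0):
    """True when value sits more than max_sigma standard deviations from the mean."""
    if baseline["stdev"] == 0:
        # A perfectly flat baseline: any different value counts as a deviation.
        return value != baseline["mean"]
    z = abs(value - baseline["mean"]) / baseline["stdev"]
    return z > max_sigma


# Latency readings (ms) from a normal week, then two new readings.
baseline = build_baseline([20, 21, 19, 22, 20, 21, 19, 22])
normal_reading = deviates(21.5, baseline)   # within the usual range
spike_reading = deviates(35, baseline)      # far outside it
```

Here `normal_reading` is False and `spike_reading` is True, because 35 ms is more than twelve standard deviations above a baseline centered on 20.5 ms.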

Strategies That Actually Improve Network Performance

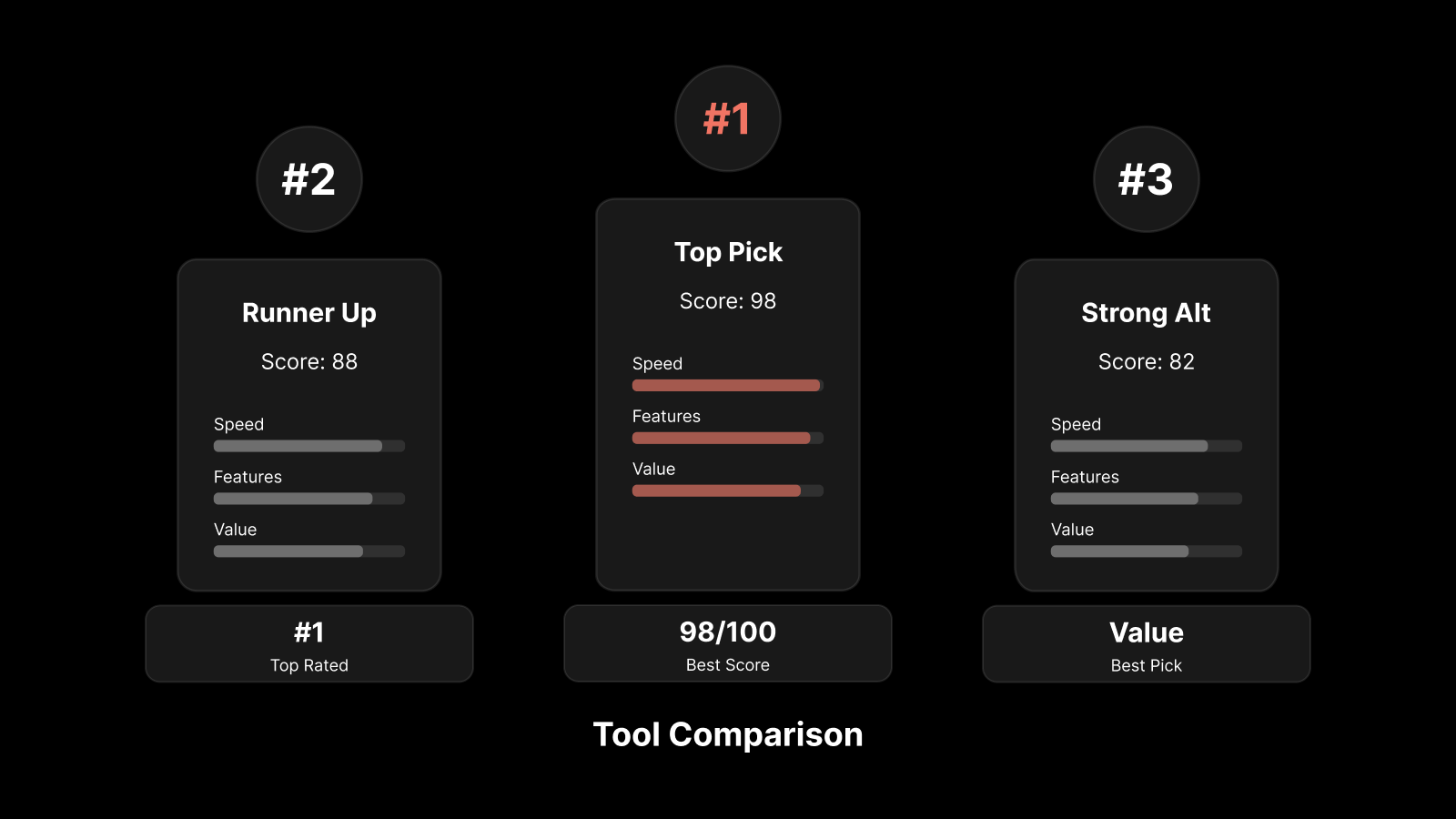

Implement a Network Performance Monitoring (NPM) Tool

A dedicated NPM tool is the foundation of any optimization strategy. It provides centralized visibility across your entire network infrastructure — physical devices, virtual machines, cloud resources, and applications — in a single console.

Look for NPM tools that offer:

- Real-time performance dashboards with customizable views
- Automated discovery of network devices and topology mapping
- Threshold-based and anomaly-based alerting
- Historical trend analysis for capacity planning
- Multi-vendor support across your entire infrastructure stack

Without an NPM tool, you're managing your network blind. With one, you have the data needed to make every other optimization strategy effective.

Prioritize Bandwidth Management and Traffic Shaping

Not all network traffic is equal. Business-critical applications (ERP, CRM, VoIP, video conferencing) should receive priority over non-essential traffic (software updates, file backups, social media).

Effective bandwidth management involves:

- Quality of Service (QoS) policies that prioritize traffic by application type, user group, or business criticality
- Traffic shaping that controls data flow rates to prevent congestion during peak periods
- Bandwidth allocation rules that reserve capacity for critical applications even during high-utilization periods

Without traffic prioritization, a large file transfer can degrade VoIP quality for the entire office. With it, critical applications maintain performance even under heavy load.
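Traffic shaping is commonly built on a token bucket: tokens accumulate at the permitted rate, and a packet may pass only if enough tokens are available. The sketch below is a simplified illustration with a hypothetical `TokenBucket` class and explicit timestamps; real shaping happens in network devices or the operating system, not in application code like this.

```python
class TokenBucket:
    """Token-bucket rate limiter for one traffic class.

    rate_bps is the sustained rate in bits per second and burst_bits is
    the largest burst the class may send at once.
    """

    def __init__(self, rate_bps, burst_bits, start=0.0):
        self.rate_bps = rate_bps
        self.burst_bits = burst_bits
        self.tokens = burst_bits   # start with a full bucket
        self.last = start

    def allow(self, packet_bits, now):
        """Return True if the packet may be sent at time `now` (seconds)."""
        # Refill tokens for the elapsed time, capped at the burst size.
        elapsed = now - self.last
        self.tokens = min(self.burst_bits, self.tokens + elapsed * self.rate_bps)
        self.last = now
        if packet_bits <= self.tokens:
            self.tokens -= packet_bits
            return True
        # A shaper would queue the packet here; a policer would drop it.
        return False


# Limit a bulk-transfer class to 1,000 bits/s with a 1,500-bit burst.
bulk = TokenBucket(rate_bps=1000, burst_bits=1500)
first = bulk.allow(1000, now=0.0)    # fits in the initial burst
second = bulk.allow(1000, now=0.1)   # only 600 tokens available
third = bulk.allow(1000, now=1.0)    # bucket has refilled
```

The first and third packets pass and the second is held back, which is how a bulk transfer gets slowed while capacity stays free for higher-priority traffic.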

Build a Configuration Management Discipline

A surprising number of network performance issues trace back to configuration errors — a misapplied ACL, an incorrect VLAN assignment, or a routing table change that creates a suboptimal path.

Configuration management practices that prevent these problems:

- Maintain a centralized repository of device configurations
- Use change control processes for all configuration modifications
- Automate configuration backups so you can roll back quickly when changes cause problems
- Validate configurations against established standards before deployment

Configuration drift — gradual, undocumented changes that accumulate over time — is one of the most common causes of hard-to-diagnose performance degradation.
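Drift is easiest to catch by diffing the running configuration against the last approved copy. The following sketch uses Python's standard `difflib` module; the `config_drift` helper and the sample configuration lines are hypothetical and only illustrate the idea.

```python
import difflib


def config_drift(approved, running):
    """Return unified-diff lines between the approved and running configs.

    An empty list means the running config matches the approved one.
    """
    return list(
        difflib.unified_diff(
            approved.splitlines(),
            running.splitlines(),
            fromfile="approved",
            tofile="running",
            lineterm="",
        )
    )


# Hypothetical switch-port config: the VLAN was changed outside change control.
approved_cfg = "interface Gi0/1\n switchport access vlan 10\n"
running_cfg = "interface Gi0/1\n switchport access vlan 20\n"

drift = config_drift(approved_cfg, running_cfg)
for line in drift:
    print(line)
```

Running a check like this on a schedule, against configurations pulled from the central repository, turns undocumented changes into something that shows up in a report rather than in a troubleshooting session.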

Design an Effective Alert Escalation Framework

Alerting is only useful if it reaches the right people at the right time with the right context. An effective escalation framework prevents both missed alerts and alert fatigue.

Key elements:

- Tiered alerting: informational alerts for monitoring dashboards, warning alerts for on-call engineers, critical alerts for immediate response teams
- Contextual notifications: include the affected device, metric, deviation severity, and suggested actions in every alert
- Escalation timers: if a warning isn't acknowledged within a defined window, it escalates automatically
- Alert suppression: during planned maintenance windows, suppress non-critical alerts to prevent noise

Alert fatigue is real. When everything is critical, nothing is. Tune your thresholds carefully and review them regularly.
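The routing logic behind such a framework can be quite small. The sketch below is one possible shape, assuming three severity tiers, a 15-minute acknowledgement window, and hypothetical names (`Alert`, `route`, the destination labels); none of these come from a specific alerting tool.

```python
from dataclasses import dataclass
from typing import Optional

# Destination for each tier (labels are illustrative).
DESTINATIONS = {
    "info": "dashboard",
    "warning": "on-call engineer",
    "critical": "incident response team",
}


@dataclass
class Alert:
    device: str
    metric: str
    severity: str                     # "info", "warning", or "critical"
    raised_at: float                  # seconds since some reference time
    acked_at: Optional[float] = None  # None until someone acknowledges it


def route(alert, now, ack_window_s=900, in_maintenance=False):
    """Return where the alert should go, or None when it is suppressed."""
    # Suppression: non-critical alerts stay quiet during maintenance windows.
    if in_maintenance and alert.severity != "critical":
        return None
    severity = alert.severity
    # Escalation timer: an unacknowledged warning becomes critical.
    unacked = alert.acked_at is None
    if severity == "warning" and unacked and now - alert.raised_at > ack_window_s:
        severity = "critical"
    return DESTINATIONS[severity]


warning = Alert("core-sw-01", "latency_ms", "warning", raised_at=0.0)
fresh = route(warning, now=60)                         # still within the window
stale = route(warning, now=1000)                       # window expired, escalated
quiet = route(warning, now=60, in_maintenance=True)    # suppressed
```

Suppression is checked before escalation here, so a warning raised during planned maintenance never escalates. Whether that is the right order is a policy decision for your team.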

Automate Common Network Operations

Repetitive manual tasks are slow, error-prone, and consume engineering time that could go toward more complex work. Automation addresses this directly:

- Automated diagnostics: when specific conditions are detected, run predefined diagnostic scripts automatically
- Self-healing actions: restart services, clear caches, or reroute traffic automatically when predefined conditions are met
- Scheduled maintenance: automated configuration backups, firmware checks, and health reports
- Capacity scaling: automatically adjust resource allocation based on real-time demand (especially relevant in cloud and hybrid environments)

Automation doesn't replace engineers — it frees them from repetitive work so they can focus on architecture, planning, and complex troubleshooting.
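At its core, condition-triggered automation is a list of rules, each pairing a condition with an action. The sketch below shows that pattern with hypothetical rule names, metric keys, and placeholder actions that only record what they would do; in practice the actions would call your own scripts or orchestration tooling.

```python
def run_remediation(metrics, rules):
    """Run the action of every rule whose condition matches the metrics.

    rules is a list of (name, condition, action) tuples. Returns the
    names of the rules that fired, in order.
    """
    fired = []
    for name, condition, action in rules:
        if condition(metrics):
            action(metrics)
            fired.append(name)
    return fired


# Placeholder actions: they only record what a real script would do.
actions_taken = []

rules = [
    (
        "restart_dns_service",
        lambda m: m["dns_failures"] > 5,
        lambda m: actions_taken.append("restart dns service"),
    ),
    (
        "collect_interface_diagnostics",
        lambda m: m["error_rate_pct"] > 1.0,
        lambda m: actions_taken.append("collect interface diagnostics"),
    ),
]

fired = run_remediation({"dns_failures": 9, "error_rate_pct": 0.2}, rules)
```

With these sample metrics only the DNS rule fires. Keeping the rules as data makes them easy to review under the same change control as device configurations.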

The Future: AI-Driven Network Performance Optimization

Traditional rule-based monitoring has clear limitations. Static thresholds can't account for the dynamic, variable nature of modern network traffic. AI and machine learning are changing how organizations approach network performance:

- Anomaly detection: ML models learn normal behavior patterns and flag deviations that rule-based systems miss
- Predictive analytics: AI identifies trends that indicate future problems, enabling pre-emptive action before performance degrades
- Automated root cause analysis: AI correlates events across multiple systems to identify the actual source of a problem, not just its symptoms
- Dynamic threshold adjustment: instead of static thresholds, AI continuously adjusts what "normal" looks like based on time of day, day of week, and seasonal patterns

These capabilities reduce mean time to detect (MTTD) and mean time to resolve (MTTR) significantly — and they reduce the alert noise that overwhelms operations teams.
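The idea behind dynamic thresholds can be illustrated without any machine learning: keep a separate baseline for each hour of the day, so that traffic that is normal at 9 a.m. is still flagged at 3 a.m. The sketch below is a plain statistical stand-in for what an ML-based system would learn, using hypothetical function names and a three-sigma rule.

```python
from collections import defaultdict
from statistics import mean, stdev


def hourly_thresholds(history, sigmas=3.0):
    """Build an upper threshold per hour of day.

    history is an iterable of (hour_of_day, value) pairs. Hours with
    fewer than two samples get no threshold.
    """
    by_hour = defaultdict(list)
    for hour, value in history:
        by_hour[hour].append(value)
    return {
        hour: mean(values) + sigmas * stdev(values)
        for hour, values in by_hour.items()
        if len(values) >= 2
    }


def is_anomalous(hour, value, thresholds):
    """True when value exceeds the threshold learned for that hour."""
    limit = thresholds.get(hour)
    return limit is not None and value > limit


# Bandwidth utilization (%) observed at 09:00 and 03:00 on three days.
history = [(9, 60), (9, 64), (9, 62), (3, 10), (3, 12), (3, 11)]
thresholds = hourly_thresholds(history)

night_spike = is_anomalous(3, 40, thresholds)    # unusual at 3 a.m.
morning_load = is_anomalous(9, 40, thresholds)   # ordinary at 9 a.m.
```

The same 40% utilization is flagged at night and ignored in the morning, which a single static threshold cannot do. A production system would also account for day of week and seasonal patterns.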

Optimize Network Performance With Motadata

Motadata's AI-native network monitoring platform gives you complete visibility across physical, virtual, and cloud infrastructure from a single console. With machine learning-powered anomaly detection, automated root cause analysis, predictive analytics, and real-time performance dashboards, Motadata helps your team detect and resolve network issues before users notice them. The platform supports 10,000+ device types with auto-discovery and topology mapping, so you see your entire network — not just the parts you remembered to configure. Start your 30-day free trial or schedule a demo to see it in action.

Conclusion

Maximizing network performance requires more than good hardware and fast links. It requires continuous monitoring, disciplined configuration management, intelligent alerting, automated operations, and a clear focus on the metrics that actually reflect user experience.

The organizations that maintain the highest-performing networks treat optimization as an ongoing practice — not a project with a finish line. They invest in the right tools, measure what matters, automate the repetitive, and use AI to detect what humans can't see at scale.

Your network is the foundation of your digital operations. The better you monitor and optimize it, the more reliable everything built on top of it becomes.

FAQs

What's the most important network performance metric to monitor?

Latency is typically the most directly user-impacting metric, but no single metric tells the whole story. Monitor latency, packet loss, jitter, throughput, and bandwidth utilization together to get a complete picture of network health.

How often should network performance baselines be updated?

Review and update baselines quarterly, or whenever significant infrastructure changes occur (new applications, office expansions, cloud migrations). Baselines that don't reflect current reality produce false positives and missed issues.

What's the difference between network monitoring and network performance monitoring?

Basic network monitoring focuses on availability: is the device up or down? Network performance monitoring goes deeper, tracking how well the network is operating: speed, reliability, error rates, and user experience quality.

How does AI improve network performance monitoring?

AI detects anomalies that rule-based systems miss, predicts problems before they impact users, correlates events across systems for faster root cause analysis, and dynamically adjusts thresholds to reduce false alerts.


Articles produced collaboratively by our engineering and editorial teams bear the collective authorship of Motadata Team.