Database Monitoring: What It Is, Key Metrics & Best Practices

Arpit Sharma

Database monitoring is the practice of tracking, measuring, and analyzing database performance, availability, security, and resource usage in real time to prevent downtime, optimize queries, and protect data integrity.

Key Takeaways

Database monitoring gives IT teams real-time visibility into performance, availability, and security across all database systems.

Tracking the right metrics -- response time, CPU usage, query performance, and availability -- helps you catch issues before users feel the impact.

Proactive monitoring with AI-driven alerts reduces mean time to detection and prevents unplanned outages.

Best practices include monitoring slow queries, planning capacity, automating backups, and documenting review processes.

A unified monitoring platform like Motadata consolidates database health data into a single view, eliminating tool sprawl.

Every minute of database downtime costs money. A widely cited Gartner estimate from 2014 puts the average cost of IT downtime at approximately $5,600 per minute -- and databases sit at the center of nearly every business-critical application. When your database slows down or goes offline, everything downstream breaks: transactions fail, dashboards go dark, and customers leave.

That's why database monitoring isn't optional. It's the foundation of operational reliability.

This guide covers what database monitoring is, which metrics matter most, common problems it solves, and the best practices your team should follow to keep databases running at peak performance.

What Is Database Monitoring and Why Does It Matter?

Database monitoring is the continuous process of observing and measuring how your databases perform, how resources are consumed, whether security standards are met, and whether compliance requirements are satisfied.

It matters for three reasons:

Early issue detection. Monitoring catches performance degradation, resource exhaustion, and security anomalies before they escalate into outages.

Resource optimization. By tracking CPU, memory, disk I/O, and network usage, teams can right-size infrastructure and avoid overprovisioning.

Data protection. Continuous security monitoring identifies unauthorized access attempts, configuration drift, and compliance violations in real time.

Without monitoring, database administrators (DBAs) operate blind. They react to incidents after users report them instead of preventing issues proactively.

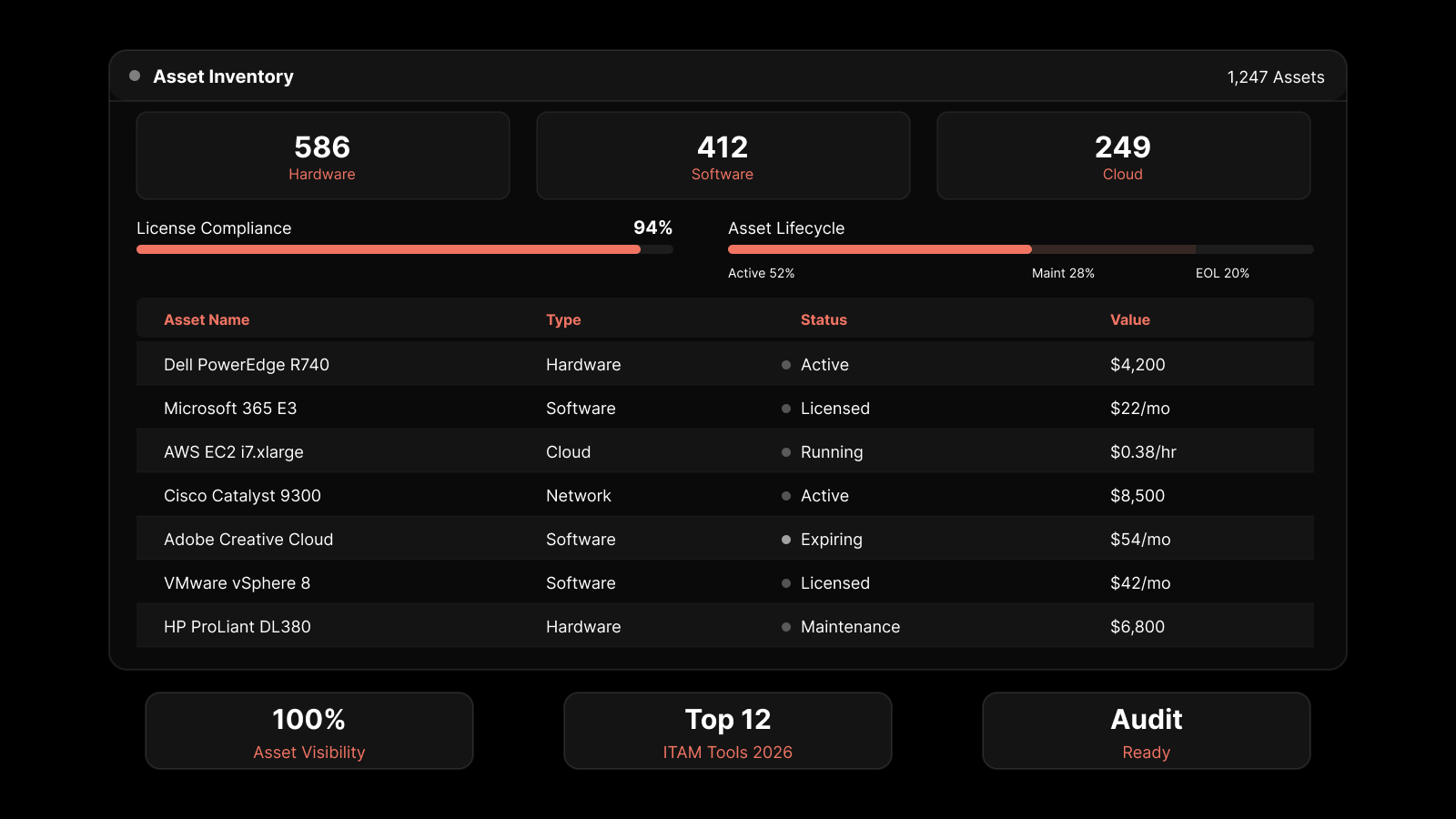

The Three Pillars of Database Monitoring

Database monitoring covers three core areas:

Performance monitoring tracks query execution times, throughput, connection counts, and resource utilization. It tells you whether your database responds fast enough and handles the workload efficiently.

Security monitoring watches for unauthorized access, failed login attempts, privilege escalations, and suspicious data transfers. It's your first line of defense against breaches and insider threats.

Compliance monitoring ensures your database operations meet regulatory requirements like GDPR, HIPAA, and SOC 2. It covers audit trails, encryption verification, and access controls.

Essential Database Metrics You Should Track

Not all metrics carry the same weight. Here are the ones that matter most for maintaining healthy databases:

1. Availability

Availability measures the percentage of time your database is operational and accessible. It's the most fundamental metric because everything else depends on it.

Track uptime/downtime ratios, failover events, and connection success rates. Set alerts for any availability drop below your SLA threshold -- even a brief outage during peak hours can have outsized business impact.
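As a rough illustration of the SLA check described above, the sketch below turns downtime into an availability percentage and compares it with a target. The function names, the 30-day window, and the 99.9% target are assumptions chosen for the example, not values from any particular tool.

```python
def availability_percent(total_seconds: float, downtime_seconds: float) -> float:
    """Share of the period (in percent) during which the database was reachable."""
    if total_seconds <= 0:
        raise ValueError("total_seconds must be positive")
    return 100.0 * (total_seconds - downtime_seconds) / total_seconds


def breaches_sla(total_seconds: float, downtime_seconds: float, sla_percent: float) -> bool:
    """True when measured availability falls below the SLA target."""
    return availability_percent(total_seconds, downtime_seconds) < sla_percent


# Hypothetical month: 30 days, 50 minutes of downtime, 99.9% SLA target.
MONTH_SECONDS = 30 * 24 * 3600
DOWNTIME_SECONDS = 50 * 60

print(round(availability_percent(MONTH_SECONDS, DOWNTIME_SECONDS), 3))  # 99.884
print(breaches_sla(MONTH_SECONDS, DOWNTIME_SECONDS, 99.9))              # True
```

A 99.9% target over 30 days leaves a budget of about 43 minutes of downtime, so 50 minutes is enough to breach it.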

2. Response Time

Response time measures how long it takes for the database to process a query and return results. Slow response times directly affect application performance and user experience.

Monitor both average and percentile-based response times (P95, P99). Averages can hide outliers -- a P99 of 5 seconds means 1% of queries take 5 seconds or longer, even when the average looks healthy.
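To see how an average can hide outliers, here is a minimal sketch that compares the mean with nearest-rank percentiles. The latency values are made up for illustration, and nearest-rank is only one of several percentile definitions; monitoring tools may interpolate differently.

```python
import math
from statistics import mean


def percentile(samples, pct):
    """Nearest-rank percentile: the smallest sample with at least pct% of
    all samples at or below it. pct is an integer from 1 to 100."""
    if not samples:
        raise ValueError("samples must not be empty")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[max(rank, 1) - 1]


# Hypothetical latencies in milliseconds: 98 fast queries and 2 very slow ones.
latencies_ms = [20] * 98 + [5000] * 2

print(mean(latencies_ms))            # 119.6
print(percentile(latencies_ms, 95))  # 20
print(percentile(latencies_ms, 99))  # 5000
```

The mean of about 120 ms and the P95 of 20 ms both look acceptable, while the P99 exposes the 5-second tail.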

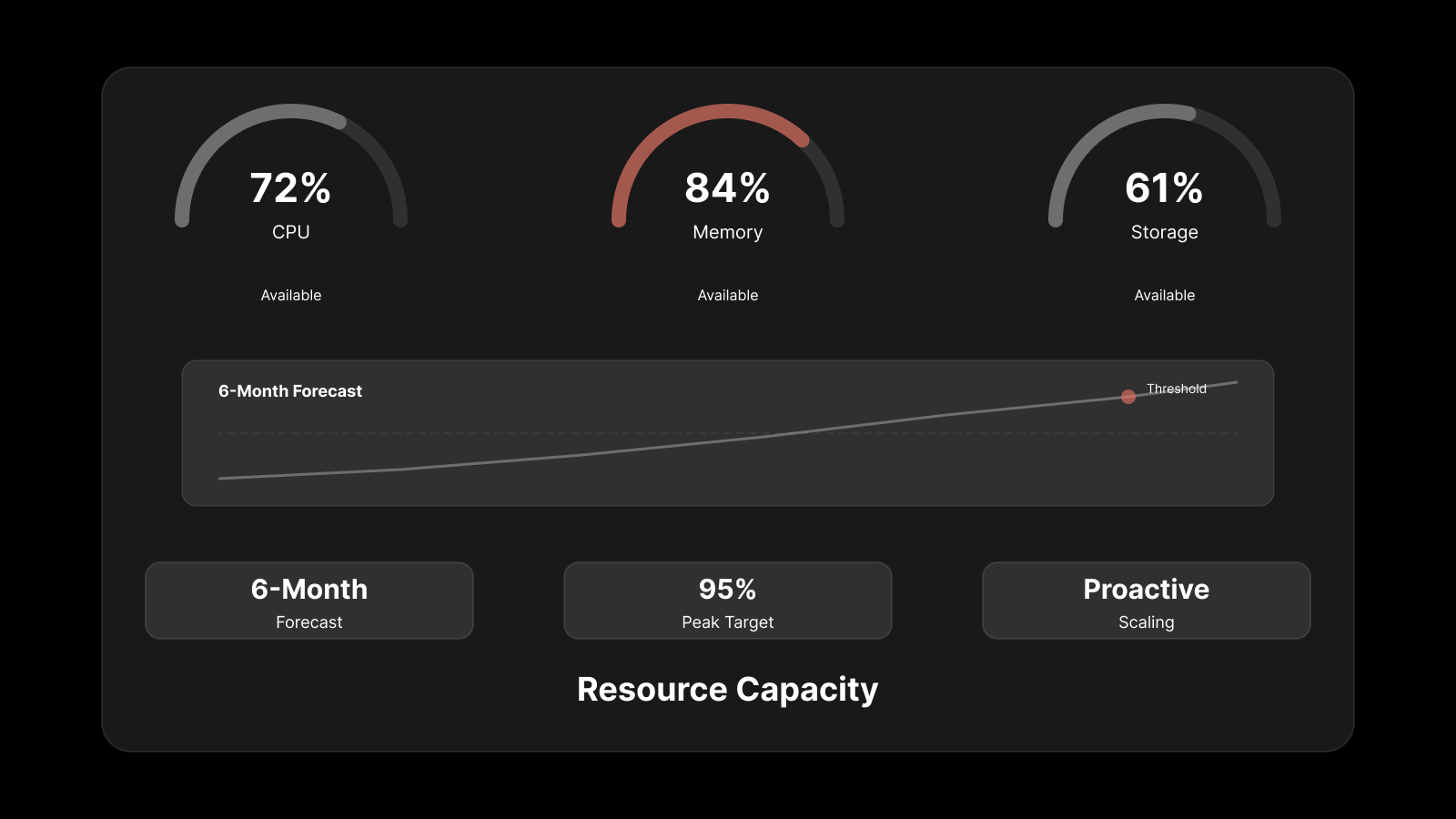

3. Resource Utilization

Keep a close eye on four resource dimensions:

CPU usage -- sustained high CPU often points to inefficient queries or inadequate compute capacity.

Memory usage -- insufficient memory forces the database to read from disk, which is orders of magnitude slower.

Disk I/O -- high disk queue lengths indicate storage bottlenecks.

Network bandwidth -- congestion affects replication, backup operations, and client connections.

4. Query Performance

Slow queries are among the most common causes of database performance problems. Monitor:

Query execution time and frequency

Full table scans vs. index-based lookups

Lock wait times and deadlocks

Query plan changes
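The difference between a full table scan and an index-based lookup can be observed directly in a query plan. The sketch below uses Python's built-in `sqlite3` module and SQLite's `EXPLAIN QUERY PLAN` purely as a convenient stand-in; the `orders` table and index name are hypothetical, and other engines expose plans through their own commands and formats.

```python
import sqlite3


def query_plan(conn, sql, params=()):
    """Return the human-readable detail column of EXPLAIN QUERY PLAN."""
    rows = conn.execute("EXPLAIN QUERY PLAN " + sql, params).fetchall()
    return [row[3] for row in rows]


conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER, total REAL)"
)
conn.executemany(
    "INSERT INTO orders (customer_id, total) VALUES (?, ?)",
    [(i % 100, i * 1.5) for i in range(1000)],
)

sql = "SELECT * FROM orders WHERE customer_id = ?"

# Without an index, SQLite has to scan every row of the table.
plan_before = query_plan(conn, sql, (42,))

# After adding an index on the filter column, the plan switches to a search.
conn.execute("CREATE INDEX idx_orders_customer ON orders (customer_id)")
plan_after = query_plan(conn, sql, (42,))

print(plan_before)  # a SCAN of orders (exact wording varies by SQLite version)
print(plan_after)   # a SEARCH that uses idx_orders_customer
```

Capturing the plan for the same statement over time is also one way to notice a query plan change.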

5. Throughput

Throughput measures how many transactions, queries, or operations your database processes per unit of time. It's a direct indicator of capacity utilization and helps you plan for growth.
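Many databases expose cumulative counters (total transactions or queries since startup) rather than a rate, so throughput is usually derived from two samples. The following is a minimal sketch under that assumption; the function name and sample values are illustrative.

```python
def throughput_per_second(prev_count, prev_ts, curr_count, curr_ts):
    """Derive operations per second from two samples of a cumulative counter.

    Returns None when the counter went backwards, which typically means the
    database restarted and the counter was reset between samples.
    """
    elapsed = curr_ts - prev_ts
    if elapsed <= 0:
        raise ValueError("samples must be in increasing time order")
    if curr_count < prev_count:
        return None
    return (curr_count - prev_count) / elapsed


# Hypothetical samples taken 60 seconds apart.
print(throughput_per_second(120_000, 0, 129_000, 60))  # 150.0
print(throughput_per_second(500, 0, 20, 60))           # None (counter reset)
```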

6. Security Events

Track failed login attempts, privilege changes, new account creation, and access patterns to sensitive tables. Anomalies in these patterns often indicate an active threat or misconfiguration.
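One simple way to surface anomalies in failed logins is a sliding-window count per account. This sketch assumes failed-login events have already been parsed into `(timestamp_seconds, account)` pairs; the threshold of 5 failures in 5 minutes and the account names are arbitrary example values.

```python
from collections import defaultdict, deque


def flag_failed_login_bursts(events, max_failures=5, window_seconds=300):
    """Return accounts with more than max_failures failed logins inside any
    sliding window of window_seconds. Events must be sorted by timestamp."""
    recent = defaultdict(deque)
    flagged = set()
    for ts, account in events:
        window = recent[account]
        window.append(ts)
        # Drop failures that have fallen out of the window.
        while ts - window[0] > window_seconds:
            window.popleft()
        if len(window) > max_failures:
            flagged.add(account)
    return flagged


# Hypothetical events: one account hammered 8 times in 70 seconds,
# another with two failures more than an hour apart.
events = sorted(
    [(i * 10, "app_ro") for i in range(8)] + [(0, "alice"), (4000, "alice")]
)
print(flag_failed_login_bursts(events))  # {'app_ro'}
```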

Common Problems Database Monitoring Solves

Performance Degradation

When applications slow down, the database is often the first suspect. Monitoring reveals whether the root cause is a bad query plan, resource contention, lock escalation, or infrastructure limitations. Without this data, troubleshooting becomes guesswork.

Resource Exhaustion

Databases consume CPU, memory, and storage at varying rates depending on workload. Monitoring tracks consumption trends so you can scale resources before hitting limits. Capacity planning based on historical data prevents the "emergency upgrade at 2 AM" scenario.

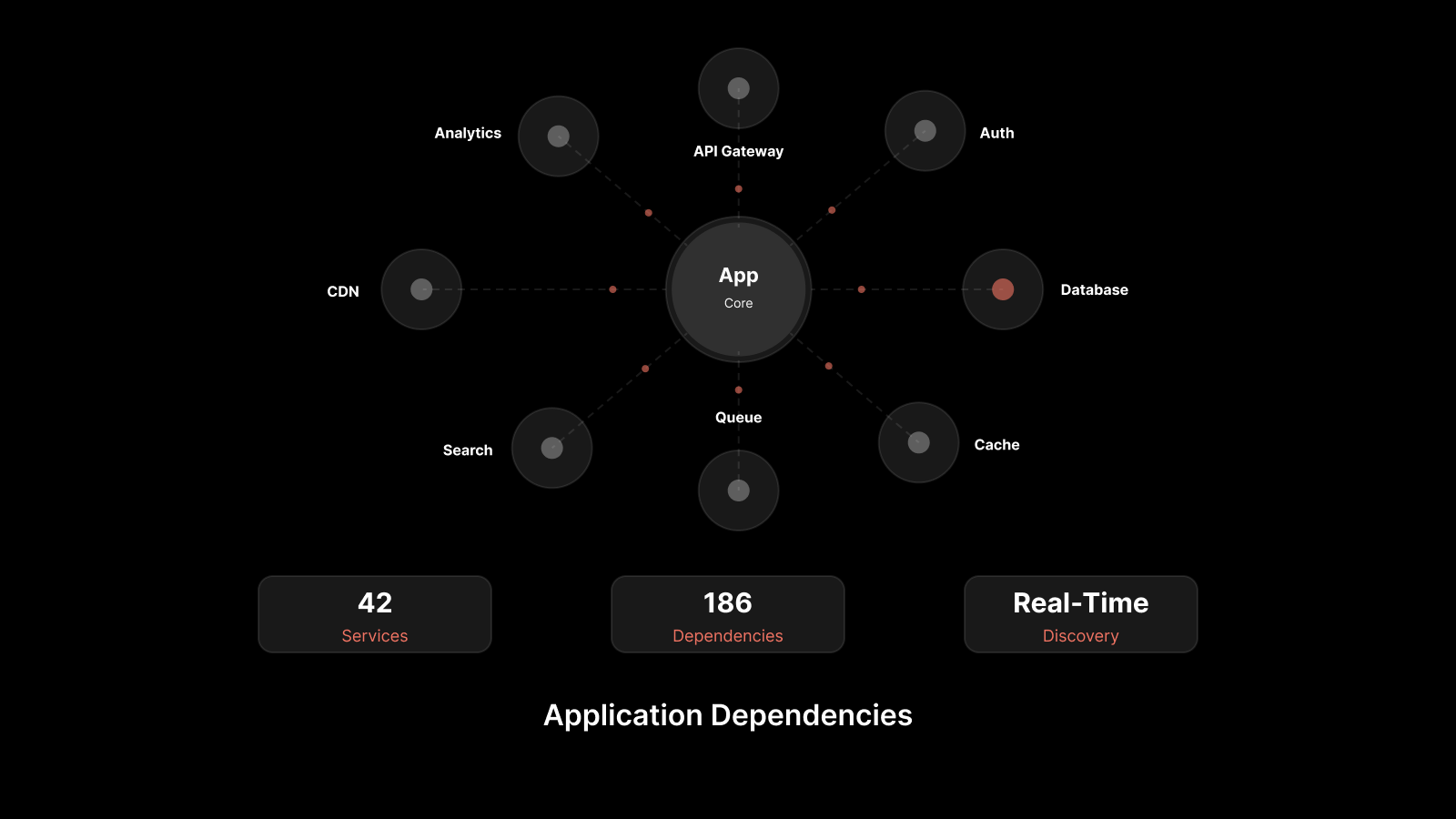

Anomaly Detection

Modern database monitoring tools use AI to establish baselines of normal behavior and alert you when something deviates. This catches issues that static thresholds miss -- like a gradual memory leak or a slowly growing table that will exhaust disk space in two weeks.
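The baseline idea can be illustrated with a very simple statistical check: compare a new reading with the mean and standard deviation of recent history. This is a sketch of the general concept only, not how any specific product implements anomaly detection, and the threshold of 3 standard deviations is an assumed default.

```python
from statistics import mean, stdev


def is_anomalous(history, value, z_threshold=3.0):
    """Flag value when it lies more than z_threshold standard deviations
    from the mean of history (the learned baseline)."""
    if len(history) < 2:
        return False  # not enough data to form a baseline
    baseline = mean(history)
    spread = stdev(history)
    if spread == 0:
        return value != baseline
    return abs(value - baseline) / spread > z_threshold


# Hypothetical memory-usage readings in percent.
history = [50, 52, 49, 51, 50, 48, 51, 49]
print(is_anomalous(history, 52))  # False
print(is_anomalous(history, 70))  # True
```

A check like this reacts to sudden deviations. Slow drifts such as a gradual leak move the baseline along with them, so they are better caught by looking at the trend over a longer period.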

Security Incidents

Database breaches often start with subtle signs: an unusual query pattern, a login from an unexpected location, or a privilege escalation that nobody requested. Continuous security monitoring catches these signals early enough to contain the threat.

Database Monitoring Best Practices

1. Monitor Slow Queries Proactively

Don't wait for users to complain about application sluggishness. Use application performance monitoring (APM) alongside database monitoring to identify slow queries automatically.

Set thresholds for query execution time and investigate anything that exceeds them. Common fixes include adding indexes, rewriting queries, and restructuring table schemas.
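A threshold check on execution time can be as simple as the sketch below. It assumes query timings are already available as `(query_text, duration_ms)` pairs, for example from a slow query log; the sample statements and the 500 ms threshold are hypothetical.

```python
from collections import defaultdict


def slow_query_report(samples, threshold_ms):
    """Summarize queries whose execution time exceeded threshold_ms.

    Returns (query_text, slow_count, worst_ms) tuples, worst offender first.
    """
    slow = defaultdict(list)
    for query_text, duration_ms in samples:
        if duration_ms > threshold_ms:
            slow[query_text].append(duration_ms)
    report = [(query, len(times), max(times)) for query, times in slow.items()]
    return sorted(report, key=lambda row: row[2], reverse=True)


samples = [
    ("SELECT * FROM orders WHERE customer_id = ?", 1800),
    ("SELECT * FROM orders WHERE customer_id = ?", 950),
    ("SELECT name FROM customers WHERE id = ?", 4),
    ("UPDATE inventory SET qty = qty - 1 WHERE sku = ?", 620),
]
for row in slow_query_report(samples, threshold_ms=500):
    print(row)
```

Grouping by query text keeps the report short and points at the statements worth investigating first.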

2. Track Resource Consumption Trends

Point-in-time resource snapshots don't tell the full story. Track CPU, memory, and disk usage over days and weeks to identify trends. A database that uses 60% CPU today might hit 90% next month if workload growth continues at the same rate.

Trend data also helps justify infrastructure investments with actual numbers rather than estimates.
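The projection from 60% to 90% CPU can be approximated by fitting a straight line to daily readings and extending it. The sketch below uses an ordinary least-squares fit and assumes roughly linear growth, which real workloads may not follow; the function name and sample readings are illustrative.

```python
def days_until_threshold(daily_values, threshold):
    """Fit a least-squares line to one reading per day and estimate how many
    days after the last reading the line reaches threshold.

    Returns None when usage is flat or falling, and 0.0 when the fitted line
    is already at or above the threshold.
    """
    n = len(daily_values)
    if n < 2:
        raise ValueError("need at least two readings")
    x_mean = (n - 1) / 2
    y_mean = sum(daily_values) / n
    sxx = sum((x - x_mean) ** 2 for x in range(n))
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in enumerate(daily_values))
    slope = sxy / sxx
    if slope <= 0:
        return None
    intercept = y_mean - slope * x_mean
    crossing_day = (threshold - intercept) / slope
    return max(crossing_day - (n - 1), 0.0)


# Hypothetical daily CPU averages in percent, ending at 60% today.
cpu_percent = [56, 57, 58, 59, 60]
print(days_until_threshold(cpu_percent, 90))  # 30.0
```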

3. Plan Capacity Before You Need It

Capacity planning uses historical monitoring data to forecast future resource needs. By analyzing workload patterns, seasonal peaks, and growth rates, you can provision infrastructure ahead of demand.

This prevents both over-provisioning (wasted spend) and under-provisioning (performance issues during spikes).

4. Automate Backups and Verify Them

Backups are worthless if they fail silently. Monitor backup jobs to confirm they complete successfully, finish within expected timeframes, and produce restorable copies.

Set up alerts for backup failures and test restore procedures regularly. A backup you've never tested is a backup you can't trust.
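The checks on backup jobs described above (completion, duration, freshness) could be expressed along these lines. The status string, field names, and the 24-hour and 2-hour limits are assumptions for the example; real backup tools report this metadata in their own formats.

```python
from datetime import datetime, timedelta


def backup_problems(status, finished_at, duration, now,
                    max_age=timedelta(hours=24),
                    max_duration=timedelta(hours=2)):
    """Return a list of human-readable problems with the latest backup job.
    An empty list means every check passed."""
    problems = []
    if status != "success":
        problems.append(f"last backup ended with status {status!r}")
    if now - finished_at > max_age:
        problems.append("last backup is older than the allowed maximum age")
    if duration > max_duration:
        problems.append("last backup took longer than expected")
    return problems


# Hypothetical job metadata.
now = datetime(2024, 1, 2, 6, 0)
print(backup_problems("success", datetime(2024, 1, 2, 1, 30),
                      timedelta(minutes=45), now))   # []
print(backup_problems("failed", datetime(2023, 12, 31, 1, 30),
                      timedelta(hours=3), now))      # three problems
```

Checks like these only confirm that a job ran. Whether the copy is restorable still has to be proven by an actual restore test.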

5. Use Monitoring Tools with Built-In Automation

Manual monitoring doesn't scale. Use tools that provide real-time dashboards, automated alerting, and AI-driven anomaly detection.

The right monitoring platform should:

Auto-discover database instances across your environment

Provide unified dashboards for all database types

Correlate database metrics with application and infrastructure data

Send intelligent alerts that reduce noise

6. Document Your Monitoring Procedures

Write down what you monitor, how you respond to alerts, and who handles escalations. Documentation ensures consistency across shifts and team members.

It also satisfies compliance requirements -- auditors want evidence that you have systematic monitoring processes in place, not ad hoc practices.

People Also Ask

What is the difference between database monitoring and database observability?

Database monitoring tracks predefined metrics and alerts on threshold violations. Database observability goes further -- it provides the ability to explore and understand database behavior using metrics, logs, and traces together. Observability answers "why" something happened, while monitoring tells you "what" happened.

How often should you monitor database performance?

Critical databases should be monitored continuously in real time. At a minimum, collect metrics at 30-60 second intervals for production databases. Less critical systems can use 5-minute intervals. Batch processing databases should be monitored during job execution windows.

Can you monitor databases in the cloud the same way as on-premises?

The principles are the same, but the implementation differs. Cloud databases (RDS, Azure SQL, Cloud SQL) expose metrics through provider APIs and services like CloudWatch or Azure Monitor. A unified monitoring platform that supports both cloud and on-premises databases gives you consistent visibility across hybrid environments.

What's the most important database metric to track?

There's no single answer because it depends on your priorities. For user-facing applications, response time matters most. For data pipelines, throughput is the priority. For regulated industries, security and compliance metrics take precedence. The best approach is to track a balanced set of metrics across all categories.

Monitor Your Databases with Motadata

Motadata provides AI-native database monitoring that gives your team complete visibility into database health, performance, and security from a single platform.

With Motadata, you can:

Track performance metrics in real time -- response time, CPU, memory, query details, and events across all your database instances.

Detect anomalies with AI -- Motadata's machine learning engine establishes baselines automatically and alerts you to deviations before they become outages.

Understand query impact -- see how database queries affect service availability without adding extra instrumentation.

Visualize everything in one dashboard -- customizable dashboards show performance trends, active alerts, and resource utilization at a glance.

| Metric | What Motadata Tracks |
|---|---|
| Response Time | Duration between query submission and database response |
| Memory Usage | System memory consumed by the database engine |
| CPU Usage | Processor capacity used by database operations |
| Query Details | Execution plans, duration, and optimization status |
| Events | User actions, system notifications, and operational patterns |
| Errors | Database errors, anomalies, and their root causes |

Stop reacting to database problems after they impact users. Start monitoring proactively with Motadata.


FAQs

What is database monitoring, and why is it important?

Database monitoring is the practice of continuously tracking database performance, availability, security, and resource usage. It's important because it helps IT teams detect issues early, optimize resource allocation, protect sensitive data, and maintain SLA compliance. Without it, teams react to problems after users are already affected.

What are the key metrics to monitor in a database?

The most important metrics include response time, CPU and memory usage, query execution performance, availability (uptime), throughput (transactions per second), disk I/O, and security events like failed logins. Together, these metrics give you a complete picture of database health.

How can database monitoring tools improve performance?

Monitoring tools provide real-time visibility into query execution, resource utilization, and system bottlenecks. By identifying slow queries, resource contention, and capacity limits early, DBAs can tune queries, adjust configurations, and scale resources before performance degrades noticeably.

What are the common challenges in database monitoring?

Key challenges include scaling monitoring as databases grow, dealing with alert noise from too many false positives, monitoring across hybrid (cloud + on-prem) environments, and correlating database issues with application-level impacts. Modern AI-driven tools address these challenges through automated baselining and intelligent alert filtering.

How does database monitoring contribute to security and compliance?

Database monitoring tracks access patterns, failed login attempts, privilege changes, and data transfers. This visibility helps detect unauthorized access, identify potential breaches early, and maintain audit trails required by regulations like GDPR, HIPAA, and SOC 2.

Author

Arpit Sharma

Senior Content Marketer

Arpit Sharma is a Senior Content Marketer at Motadata with over 8 years of experience in content writing. Specializing in telecom, fintech, AIOps, and ServiceOps, Arpit crafts insightful and engaging content that resonates with industry professionals. Beyond his professional expertise, he is an avid reader, enjoys running, and loves exploring new places.