What Is OpenTelemetry? Architecture, Components & How to Get Started

Motadata Team

OpenTelemetry is an open-source observability framework that provides standardized APIs, SDKs, and tools for generating, collecting, and exporting telemetry data -- traces, metrics, and logs -- from cloud-native applications, without locking you into any single vendor.

Your microservices architecture generates millions of spans per minute. Your metrics pipeline feeds three different backends. Your logging stack was configured by someone who left the company two years ago. Nothing correlates. When a production incident hits, your team spends the first 30 minutes figuring out which tool has the right data -- not actually debugging.

That fragmentation is the problem OpenTelemetry solves. Instead of maintaining separate instrumentation for every observability backend, you instrument once with OpenTelemetry and send data wherever you need it. That portability helps explain why it is the second most active Cloud Native Computing Foundation (CNCF) project after Kubernetes.

What Is OpenTelemetry and How Does It Work?

OpenTelemetry is an open-source observability framework made up of APIs, SDKs, and tools that IT teams use to generate, collect, and export telemetry data. It covers the three core observability signals -- traces, metrics, and logs -- and provides a consistent way to instrument applications regardless of language or deployment environment.

Here's how the data lifecycle works:

Instrumentation: APIs define what telemetry to capture. You either add manual instrumentation calls in your code or use auto-instrumentation libraries for common frameworks.

Collection: SDKs aggregate the generated data and prepare it for export, adding context like service name, deployment environment, and trace IDs.

Processing: The OpenTelemetry Collector receives data from multiple sources, applies filtering, sampling, and enrichment, then batches it for export.

Export: Processed telemetry gets sent to your backend of choice -- Jaeger, Prometheus, Grafana, or any OTLP-compatible destination.

The key distinction: OpenTelemetry handles instrumentation and transport. It doesn't store data, build dashboards, or fire alerts. That's your backend's job. This separation is what makes it vendor-neutral.
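The four stages above can be sketched in a few lines of plain Python. This is a toy model rather than the OpenTelemetry SDK: the `Span` class, the function names, and the service values are all made up for illustration, and the real data model is considerably richer.

```python
import uuid
from dataclasses import dataclass, field


@dataclass
class Span:
    """Toy stand-in for a span (hypothetical, not the SDK's class)."""
    name: str
    trace_id: str
    duration_ms: float
    attributes: dict = field(default_factory=dict)


def instrument(name: str, duration_ms: float) -> Span:
    # Instrumentation: application code (or an auto-instrumentation
    # library) records what happened.
    return Span(name=name, trace_id=uuid.uuid4().hex, duration_ms=duration_ms)


def sdk_enrich(span: Span, service_name: str, environment: str) -> Span:
    # Collection: the SDK attaches resource context before export.
    span.attributes["service.name"] = service_name
    span.attributes["deployment.environment"] = environment
    return span


def collector_process(spans: list, min_duration_ms: float) -> list:
    # Processing: a Collector-style filter that drops very fast spans.
    return [s for s in spans if s.duration_ms >= min_duration_ms]


def export(spans: list, backend: list) -> None:
    # Export: hand the batch to whichever backend is configured.
    backend.extend(spans)


backend = []  # stands in for Jaeger, Prometheus, or any OTLP destination
raw = [instrument("GET /health", 2.0), instrument("POST /checkout", 480.0)]
enriched = [sdk_enrich(s, "shop-api", "staging") for s in raw]
export(collector_process(enriched, min_duration_ms=50.0), backend)
```

Note that nothing in the sketch stores, charts, or alerts on the data; that part belongs to whatever `backend` represents.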

Why OpenTelemetry Matters for Modern Observability

OpenTelemetry addresses a fundamental problem in today's IT environment: observability fragmentation. Here's why it matters.

It standardizes data collection. Before OpenTelemetry, every observability vendor required its own agent, its own SDK, its own data format. Switching backends meant re-instrumenting your entire stack. OpenTelemetry eliminates that by providing one instrumentation layer that works with any backend.

It eliminates vendor lock-in. Your telemetry data flows through a standardized pipeline. Want to move from one backend to another? Change the exporter configuration. Your instrumentation stays the same. This gives decision-makers the freedom to choose tools based on capability and cost, not switching penalties.

It reduces overhead. Instead of running three separate agents for traces, metrics, and logs, you run one OpenTelemetry Collector. This cuts resource consumption, simplifies deployment, and gives your team one system to maintain instead of three.

It's becoming the industry standard. With backing from Google, Microsoft, AWS, and the CNCF, OpenTelemetry isn't a niche project. It's the default instrumentation layer for cloud-native applications. Teams that adopt it now won't need expensive re-instrumentation when the ecosystem fully converges on it.

It enables faster incident resolution. By providing correlated traces, metrics, and logs through semantic conventions, OpenTelemetry makes it straightforward to follow a request from ingress to database query and pinpoint exactly where latency or errors occur.
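To make the correlation idea concrete, here is a minimal sketch that joins spans and logs on a shared trace ID. The records are hand-written and hypothetical; in practice a backend performs this join at query time, and the field names here are simplified assumptions.

```python
from collections import defaultdict

# Hypothetical records; real data would come from a backend query.
spans = [
    {"trace_id": "a1", "name": "POST /checkout", "duration_ms": 912},
    {"trace_id": "a1", "name": "SELECT orders", "duration_ms": 870},
    {"trace_id": "b2", "name": "GET /cart", "duration_ms": 35},
]
logs = [
    {"trace_id": "a1", "severity": "ERROR", "body": "lock wait timeout"},
    {"trace_id": "b2", "severity": "INFO", "body": "cart loaded"},
]


def correlate(spans, logs):
    """Group spans and logs that share a trace ID."""
    by_trace = defaultdict(lambda: {"spans": [], "logs": []})
    for span in spans:
        by_trace[span["trace_id"]]["spans"].append(span)
    for log in logs:
        by_trace[log["trace_id"]]["logs"].append(log)
    return dict(by_trace)


def slowest_span(trace):
    """Return the span with the longest duration in one trace."""
    return max(trace["spans"], key=lambda s: s["duration_ms"])


traces = correlate(spans, logs)
# Trace IDs that carry at least one ERROR log.
failing = [
    trace_id
    for trace_id, trace in traces.items()
    if any(log["severity"] == "ERROR" for log in trace["logs"])
]
```

Starting from an error log, the shared ID leads straight to the spans of the same request, which is the step that otherwise eats the first half hour of an incident.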

Core Components of OpenTelemetry

OpenTelemetry's architecture breaks down into four primary components that work together to move telemetry data from your applications to your analysis backends.

The OpenTelemetry Collector

The Collector is the central processing hub. It's a vendor-neutral proxy that receives telemetry data, processes it, and exports it to one or more destinations. You can run it as an agent alongside your application or as a standalone gateway.

The Collector supports multiple data formats on input -- OTLP, Prometheus, Jaeger, Zipkin -- and can export to any compatible backend. It handles batching, retry logic, sampling, and data enrichment, which means your applications don't need to.
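As a rough illustration of what "batching and retry" means, the sketch below buffers items and flushes them in groups, retrying when the export call fails. `BatchBuffer`, `flaky_export`, and the parameter names are hypothetical; the Collector's own batching and retry behavior is configurable and more sophisticated (it backs off between attempts, for instance).

```python
class BatchBuffer:
    """Buffers items and flushes when the batch is full (toy version)."""

    def __init__(self, export_fn, max_batch_size=3, max_retries=2):
        self.export_fn = export_fn
        self.max_batch_size = max_batch_size
        self.max_retries = max_retries
        self.pending = []

    def add(self, item):
        self.pending.append(item)
        if len(self.pending) >= self.max_batch_size:
            self.flush()

    def flush(self):
        if not self.pending:
            return
        batch, self.pending = self.pending, []
        for _attempt in range(self.max_retries + 1):
            try:
                self.export_fn(batch)
                return
            except ConnectionError:
                continue  # a real implementation would back off here
        # Retries exhausted: this sketch simply drops the batch.


received = []
calls = {"count": 0}


def flaky_export(batch):
    """Hypothetical backend call that fails on its first invocation."""
    calls["count"] += 1
    if calls["count"] == 1:
        raise ConnectionError("backend unavailable")
    received.append(list(batch))


buffer = BatchBuffer(flaky_export, max_batch_size=3)
for i in range(7):
    buffer.add(f"span-{i}")
buffer.flush()  # flush the final partial batch
```

Seven spans end up as three export batches, and the one transient failure is absorbed by a retry without the application noticing.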

Language SDKs

SDKs provide the language-specific implementation of the OpenTelemetry API. They're available for Java, Python, Go, JavaScript/Node.js, .NET, Ruby, and more. Each SDK handles span creation, metric recording, context propagation, and data export for its respective language.

The SDK is where configuration happens: sampling rates, resource attributes, export endpoints, and batch sizes all get set here.
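Much of this configuration can be supplied through the standard `OTEL_*` environment variables. The sketch below reads a handful of them into a plain dictionary to show how the settings map together. The `load_sdk_config` helper is hypothetical and the defaults are simplified; the real SDKs parse these variables themselves.

```python
def parse_resource_attributes(raw: str) -> dict:
    """Parse 'key1=value1,key2=value2' into a dict."""
    attrs = {}
    for pair in raw.split(","):
        if "=" in pair:
            key, value = pair.split("=", 1)
            attrs[key.strip()] = value.strip()
    return attrs


def load_sdk_config(env: dict) -> dict:
    """Collect SDK settings from an environment mapping (e.g. os.environ)."""
    return {
        # Simplified default; SDKs may append the process name.
        "service_name": env.get("OTEL_SERVICE_NAME", "unknown_service"),
        "resource_attributes": parse_resource_attributes(
            env.get("OTEL_RESOURCE_ATTRIBUTES", "")
        ),
        "endpoint": env.get("OTEL_EXPORTER_OTLP_ENDPOINT", "http://localhost:4317"),
        "sampler": env.get("OTEL_TRACES_SAMPLER", "parentbased_always_on"),
        "sampler_arg": float(env.get("OTEL_TRACES_SAMPLER_ARG", "1.0")),
        "max_export_batch_size": int(
            env.get("OTEL_BSP_MAX_EXPORT_BATCH_SIZE", "512")
        ),
    }


# Example values are made up for illustration.
example_env = {
    "OTEL_SERVICE_NAME": "checkout-service",
    "OTEL_RESOURCE_ATTRIBUTES": "deployment.environment=staging,service.version=1.4.2",
    "OTEL_TRACES_SAMPLER": "parentbased_traceidratio",
    "OTEL_TRACES_SAMPLER_ARG": "0.25",
}
config = load_sdk_config(example_env)
```

Keeping these values in the environment rather than in code is what lets the same build run with different sampling rates or endpoints per deployment.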

Auto-Instrumentation Libraries

For popular frameworks and libraries -- HTTP servers, database drivers, messaging clients -- OpenTelemetry provides auto-instrumentation packages. These generate telemetry data without requiring code changes.

In Python, you can instrument a Flask application by adding a single package. In Java, a javaagent attachment instruments your entire application at startup. The trade-off: auto-instrumentation covers common patterns but won't capture business-specific telemetry. For that, you'll need manual instrumentation alongside it.
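The difference between the two approaches is easier to see in code. The sketch below is a conceptual model only: `traced`, `FakeDbDriver`, and `apply_discount` are hypothetical names, and real auto-instrumentation packages do far more than this wrapper. The underlying idea is similar, though: library calls get wrapped from the outside, while business logic has to be wrapped deliberately.

```python
import functools
import time

recorded_spans = []  # stand-in for an exporter


def traced(span_name):
    """Decorator that records a span-like dict around a function call."""
    def decorator(func):
        @functools.wraps(func)
        def wrapper(*args, **kwargs):
            start = time.perf_counter()
            status = "OK"
            try:
                return func(*args, **kwargs)
            except Exception:
                status = "ERROR"
                raise
            finally:
                recorded_spans.append({
                    "name": span_name,
                    "status": status,
                    "duration_s": time.perf_counter() - start,
                })
        return wrapper
    return decorator


class FakeDbDriver:
    """Hypothetical library class standing in for a database driver."""

    def query(self, sql):
        return [("row",)]


# "Auto-instrumentation": patch the library method; callers are untouched.
FakeDbDriver.query = traced("db.query")(FakeDbDriver.query)


# Manual instrumentation: wrap business logic explicitly.
@traced("checkout.apply_discount")
def apply_discount(total, percent):
    return round(total * (1 - percent / 100), 2)


FakeDbDriver().query("SELECT 1")
discounted = apply_discount(200.0, 15)
```

The patched driver produces a span for every query with no change to calling code, but only the explicit decorator knows that a discount was applied.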

Exporters

Exporters are the bridge between the OpenTelemetry SDK and your backend. They translate OpenTelemetry's internal data format into whatever your destination expects. Switching backends is as simple as swapping the exporter configuration -- your instrumentation code stays untouched.
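A small sketch shows why the swap is cheap: instrumentation depends only on an exporter interface, and configuration decides which implementation is behind it. The class names and the `EXPORTERS` registry here are invented for illustration and are not the SDK's exporter classes.

```python
import json
from typing import Protocol


class SpanExporter(Protocol):
    """Minimal exporter interface assumed for this sketch."""

    def export(self, spans: list) -> None: ...


class JsonLinesExporter:
    """Serializes spans to JSON lines, kept in memory for the example."""

    def __init__(self):
        self.lines = []

    def export(self, spans):
        self.lines.extend(json.dumps(s, sort_keys=True) for s in spans)


class InMemoryBackendExporter:
    """Stores span dicts as-is, standing in for a different backend."""

    def __init__(self):
        self.stored = []

    def export(self, spans):
        self.stored.extend(spans)


EXPORTERS = {"jsonlines": JsonLinesExporter, "memory": InMemoryBackendExporter}


def build_exporter(config: dict) -> SpanExporter:
    # The only place that knows which backend is in use.
    return EXPORTERS[config["exporter"]]()


def handle_request(exporter: SpanExporter) -> None:
    # Instrumentation code: identical regardless of the exporter.
    exporter.export([{"name": "GET /orders", "status": "OK"}])


first = build_exporter({"exporter": "jsonlines"})
second = build_exporter({"exporter": "memory"})
handle_request(first)
handle_request(second)
```

`handle_request` never changes between the two runs; only the configuration value passed to `build_exporter` does.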

OpenTelemetry vs OpenTracing vs OpenCensus

OpenTelemetry didn't appear from nothing. It's the result of merging two earlier open-source projects, OpenTracing (hosted by the CNCF) and OpenCensus (started at Google), that were solving the same problem from different angles.

| Aspect | OpenTracing | OpenCensus | OpenTelemetry |
|---|---|---|---|
| Origin | CNCF (2016) | Google (2018) | Merger of both (2019) |
| Scope | Distributed tracing only | Tracing + metrics | Tracing + metrics + logs |
| Type | API specification (no implementation) | Libraries with built-in exporters | Full framework (APIs + SDKs + Collector) |
| Status | Archived | Archived (sunset in 2023) | Active development |
| Auto-instrumentation | Limited | Moderate | Extensive |
| Collector | None | Limited | Full-featured, vendor-neutral |

The merger consolidated the community around a single standard. If you're currently using OpenTracing or OpenCensus, migration paths exist, and the OpenTelemetry documentation provides specific guides for each.

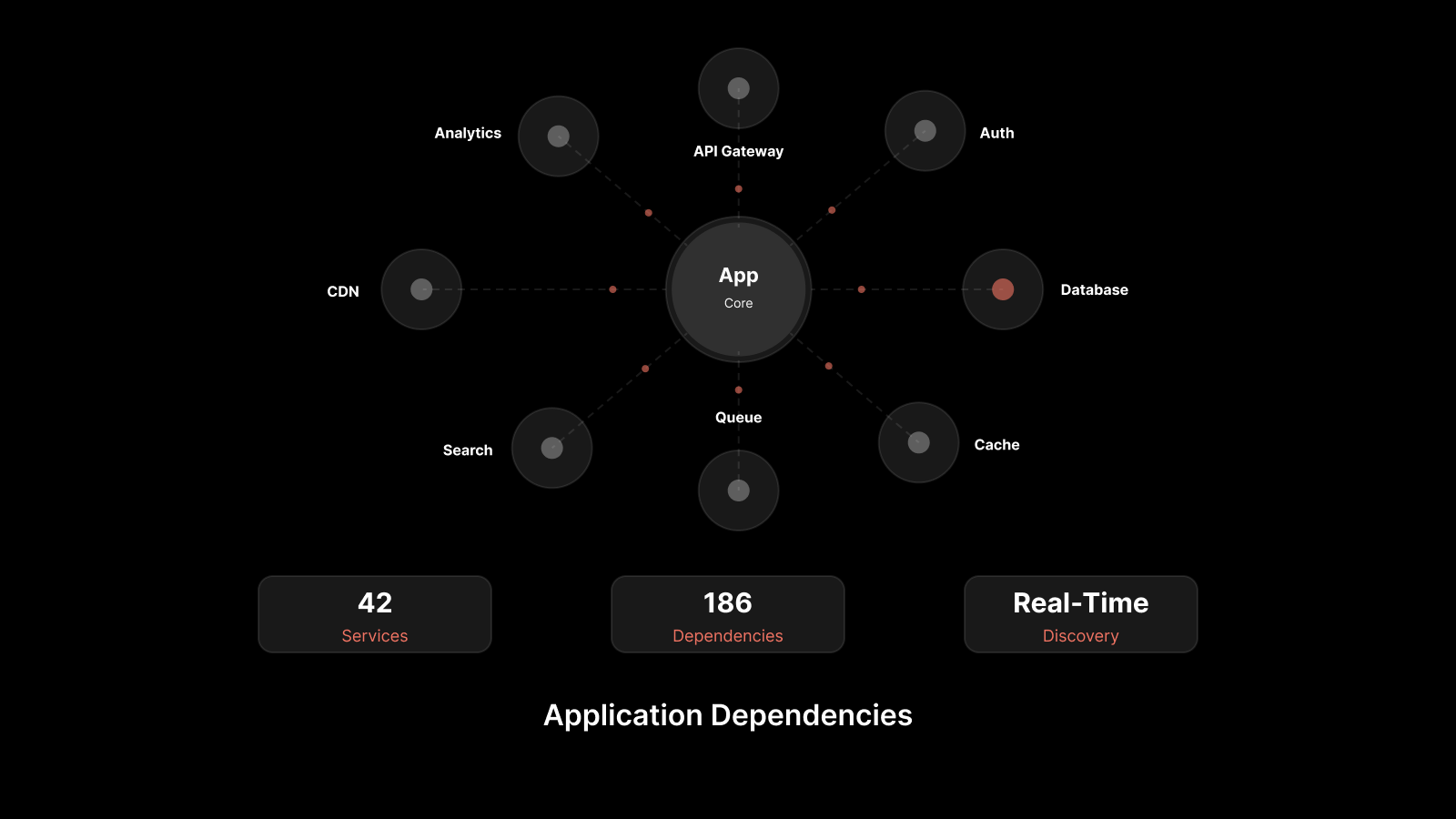

OpenTelemetry Architecture: How the Pieces Fit Together

The architecture follows a clear data flow:

Application Layer: Your services produce telemetry using OpenTelemetry APIs and SDKs. Auto-instrumentation libraries handle common frameworks. Manual instrumentation covers business-specific logic.

Transport Layer: The OpenTelemetry Protocol (OTLP) defines a standardized wire format for encoding traces, metrics, and logs. OTLP supports both gRPC and HTTP/protobuf transport, ensuring consistent data encoding across all components.
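For a feel of what travels over the wire, the sketch below builds a minimal trace payload in OTLP's JSON encoding, the same structure the protobuf messages describe. The helper names and sample values are assumptions made for the example, and only string attributes are handled.

```python
import json
import secrets
import time


def otlp_attr(key, value):
    # Only string values are handled in this sketch.
    return {"key": key, "value": {"stringValue": str(value)}}


def build_otlp_trace_payload(service_name, span_name, start_ns, end_ns):
    """Build one resource with one scope and one span."""
    return {
        "resourceSpans": [{
            "resource": {"attributes": [otlp_attr("service.name", service_name)]},
            "scopeSpans": [{
                "scope": {"name": "manual-sketch"},  # hypothetical scope name
                "spans": [{
                    "traceId": secrets.token_hex(16),  # 16 bytes, hex-encoded
                    "spanId": secrets.token_hex(8),    # 8 bytes, hex-encoded
                    "name": span_name,
                    "kind": 2,  # SPAN_KIND_SERVER
                    "startTimeUnixNano": str(start_ns),
                    "endTimeUnixNano": str(end_ns),
                    "attributes": [otlp_attr("http.request.method", "GET")],
                }],
            }],
        }]
    }


now = time.time_ns()
payload = build_otlp_trace_payload("shop-api", "GET /orders", now, now + 5_000_000)
body = json.dumps(payload)
```

A body like this could be sent with `Content-Type: application/json` to a Collector's OTLP/HTTP traces endpoint, which by default is `/v1/traces` on port 4318. In practice the SDK exporters build and send these payloads for you.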

Processing Layer: The Collector receives OTLP data (or other formats via receivers), runs it through configurable pipelines of processors (filtering, sampling, enrichment, batching), and sends it to one or more exporters.

Backend Layer: Your observability platform -- whether it's Prometheus for metrics, Jaeger for traces, or a unified platform like Motadata -- receives the processed data for storage, querying, and visualization.

This layered approach means you can change any component without affecting the others. Swap your backend without touching instrumentation. Add a new language without reconfiguring the Collector. Scale the Collector independently of your applications.

Getting Started with OpenTelemetry

Adopting OpenTelemetry doesn't require a big-bang migration. Here's a practical path.

Step 1: Pick Your First Service

Start with a service that's well-understood and actively causing debugging pain. A high-traffic API gateway or a service with known latency issues makes a good candidate.

Step 2: Add Auto-Instrumentation

Install the OpenTelemetry SDK and auto-instrumentation libraries for your language. For Python, that's opentelemetry-distro and opentelemetry-instrumentation. For Java, it's the OpenTelemetry javaagent. This alone will produce traces for HTTP requests, database calls, and messaging operations.

Step 3: Deploy the Collector

Run the OpenTelemetry Collector as a sidecar or standalone service. Configure it to receive OTLP data from your instrumented service and export to your current backend. The Collector handles batching, retry, and back-pressure so your application doesn't have to.

Step 4: Add Manual Instrumentation

Once auto-instrumentation is producing data, add manual spans and metrics for business-specific operations -- payment processing steps, search query execution, or workflow state transitions. This is where observability goes from "I can see HTTP requests" to "I can see why this checkout failed."
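The sketch below imitates what manual spans give you, using only the standard library: nested spans that share a trace ID, parent links, and business attributes explaining a failure. `start_span` and `checkout` are hypothetical helpers. With the actual Python API you would obtain a tracer and use `tracer.start_as_current_span(...)` plus `span.set_attribute(...)` instead.

```python
import contextvars
import secrets
from contextlib import contextmanager

_current_span = contextvars.ContextVar("current_span", default=None)
finished_spans = []  # stand-in for an exporter


@contextmanager
def start_span(name, **attributes):
    """Open a span-like dict as a child of whatever span is current."""
    parent = _current_span.get()
    span = {
        "name": name,
        "trace_id": parent["trace_id"] if parent else secrets.token_hex(16),
        "span_id": secrets.token_hex(8),
        "parent_span_id": parent["span_id"] if parent else None,
        "attributes": dict(attributes),
        "status": "OK",
    }
    token = _current_span.set(span)
    try:
        yield span
    except Exception as exc:
        span["status"] = "ERROR"
        span["attributes"]["exception.message"] = str(exc)
        raise
    finally:
        _current_span.reset(token)
        finished_spans.append(span)


def checkout(order_id, amount):
    with start_span("checkout", order_id=order_id):
        with start_span("payment.authorize", amount=amount) as span:
            if amount > 1000:  # made-up business rule
                span["attributes"]["decline_reason"] = "limit_exceeded"
                raise ValueError("card limit exceeded")
        return "confirmed"


try:
    checkout("ord-42", 1500)
except ValueError:
    pass  # the failure is already recorded on both spans
```

The `decline_reason` attribute on the inner span is the kind of detail no auto-instrumentation library can infer, and it is what turns "the request returned an error" into "this checkout failed because the card limit was exceeded."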

Step 5: Expand and Optimize

Roll out to additional services. Configure sampling strategies to manage data volume. Set up the Collector's processing pipeline to filter noise, enrich data with infrastructure metadata, and route different signal types to different backends as needed.
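One common sampling strategy is tail-based: wait until a trace is complete, then always keep the interesting ones and only a fraction of the rest. The function below is a minimal sketch of that decision. The thresholds, field names, and bucketing scheme are assumptions for the example, not the Collector's implementation.

```python
def keep_trace(spans, latency_threshold_ms=500, baseline_ratio=0.1):
    """Decide once per trace, after all of its spans have arrived."""
    if any(s["status"] == "ERROR" for s in spans):
        return True  # always keep failures
    if max(s["duration_ms"] for s in spans) >= latency_threshold_ms:
        return True  # always keep slow traces
    # Otherwise keep a deterministic fraction, keyed on the trace ID so
    # that repeated evaluations of the same trace agree.
    bucket = int(spans[0]["trace_id"][-8:], 16) / 0xFFFFFFFF
    return bucket < baseline_ratio


def make_trace(trace_id, status, duration_ms):
    """Build a single-span trace for the example."""
    return [{"trace_id": trace_id, "status": status, "duration_ms": duration_ms}]


HIGH_ID = "f" * 32        # falls in the top bucket (1.0)
LOW_ID = "0" * 31 + "1"   # falls in the bottom bucket (about 0.0)

decisions = {
    "error": keep_trace(make_trace(HIGH_ID, "ERROR", 20)),
    "slow": keep_trace(make_trace(HIGH_ID, "OK", 900)),
    "healthy_high_bucket": keep_trace(make_trace(HIGH_ID, "OK", 20)),
    "healthy_low_bucket": keep_trace(make_trace(LOW_ID, "OK", 20)),
}
```

Errors and slow requests survive regardless of the ratio, so lowering `baseline_ratio` reduces volume without discarding the traces you are most likely to need during an incident.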

Challenges and How to Address Them

| Challenge | Impact | Solution |
|---|---|---|
| Learning curve for SDK configuration | Slows initial adoption | Start with auto-instrumentation; add manual spans incrementally |
| Data volume at scale | Storage costs, processing overhead | Implement tail-based sampling in the Collector |
| Inconsistent instrumentation across teams | Fragmented observability | Define organization-wide semantic conventions and naming standards |
| Performance overhead concerns | Latency-sensitive services hesitate | Overhead is typically in the 1-3% CPU range -- benchmark your own workload first, then decide |
| Migration from legacy instrumentation | Dual-running increases complexity | Run OTel alongside existing agents, migrate service by service |
| Keeping up with fast-moving releases | API changes, new features | Pin SDK versions, follow the OTel changelog, upgrade quarterly |

What IT Teams Should Also Know

Does OpenTelemetry replace my APM tool?

No. OpenTelemetry replaces the instrumentation layer -- the agents and SDKs that generate and collect data. Your APM tool still handles storage, visualization, alerting, and analysis. Think of OpenTelemetry as the data pipeline and your APM as the data consumer.

How does OpenTelemetry relate to observability platforms?

OpenTelemetry feeds data into observability platforms. It's the instrumentation and transport standard. Platforms like Motadata, Grafana, and Datadog consume that data for analysis. Many platforms now accept OTLP natively, making integration straightforward.

What about performance overhead?

OpenTelemetry is designed for production use. The overhead is typically 1-3% CPU with minimal memory impact, though the exact figure depends on language, workload, and sampling rate, so measure it on your own services. The Collector's batching and sampling capabilities let you control the trade-off between data completeness and resource consumption.

Can I use OpenTelemetry with Kubernetes?

Absolutely. The OpenTelemetry Operator for Kubernetes automates SDK injection and Collector deployment. It manages auto-instrumentation annotations, Collector scaling, and sidecar injection, making Kubernetes the most streamlined environment for running OpenTelemetry.

How Motadata Delivers OpenTelemetry-Powered Observability

Motadata integrates natively with OpenTelemetry, ingesting OTLP traces, metrics, and logs into its AI-native observability platform. The platform correlates telemetry signals with infrastructure data, applies machine learning for anomaly detection, and surfaces actionable insights -- cutting MTTR and giving your team a single pane of glass across your entire stack.

If you're adopting OpenTelemetry, Motadata gives you the backend that makes that telemetry actionable.

Request a demo to see how Motadata transforms OpenTelemetry data into operational intelligence.

FAQs

What types of telemetry data does OpenTelemetry collect?

OpenTelemetry collects three signal types: traces (the path of a request through distributed services), metrics (numerical measurements like latency, error rates, and throughput), and logs (timestamped records of events). Together, these signals provide the foundation for full-stack observability.

How does OpenTelemetry handle security and data privacy?

OpenTelemetry supports TLS encryption for data in transit, configurable authentication for Collector endpoints, and data scrubbing processors that can redact sensitive fields before export. You control what data gets collected and where it goes.
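As an illustration of the scrubbing step, the sketch below redacts attributes by key and masks email addresses inside string values before anything leaves the process. The key list, the pattern, and the `scrub_attributes` function are hypothetical; in a real deployment this job is usually done by Collector processors configured for your own data.

```python
import re

# Hypothetical deny-list; tailor it to the data your services handle.
SENSITIVE_KEYS = {"password", "authorization", "credit_card"}
EMAIL_PATTERN = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")


def scrub_attributes(attributes: dict) -> dict:
    """Return a copy with sensitive keys redacted and emails masked."""
    cleaned = {}
    for key, value in attributes.items():
        if key.lower() in SENSITIVE_KEYS:
            cleaned[key] = "[REDACTED]"
        elif isinstance(value, str):
            cleaned[key] = EMAIL_PATTERN.sub("[EMAIL]", value)
        else:
            cleaned[key] = value  # non-string values pass through
    return cleaned


scrubbed = scrub_attributes({
    "http.route": "/login",
    "password": "hunter2",
    "user.note": "contact jane.doe@example.com today",
    "retries": 3,
})
```

Scrubbing in the pipeline rather than in each service gives one place to audit what leaves your network.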

What's the difference between OpenTelemetry and Prometheus?

Prometheus is a metrics collection and alerting system with its own scrape-based (pull) data model. OpenTelemetry is a broader framework covering traces, metrics, and logs, and its native protocol, OTLP, is primarily push-based. They're complementary -- OpenTelemetry can export metrics in Prometheus format, the Collector can ingest Prometheus metrics, and many teams use both together.

How long does it take to implement OpenTelemetry?

For a single service with auto-instrumentation, you can be producing traces in under an hour. A full rollout across a microservices architecture typically takes weeks to months, depending on the number of services, languages involved, and the depth of manual instrumentation you want.

Does OpenTelemetry work with serverless and edge computing?

Yes. OpenTelemetry SDKs work in AWS Lambda, Azure Functions, Google Cloud Functions, and other serverless environments. The main consideration is cold start impact and the need for lightweight exporters. The community provides specific guidance for serverless instrumentation patterns.

Articles produced collaboratively by our engineering and editorial teams bear the collective authorship of Motadata Team.