Top Server Performance Monitoring Strategies for 2025

Amartya Gupta

What is Server Monitoring?

Server monitoring is the continuous monitoring of the server-related parts of your network infrastructure. Its goal is to analyze their resource utilization trends and then optimize that utilization for a smooth end-user experience.

The concept of server monitoring is straightforward. It is the collection of data from servers, followed by real-time or historical analysis of that data. The analysis makes sure that network servers are free of impending issues and are performing optimally, so that they fulfill their intended function.

In this blog, we will answer the top three questions about server monitoring and cover server monitoring best practices along the way:

- How important is server monitoring for your organization?

- What are some server monitoring best practices that you can implement?

- What are the key highlights of Motadata’s solution that help in measuring, monitoring, managing and controlling server resources in an organization?

Before we delve deeper into each section, keep in mind what monitoring solutions must do. They need to handle high data volumes while presenting information in real time, managing servers, and proactively alerting teams about trouble before downtime occurs.

How Important is Server Monitoring for Your Organization?

As an organization’s IT infrastructure expands, the strategy behind server monitoring becomes more and more complex and dispersed.

Servers generate data in large quantities, and there is a pressing need for automation to analyze this data quickly.

This is where AI-driven server monitoring automation helps. It lets IT managers and leaders crunch large amounts of data in less time and derive insights using fewer resources. The result is added business value in the form of better service delivery.

An organization with effective monitoring can minimize downtime and prevent revenue loss in the form of lost opportunities, lost productivity, and SLA penalties.

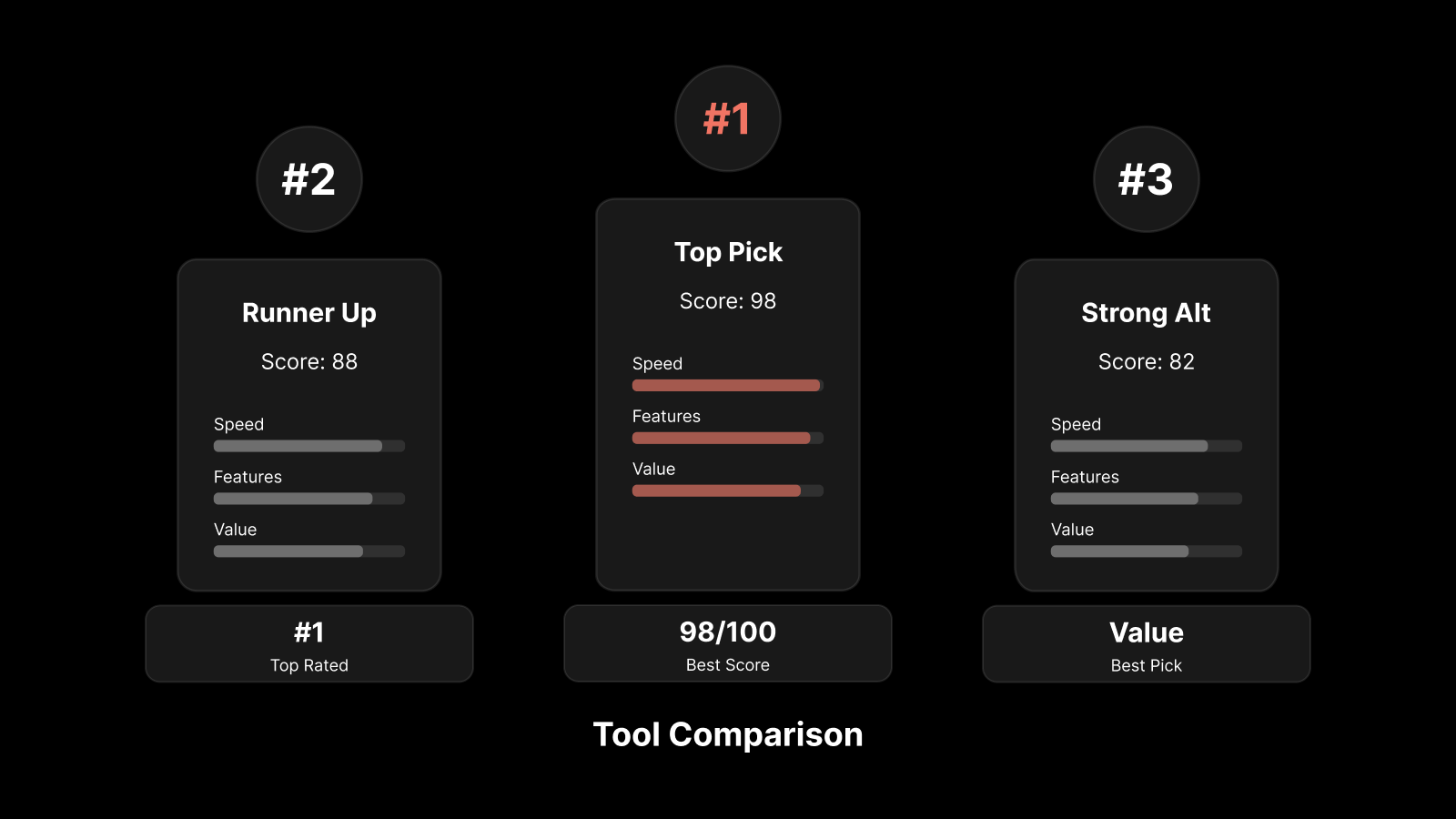

It is important to choose a monitoring tool that allows the organization to establish effective monitoring practices. Doing so generates ROI in the form of better service quality from existing IT resources.

What are Some Server Monitoring Best Practices that You can Implement?

The main takeaway is this: a well-thought-out monitoring plan can save an organization from major issues. That plan should be built on server monitoring best practices and implemented with a comprehensive, modern monitoring solution.

Here are some of those best practices:

1. Define the Normal

Drawing a baseline that defines acceptable server behavior is the starting point for an effective server performance monitoring strategy. Creating a baseline essentially means defining what normal behavior looks like for your servers.

Deviations from normal patterns indicate signs of trouble. When such a deviation occurs, IT administrators can isolate the problem and take the necessary action.

Another benefit of defining a baseline is that it provides early indicators that servers are approaching their available capacity.

This information is important for managers to plan for upgrades as well as growth.
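The sketch below shows one simple way to turn "define the normal" into something a script can check. It makes several assumptions. The baseline is just the mean and standard deviation of past readings. A reading counts as a deviation when it falls more than three standard deviations from the mean. An early capacity warning fires when the normal upper range (mean plus two standard deviations) passes 80% of capacity. The function names, thresholds and sample data are hypothetical. Real monitoring tools may use richer baselines, such as ones that vary by hour or weekday.

```python
from statistics import mean, stdev

def build_baseline(samples):
    """Summarize historical readings of one metric as (mean, standard deviation)."""
    return mean(samples), stdev(samples)

def is_deviation(value, baseline, k=3.0):
    """Flag a reading more than k standard deviations away from the baseline mean."""
    mu, sigma = baseline
    if sigma == 0:
        return value != mu
    return abs(value - mu) > k * sigma

def near_capacity(baseline, capacity, threshold=0.8):
    """Early warning: the normal upper range (mean + 2 sigma) exceeds threshold * capacity."""
    mu, sigma = baseline
    return mu + 2 * sigma > threshold * capacity

cpu_history = [42, 45, 40, 44, 43, 41, 46, 44]  # hypothetical hourly CPU % from a normal week
baseline = build_baseline(cpu_history)
print(is_deviation(47, baseline))   # → False (within the normal range)
print(is_deviation(90, baseline))   # → True (worth investigating)
print(near_capacity(baseline, 100)) # → False (plenty of headroom)
```

The choice of `k` controls how sensitive the alerts are. A smaller value catches problems sooner but produces more false alarms.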

2. Monitor Core Usage on the Server

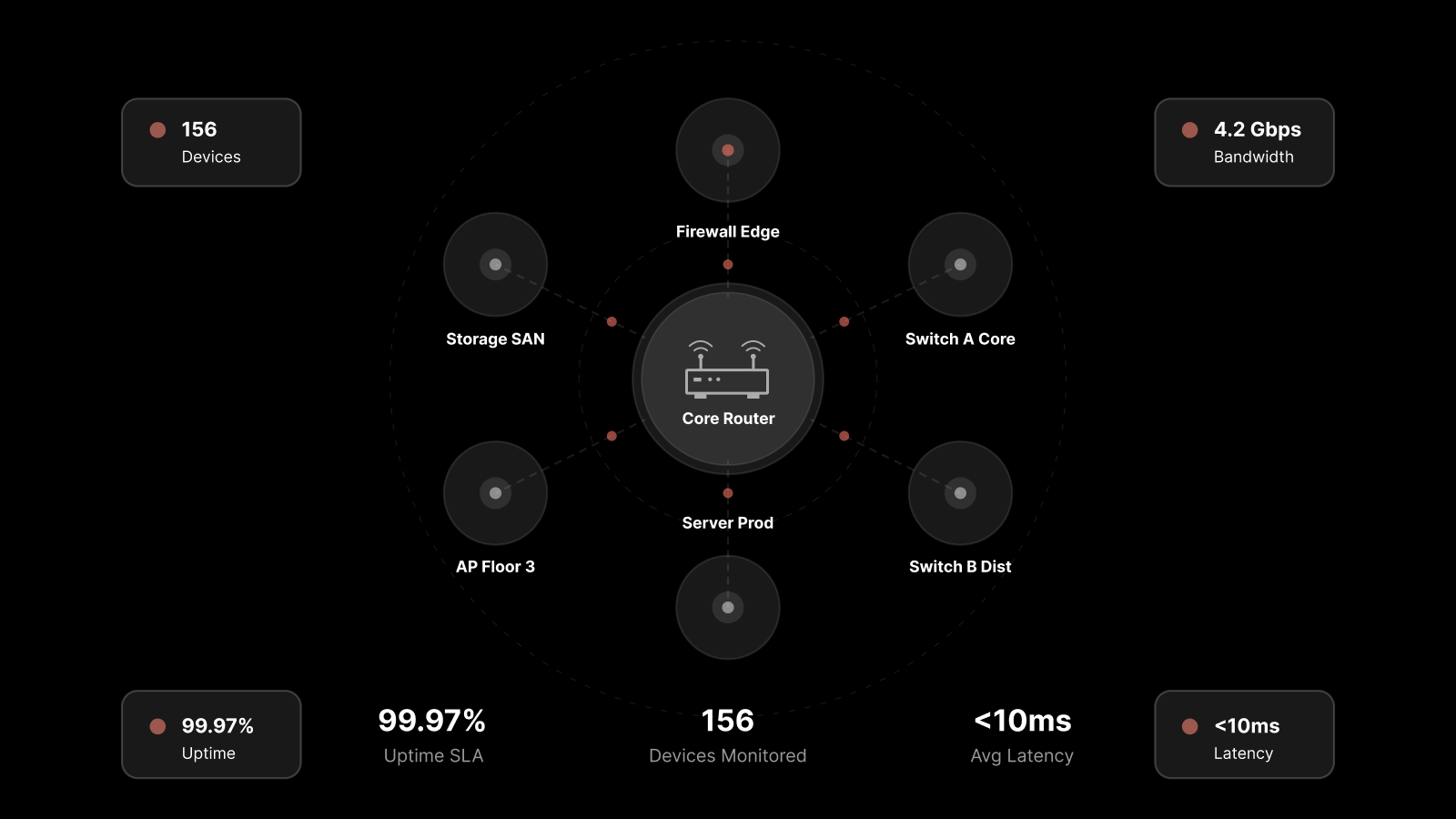

Core monitoring for servers involves collecting CPU usage, drive space, memory usage, and bandwidth metrics.

This is one of the most rewarding practices, helping network admins maintain a high-performance IT infrastructure for their organization.

Core monitoring gives IT leaders, administrators, and technicians a clear, minute-by-minute view of server performance.

As a result, they can spot early warning signs and detect potential issues before those issues wreck the performance of the servers.

Apart from visualizing performance parameters, core monitoring enables fast detection of server offenses and protocol failures.
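As a rough illustration, the sketch below checks the four core metrics against alert thresholds. Drive space is read with Python's standard `shutil.disk_usage`. The CPU, memory and bandwidth values are placeholders, on the assumption that an agent or a library such as psutil would supply them in practice. The threshold values and function names are hypothetical.

```python
import shutil

# Hypothetical alert thresholds (percent) for the four core metrics.
THRESHOLDS = {"cpu": 85.0, "memory": 90.0, "disk": 80.0, "bandwidth": 75.0}

def disk_used_percent(path="/"):
    """Percentage of drive space used on the filesystem that holds `path`."""
    usage = shutil.disk_usage(path)
    return usage.used / usage.total * 100

def breaches(readings, thresholds=THRESHOLDS):
    """Return, sorted by name, the metrics whose reading is above its threshold."""
    return sorted(
        name for name, value in readings.items()
        if name in thresholds and value > thresholds[name]
    )

# CPU, memory and bandwidth are placeholder values here; a real collector
# would refresh all four readings every minute.
readings = {"cpu": 92.5, "memory": 61.0, "disk": disk_used_percent(), "bandwidth": 40.0}
print(breaches(readings))  # includes "cpu"; "disk" appears only if this machine's disk is over 80%
```

Running this check on a schedule, such as once a minute, gives the minute-by-minute view described above.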

3. Define an Escalation Matrix

Most enterprises spend huge amounts on sophisticated server performance monitoring software but still end up fire-fighting serious server issues.

These tools work best as early alarms for potential problems and can even help you identify the exact issue.

However, once the tool has flagged the problem, someone responsible must take the required action.

An escalation matrix defines whom to contact for which problems. It typically includes IT staff and, where applicable, third-party vendors or contractors.

Having an escalation matrix helps in reducing the gap between knowing there is a problem and resolving it promptly.

It ensures that issues are looked at and resolved on time. This prevents a small, known issue from growing into an enterprise-wide problem.
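An escalation matrix is essentially a lookup table, so it maps naturally onto a small data structure. The sketch below is one possible shape under stated assumptions. Each problem category has an ordered list of contacts. Each contact takes over once an issue has stayed open for a set number of minutes. The categories, contact names and timings are all hypothetical.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Level:
    contact: str
    after_minutes: int  # escalate to this contact once the issue has been open this long

# Hypothetical matrix: problem category -> escalation levels, first responder first.
ESCALATION_MATRIX = {
    "database": [Level("dba-on-call", 0), Level("dba-lead", 30), Level("db-vendor-support", 120)],
    "network": [Level("noc-on-call", 0), Level("network-lead", 15), Level("isp-contractor", 60)],
}
DEFAULT_LEVELS = [Level("it-helpdesk", 0), Level("it-manager", 60)]

def who_to_contact(category, minutes_open):
    """Return the contact for the highest escalation level reached so far."""
    levels = ESCALATION_MATRIX.get(category, DEFAULT_LEVELS)
    current = levels[0]
    for level in levels:
        if minutes_open >= level.after_minutes:
            current = level
    return current.contact

print(who_to_contact("database", 45))  # → dba-lead
```

Keeping a fallback list for unmapped categories means no alert ends up with nobody responsible for it.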

4. Generate & Monitor Regular Reports

Receiving notifications when your monitoring tool detects off-the-mark server performance is necessary.

However, when you strategize server performance monitoring, you should also incorporate practices that keep you informed when servers are functioning normally.

You might wonder: why should you look at reports when everything is working fine?

The reason is that while you are busy with priority IT tasks, it is easy to overlook the need to modify monitoring parameters as requirements change.

Configuring one or more reports to be delivered to your inbox, say, on a weekly basis, is a great way to stay on top of recent server performance results and to spot trends.
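A weekly report usually boils down to a few summary statistics per metric plus a comparison with the previous period. The sketch below computes minimum, average, maximum and 95th percentile using Python's `statistics` module, along with a week-over-week change in the average. The metric and sample values are hypothetical. How the report is delivered to your inbox is left out.

```python
from statistics import mean, quantiles

def weekly_summary(samples):
    """Min / average / max / 95th percentile of one metric over the report period."""
    return {
        "min": min(samples),
        "avg": round(mean(samples), 1),
        "max": max(samples),
        # method="inclusive" keeps the percentile within the observed min..max range
        "p95": round(quantiles(samples, n=20, method="inclusive")[-1], 1),
    }

def week_over_week(this_week, last_week):
    """Percentage change in average usage compared with the previous week."""
    previous = mean(last_week)
    return round((mean(this_week) - previous) / previous * 100, 1)

cpu_this_week = [40, 42, 45, 50, 48, 95, 47]  # hypothetical daily peak CPU %
cpu_last_week = [38, 40, 41, 44, 43, 45, 42]
print(weekly_summary(cpu_this_week))  # → {'min': 40, 'avg': 52.4, 'max': 95, 'p95': 81.5}
print(week_over_week(cpu_this_week, cpu_last_week))
```

The single spike to 95 pulls the p95 well above the average. Spotting that kind of signal is exactly why a routine report is worth reading even when no alert has fired.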

5. Perform Configuration Management

Using profile-based configuration for server configuration management can save you a lot of time and trouble.

In a corporate IT infrastructure, each system has roles assigned at an individual level. However, systems also share properties with one another, and you can use this to your advantage.

To do so, create multiple authentication profiles based on role requirements.

Then, whenever changes are needed, all you have to do is update the relevant profile. Because the systems share that profile, your monitoring picks up the changes automatically.

Enterprises around the world have adopted profile-based configuration management for server monitoring. It helps them minimize repetitive tasks and build a high-performance IT architecture.
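The key idea is that each server stores a reference to a profile rather than its own copy of the settings. The sketch below illustrates this. The profile fields (credential name, polling interval, metric list), profile names and server names are hypothetical. They do not describe any particular tool's configuration format.

```python
from dataclasses import dataclass, field

@dataclass
class Profile:
    """Settings shared by every server that plays the same role (hypothetical fields)."""
    credential: str
    poll_interval_s: int
    metrics: list = field(default_factory=list)

PROFILES = {
    "web": Profile("web-readonly", 60, ["cpu", "memory", "http_latency"]),
    "database": Profile("db-monitor", 30, ["cpu", "memory", "disk", "query_time"]),
}

# Each server records only which profile it uses, not a copy of the settings.
SERVERS = {"web-01": "web", "web-02": "web", "db-01": "database"}

def effective_config(server):
    """Resolve a server's monitoring settings through its profile at lookup time."""
    return PROFILES[SERVERS[server]]

# One change to the shared profile applies to every web server at once.
PROFILES["web"].poll_interval_s = 15
print(effective_config("web-01").poll_interval_s)  # → 15
print(effective_config("web-02").poll_interval_s)  # → 15
```

Because the lookup happens when settings are read, there is no per-server copy that can drift out of date.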

6. Ensure High Availability Through Failover

Even after you have architected your systems for robust performance, your network may still experience downtime.

When this happens, your monitoring tool is likely to fail too and become unavailable for analysis. After all, the monitoring tool is also part of your network.

This makes high availability one of the most important components of an effective server performance monitoring strategy, whether an enterprise runs a few servers or many.

When you achieve high availability through failover, your monitoring system no longer has a single point of failure.

Therefore, if the network hosting your monitoring tool experiences downtime, the monitoring system remains available to provide data.
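A common way to implement failover is with heartbeats. Each monitoring node reports that it is alive at regular intervals. The highest-priority node with a recent heartbeat acts as the active one.

The sketch below shows only that selection logic. The node names ("primary", "secondary") and the 15-second timeout are hypothetical. It also assumes the nodes run in separate failure domains, for example different network segments or sites. Without that separation, a single outage could take down every node.

```python
import time

HEARTBEAT_TIMEOUT_S = 15  # hypothetical: silence longer than this marks a node as down

def active_node(last_heartbeat, priority, now=None, timeout=HEARTBEAT_TIMEOUT_S):
    """Return the highest-priority monitoring node whose heartbeat is still fresh.

    last_heartbeat maps node name -> Unix timestamp of its latest heartbeat;
    priority lists node names from preferred (primary) to least preferred.
    """
    now = time.time() if now is None else now
    for node in priority:
        seen = last_heartbeat.get(node)
        if seen is not None and now - seen <= timeout:
            return node
    return None  # no healthy monitoring node at all: alert through an out-of-band channel

# The primary has been silent for 30 s, so the secondary takes over.
print(active_node({"primary": 80.0, "secondary": 108.0}, ["primary", "secondary"], now=110.0))  # → secondary
```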

7. Maintain Historical Context

It may seem counterintuitive to keep historical context for server performance monitoring.

You might wonder why you would keep a record of issues that occurred in the past and were resolved long ago.

Well, if you do not learn from the past, you are likely to repeat it.

Therefore, maintaining historical context should be a part of your server performance monitoring strategy.

Knowing which problems occurred at a particular point in time, and under what conditions, can give you valuable insights. Analyzing these findings can help you mitigate future issues and support server capacity planning.

These best practices cover essential components and serve as a guideline for implementing a good server monitoring strategy.

What are Motadata’s key solution highlights that help in measuring, monitoring, managing and controlling server resources in your organization?

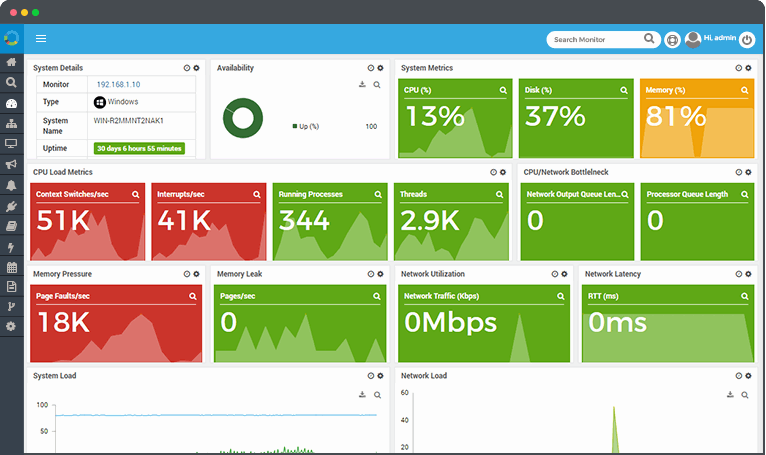

Motadata’s server monitoring solution helps you manage and monitor your IT infrastructure.

It serves as an ideal server monitoring tool, providing real-time analysis of server performance in any environment, be it physical, virtual or cloud.

The tool helps server administrators detect and resolve server performance or availability issues quickly, before they become a greater threat to the organization.

Monitor the following servers with Motadata:

- Database Server

- Application Server

- Virtual Server

- Web Server

- Mail Server

- File Server

- Hardware

Motadata’s server monitoring tool is the preferred server performance monitoring software for thousands of IT professionals worldwide.

Most other tools only report statistics about server performance. By contrast, Motadata’s server performance monitoring tool gives users deep visibility into server performance, along with robust monitoring, intelligent alerting and analytical capabilities.

Motadata’s intuitive dashboard, seamless integrations, ease of use, and advanced alerts make Motadata one of the best server monitoring tools available in the market today.

If you want to identify changes in server behavior and generate reports instantly, sign up for the free trial today!