Linux Server Monitoring: Common Issues, Key Metrics, and How to Fix Them

Amartya Gupta

What Is Linux Server Monitoring?

Linux server monitoring is the continuous process of collecting, analyzing, and alerting on performance metrics from Linux-based servers — including CPU utilization, memory usage, disk I/O, network throughput, system load, and application logs. It gives system administrators and IT operations teams the visibility they need to prevent outages, troubleshoot performance issues, and plan capacity before resources run out.

Whether you're running five servers or five thousand, Linux monitoring is the difference between catching a problem at 70% disk usage and discovering it at 100% when the server crashes.

It's 2 AM and your monitoring dashboard is green. Everything looks fine. Except an application team just reported that their production database is crawling. You SSH into the Linux server, run top, and see CPU at 12%. Memory looks fine. Disk usage is at 55%. Nothing obvious. But when you check iostat, you find disk I/O wait at 40%: a storage bottleneck that your monitoring tool never tracked because nobody configured it to watch I/O wait. This is the gap between having a monitoring tool and having effective Linux server monitoring.

Key Takeaways

Linux server monitoring must go beyond basic CPU and memory — disk I/O, network throughput, system load, and syslog events are equally important for catching real issues.

CLI tools (top, iostat, vmstat, netstat) are useful for live troubleshooting, but they don't replace a dedicated monitoring platform that tracks history, sets alerts, and correlates events.

The most common Linux monitoring issues aren't tool failures — they're configuration gaps: missing metrics, wrong thresholds, and no correlation between events.

Proactive monitoring with threshold-based alerts can prevent 60-70% of unplanned Linux server outages.

Syslog monitoring provides an early warning system for security events, application errors, and system-level anomalies that metric-based monitoring misses.

Container and cloud-hosted Linux instances require the same depth of monitoring as bare-metal servers — don't assume your cloud provider handles it.

Why Linux Server Monitoring Matters More Than Ever

Linux runs the backbone of modern IT. It powers the majority of web servers, database servers, container hosts, cloud instances, and application infrastructure worldwide. If you're in IT operations, you're monitoring Linux whether you realize it or not.

But the complexity of Linux environments has grown significantly:

Hybrid deployments. Linux servers now span bare-metal data centers, VMware clusters, AWS EC2 instances, Azure VMs, and Kubernetes nodes — often simultaneously.

Microservices architecture. A single application might run across dozens of Linux containers, each with its own resource profile.

Security exposure. Linux servers handling production workloads are high-value targets. Unauthorized access attempts, privilege escalation, and configuration tampering must be detected quickly.

Scale. Even mid-size organizations now run hundreds of Linux servers. Manual monitoring doesn't work at that scale.

A system admin who's checking servers manually with SSH and command-line tools is already behind. You need automated, continuous monitoring that tracks every relevant metric, alerts on anomalies, and provides the historical context needed for root cause analysis.

Critical Linux Server Monitoring Metrics: What to Track and Why

Not all metrics are created equal. Here are the ones that matter most, with thresholds and troubleshooting context.

CPU Utilization and CPU Steal Time

What it measures: The percentage of CPU time spent on user processes, system processes, I/O wait, and idle.

Why it matters: Sustained high CPU usage causes application slowdowns, request timeouts, and process queuing. But raw CPU percentage isn't enough — you need to see the breakdown.

Key sub-metrics:

User % — CPU spent on application workloads. High user % with good performance is fine; high user % with degraded performance means the application needs optimization or more CPU.

System % — CPU spent on kernel operations. Unusually high system % often indicates a driver issue, excessive context switching, or a misconfigured filesystem.

I/O Wait % — CPU time spent waiting for disk operations. This is the most commonly missed metric. High I/O wait with low overall CPU means your bottleneck is storage, not compute.

Steal % — In virtualized environments, steal time shows how much CPU the hypervisor took from your VM. Consistently high steal % means your VM is being starved by noisy neighbors.

Alert thresholds:

Warning: CPU > 80% sustained for 10+ minutes

Critical: CPU > 95% sustained for 5+ minutes

I/O Wait: > 20% sustained — investigate storage immediately
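The breakdown above can be computed from the kernel's own counters. The following is a minimal sketch, assuming the standard field order of the aggregate `cpu` line in `/proc/stat`; the function names (`parse_cpu_line`, `cpu_breakdown`, `sample_live`) are illustrative and not part of any monitoring product.

```python
import time
from typing import Dict

# Order of the first eight counters on the aggregate "cpu" line in /proc/stat.
FIELDS = ("user", "nice", "system", "idle", "iowait", "irq", "softirq", "steal")


def parse_cpu_line(stat_text: str) -> Dict[str, int]:
    """Return the cumulative tick counters from the aggregate 'cpu' line."""
    for line in stat_text.splitlines():
        parts = line.split()
        if parts and parts[0] == "cpu":
            values = [int(v) for v in parts[1:1 + len(FIELDS)]]
            return dict(zip(FIELDS, values))
    raise ValueError("no aggregate 'cpu' line found")


def cpu_breakdown(before: str, after: str) -> Dict[str, float]:
    """Percentage of CPU time per state between two /proc/stat snapshots."""
    a, b = parse_cpu_line(before), parse_cpu_line(after)
    deltas = {k: b[k] - a[k] for k in FIELDS}
    total = sum(deltas.values())
    if total <= 0:
        raise ValueError("snapshots are identical or out of order")
    return {k: 100.0 * d / total for k, d in deltas.items()}


def sample_live(interval: float = 5.0) -> Dict[str, float]:
    """Take two snapshots on a live Linux host, 'interval' seconds apart."""
    with open("/proc/stat") as f:
        before = f.read()
    time.sleep(interval)
    with open("/proc/stat") as f:
        after = f.read()
    return cpu_breakdown(before, after)
```

The counters are cumulative since boot, so a single read says little; the difference between two snapshots is what reveals a situation like low user % alongside high iowait %.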

Memory Utilization and Swap Usage

What it measures: Physical RAM usage, available memory, buffer/cache usage, and swap activity.

Why it matters: Linux manages memory aggressively: it uses otherwise idle RAM for disk caching, so high memory usage isn't always a problem. Sustained swap activity, on the other hand, almost always is.

Key sub-metrics:

Used memory — Total RAM consumed by processes. Read this alongside "available" memory for the real picture.

Available memory — The amount of memory that can be allocated to new processes without swapping. This is the metric that matters.

Swap used: When Linux runs low on physical RAM, it moves pages to swap (disk). This causes massive performance degradation because disk access is orders of magnitude slower than RAM access.

Swap activity (si/so) — Pages swapped in and out per second. Active swapping is a red flag even if swap usage is low.

Alert thresholds:

Warning: Available memory < 15% of total RAM

Critical: Active swap usage > 0 with increasing trend

Investigate: Memory usage growing linearly (potential memory leak)
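As a rough illustration of the "available memory" check, here is a sketch that parses text in the format of `/proc/meminfo`. The 15% default mirrors the warning threshold above; the function names and return shape are assumptions made for this example.

```python
from typing import Dict


def parse_meminfo(text: str) -> Dict[str, int]:
    """Map each /proc/meminfo key to its numeric value (kB for most keys)."""
    info = {}
    for line in text.splitlines():
        key, sep, rest = line.partition(":")
        fields = rest.split()
        if sep and fields:
            info[key.strip()] = int(fields[0])
    return info


def memory_status(text: str, warn_available_pct: float = 15.0) -> Dict[str, object]:
    """Summarize available memory and swap use from meminfo-formatted text."""
    info = parse_meminfo(text)
    available_pct = 100.0 * info["MemAvailable"] / info["MemTotal"]
    swap_used_kb = info["SwapTotal"] - info["SwapFree"]
    return {
        "available_pct": available_pct,
        "swap_used_kb": swap_used_kb,
        # Warning when available memory drops below the chosen share of RAM.
        "warning": available_pct < warn_available_pct,
    }
```

On a live host the input would come from `open("/proc/meminfo").read()`. Swap activity (si/so) is a rate rather than a level, so it needs two samples over time, which this sketch does not cover.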

Disk Capacity and I/O Performance

What it measures: Disk space usage, read/write throughput, I/O operations per second (IOPS), and I/O latency.

Why it matters: Disk issues cause the most catastrophic Linux failures. A full filesystem can crash databases, corrupt logs, and halt services entirely. And disk I/O bottlenecks cause performance degradation that's hard to diagnose without the right metrics.

Key sub-metrics:

Disk usage % — Simple but critical. Monitor all mounted filesystems, not just /.

Inode usage — You can run out of inodes (file count limit) even with disk space available. This is a common cause of mysterious "disk full" errors.

Read/write throughput — Sustained high throughput may indicate runaway logging, unoptimized queries, or backup jobs competing with production I/O.

I/O latency — The time it takes to complete a read or write operation. Latency > 10ms on SSD or > 20ms on HDD warrants investigation.

Alert thresholds:

Warning: Disk usage > 80%

Critical: Disk usage > 90%

Warning: Inode usage > 80%

Investigate: I/O latency > 10ms (SSD) or > 20ms (HDD) sustained
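Space and inode usage can both be read with a single system call. The sketch below uses Python's `os.statvfs` and applies the 80%/90% thresholds listed above; `filesystem_usage` and `classify` are hypothetical helper names, and the space percentage follows the convention of dividing used blocks by used plus available blocks.

```python
import os
from typing import Dict


def percent(used: int, total: int) -> float:
    """Percentage helper that tolerates filesystems reporting a zero total."""
    return 100.0 * used / total if total > 0 else 0.0


def filesystem_usage(path: str = "/") -> Dict[str, float]:
    """Return space and inode usage percentages for the filesystem at 'path'."""
    st = os.statvfs(path)
    used_blocks = st.f_blocks - st.f_bfree
    used_inodes = st.f_files - st.f_ffree
    return {
        # Space visible to unprivileged users: used / (used + available).
        "space_pct": percent(used_blocks, used_blocks + st.f_bavail),
        # Some filesystems report no inode limit; percent() then returns 0.0.
        "inode_pct": percent(used_inodes, st.f_files),
    }


def classify(pct: float, warn: float = 80.0, crit: float = 90.0) -> str:
    """Apply warning and critical thresholds to a usage percentage."""
    if pct > crit:
        return "critical"
    if pct > warn:
        return "warning"
    return "ok"
```

Running `filesystem_usage` for every mount point, not just `/`, is what catches a full `/var` or a log partition that has run out of inodes while still showing free space.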

Network Throughput and Error Rates

What it measures: Bytes in/out per interface, packet rates, error rates, dropped packets, and connection states.

Why it matters: For DHCP servers, DNS servers, proxies, firewalls, and file servers, network performance is the primary indicator of service health.

Key sub-metrics:

Bytes in/out — Track bandwidth utilization per interface to identify saturation.

Packet errors — Non-zero error rates indicate cable issues, driver problems, or MTU mismatches.

Dropped packets — Drops indicate buffer overflows or network congestion.

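TCP connection states: A high count of TIME_WAIT connections usually points to heavy connection churn (many short-lived connections), while a steadily growing count of CLOSE_WAIT connections suggests the application is leaking sockets it never closes.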

DNS resolution time — For DNS servers, response time is the metric that matters to end users.

Alert thresholds:

Warning: Interface utilization > 70% sustained

Critical: Packet error rate > 0.1%

Investigate: Unusual spike in TCP connections or DNS query volume
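The packet error rate threshold can be checked against the per-interface counters in `/proc/net/dev`. This is a minimal sketch, assuming the usual layout of 8 receive and 8 transmit columns per interface; the function names are illustrative, and the rate is computed between two snapshots because the counters are cumulative.

```python
from typing import Dict


def parse_net_dev(text: str) -> Dict[str, Dict[str, int]]:
    """Extract packet, error, and drop totals per interface."""
    stats = {}
    for line in text.splitlines():
        name, sep, rest = line.partition(":")
        if not sep:
            continue  # header lines contain no colon
        fields = rest.split()
        if len(fields) < 16:
            continue
        n = [int(f) for f in fields[:16]]
        stats[name.strip()] = {
            "packets": n[1] + n[9],   # rx packets + tx packets
            "errors": n[2] + n[10],   # rx errs + tx errs
            "drops": n[3] + n[11],    # rx drop + tx drop
        }
    return stats


def error_rate_pct(before: str, after: str, iface: str) -> float:
    """Errors as a percentage of packets seen between two snapshots."""
    b = parse_net_dev(before)[iface]
    a = parse_net_dev(after)[iface]
    packets = a["packets"] - b["packets"]
    errors = a["errors"] - b["errors"]
    return 100.0 * errors / packets if packets > 0 else 0.0
```

A result above 0.1 for an interface would correspond to the critical threshold listed above.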

System Load Average

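What it measures: The average number of processes that are running or waiting to run, measured over 1, 5, and 15 minutes. On Linux the figure also counts processes in uninterruptible sleep, which usually means they are blocked on disk I/O.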

Why it matters: Load average gives you a quick sense of whether the system is overloaded, but it needs context. A load of 8.0 on an 8-core server means every core has exactly one process — that's healthy. A load of 8.0 on a 2-core server means 6 processes are waiting for CPU — that's a problem.

Rule of thumb: Over sustained periods, load average should stay at or below the number of CPU cores.

Alert thresholds:

Warning: Load > CPU cores * 1.5 for 10+ minutes

Critical: Load > CPU cores * 3.0 for 5+ minutes
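The per-core comparison is simple enough to express directly. The sketch below normalizes load by core count and applies the 1.5x and 3.0x factors listed above; using the 5-minute average as a stand-in for "sustained" is an assumption of this example, and `classify_load` is a hypothetical name.

```python
import os


def classify_load(load: float, cores: int,
                  warn_factor: float = 1.5, crit_factor: float = 3.0) -> str:
    """Compare a load average against the number of CPU cores."""
    per_core = load / cores
    if per_core > crit_factor:
        return "critical"
    if per_core > warn_factor:
        return "warning"
    return "ok"


def current_status() -> str:
    """Classify the host's 5-minute load average (Unix-like systems only)."""
    _load1, load5, _load15 = os.getloadavg()
    cores = os.cpu_count() or 1
    return classify_load(load5, cores)


# The two cases from the text: the same load on different core counts.
print(classify_load(8.0, 8))  # ok
print(classify_load(8.0, 2))  # critical
```

A production check would also track how long the condition has held, which a single reading cannot show.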

Common Linux Monitoring Issues and How to Fix Them

Issue 1: Monitoring Tool Only Tracks Basic Metrics

Symptom: Dashboard shows everything green, but users report slow performance.

Root cause: The monitoring tool is only checking CPU %, memory %, and disk %. It's missing I/O wait, swap activity, network errors, inode usage, and application-level metrics.

Fix: Configure your monitoring platform to collect the full set of metrics listed above. If your tool doesn't support them, it's time for a better tool.

Issue 2: Alert Thresholds Are Wrong (or Missing)

Symptom: You get no alerts for real issues, or you get so many alerts that the team ignores them.

Root cause: Default thresholds don't match your environment. A database server running at 85% memory might be normal; a web server at 85% memory is a problem.

Fix: Set thresholds per server role. Establish baselines during normal operations, then set warning thresholds at 1.5x baseline and critical at 2x baseline, capping percentage metrics below 100% so the alert can actually fire.
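The baseline rule can be sketched in a few lines. This example takes the median of readings collected during normal operation as the baseline, which is one reasonable choice among several; the optional `ceiling` parameter is an assumption added here so that percentage metrics never receive a threshold above what they can reach.

```python
from statistics import median
from typing import Optional, Sequence, Tuple


def role_thresholds(samples: Sequence[float],
                    warn_factor: float = 1.5,
                    crit_factor: float = 2.0,
                    ceiling: Optional[float] = None) -> Tuple[float, float, float]:
    """Derive (baseline, warning, critical) from normal-operation samples."""
    baseline = median(samples)
    warning = baseline * warn_factor
    critical = baseline * crit_factor
    if ceiling is not None:
        # Keep thresholds reachable for bounded metrics such as percentages.
        warning = min(warning, ceiling)
        critical = min(critical, ceiling)
    return baseline, warning, critical


# Hypothetical CPU % readings for a web server role during a normal week.
web_cpu = [38, 40, 42, 40, 41]
print(role_thresholds(web_cpu, ceiling=95.0))  # (40, 60.0, 80.0)
```

Computing the thresholds separately for each role is what lets a database server at 85% memory stay quiet while a web server at the same level raises an alert.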

Issue 3: No Correlation Between Events

Symptom: When a multi-server application degrades, the team investigates each server independently and can't find the root cause.

Root cause: The monitoring tool treats each server as an island. There's no correlation between a database server's high I/O wait and the web server's increased response time.

Fix: Use a monitoring platform with a correlation engine that can link events across servers, networks, and applications. This is where AI-driven root cause analysis adds significant value.

Issue 4: CLI-Only Monitoring Doesn't Scale

Symptom: The sysadmin knows every server intimately because they SSH into each one and check manually. But they can only handle 20-30 servers. The team just added 50 more.

Root cause: Relying on CLI tools (top, htop, iostat, vmstat, netstat) for day-to-day monitoring instead of an automated platform.

Fix: CLI tools are great for live troubleshooting during an incident. For continuous monitoring, you need a platform that collects metrics automatically, retains history, and alerts without human intervention.

Issue 5: No Syslog Monitoring

Symptom: A security breach or application error goes undetected for days because nobody was watching the logs.

Root cause: Metric-based monitoring catches resource problems. Log-based monitoring catches events — failed login attempts, application exceptions, service restarts, kernel panics.

Fix: Implement syslog monitoring that collects, parses, and alerts on critical log events in real time. Correlate log events with metric anomalies for faster root cause identification.
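To make the idea of alerting on log events concrete, here is a small sketch that counts failed SSH password attempts per source address. It assumes the common OpenSSH message format ("Failed password for ... from ... port ..."); the hostname, addresses, and the limit of 5 are made up for illustration.

```python
import re
from collections import Counter
from typing import Iterable, List

# Matches OpenSSH failure lines for both valid and invalid user names.
FAILED_LOGIN = re.compile(
    r"sshd\[\d+\]: Failed password for (?:invalid user )?(\S+) from (\S+) port \d+"
)


def failed_logins_by_source(lines: Iterable[str]) -> Counter:
    """Count failed password attempts per source address."""
    counts: Counter = Counter()
    for line in lines:
        match = FAILED_LOGIN.search(line)
        if match:
            counts[match.group(2)] += 1
    return counts


def sources_over_limit(lines: Iterable[str], limit: int = 5) -> List[str]:
    """Return source addresses with at least 'limit' failed attempts."""
    counts = failed_logins_by_source(lines)
    return sorted(ip for ip, n in counts.items() if n >= limit)


# Hypothetical log excerpt; addresses come from documentation ranges.
log = [
    "Jan 10 02:14:07 web01 sshd[2211]: Failed password for invalid user admin from 203.0.113.7 port 51234 ssh2",
    "Jan 10 02:14:09 web01 sshd[2211]: Failed password for root from 203.0.113.7 port 51236 ssh2",
    "Jan 10 02:15:30 web01 sshd[2290]: Accepted publickey for deploy from 198.51.100.4 port 40022 ssh2",
]
print(failed_logins_by_source(log))
```

A real pipeline would stream lines as they arrive and apply the count over a time window rather than over a whole file.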

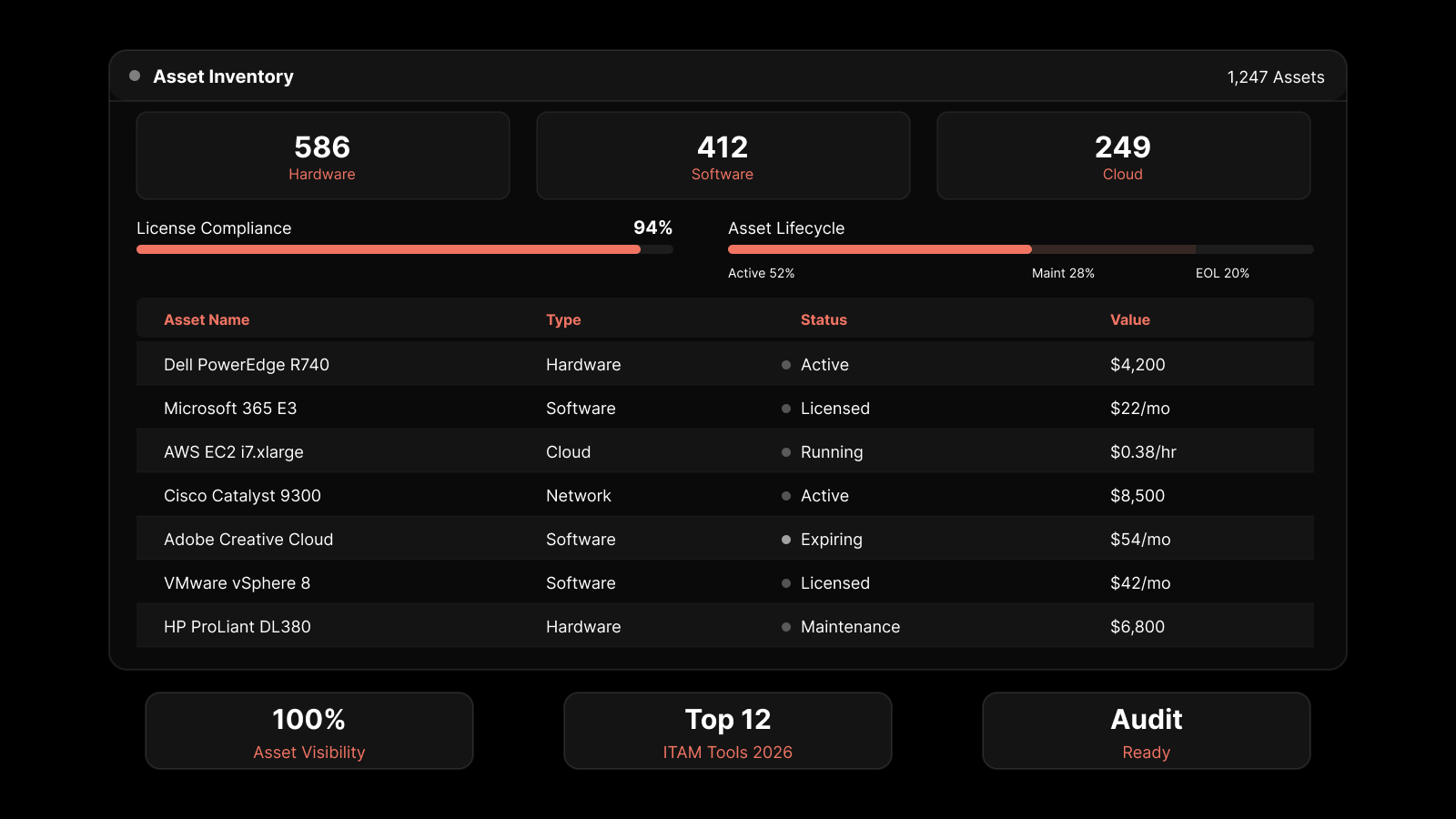

CLI Tools vs. Dedicated Monitoring Platforms

| Capability | CLI Tools (top, iostat, vmstat) | Dedicated Monitoring Platform |
|---|---|---|
| Real-time view | Yes (live session only) | Yes (dashboard, always-on) |
| Historical data | No | Yes (days/weeks/months of trend data) |
| Automated alerting | No (requires scripted cron jobs) | Yes (threshold-based, multi-channel) |
| Multi-server view | No (one server at a time) | Yes (single pane of glass) |
| Correlation | No | Yes (cross-server event correlation) |
| Root cause analysis | Manual | AI-assisted |
| Log monitoring | Partial (tail/grep) | Full (syslog collection, parsing, alerting) |
| Capacity planning | No | Yes (trend projection, forecasting) |
| Scales to 100+ servers | No | Yes |
| Best use case | Live troubleshooting during incidents | Continuous monitoring and proactive alerting |

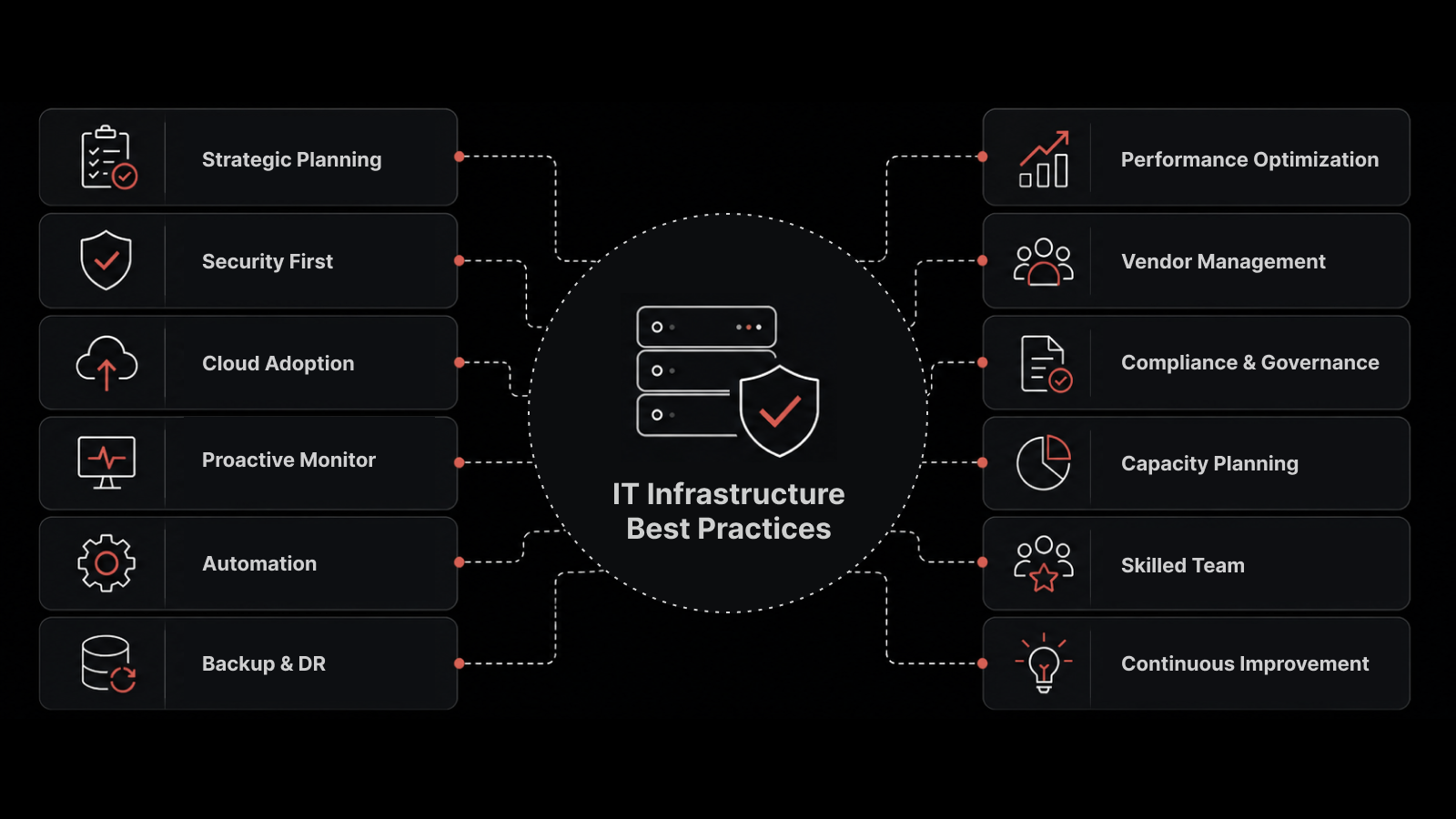

Linux Monitoring Best Practices for 2026

Monitor all five core metric categories — CPU, memory, disk, network, and load. Missing any one creates blind spots.

Set role-specific thresholds — A database server has different normal behavior than a web server. Use baselines, not defaults.

Implement syslog monitoring alongside metric monitoring — Metrics tell you what's happening to the server. Logs tell you what's happening inside the server.

Monitor containers the same way you monitor bare metal — Docker containers and Kubernetes pods need the same depth of monitoring as physical servers.

Track inode usage, not just disk space — Running out of inodes is one of the most common "surprise" failures in Linux environments.

Use AI-driven root cause analysis — When a problem spans multiple servers or layers, manual investigation takes too long. Let the platform correlate events for you.

Retain at least 90 days of historical data — You can't spot trends or establish baselines without history.

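Monitor cloud Linux instances with the same depth as on-prem: Don't assume CloudWatch or Azure Monitor covers everything. Out of the box they typically report only basic host-level metrics; guest-level detail such as memory and filesystem usage generally requires installing an agent.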
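Retained history is what makes trend projection possible. As a simple illustration, the sketch below fits a straight line to daily disk usage readings and estimates how many days remain before a limit is reached. A linear fit is an assumption made for this example; real growth is often uneven, and `days_until_full` is a hypothetical helper.

```python
from typing import Optional, Sequence


def days_until_full(daily_pct: Sequence[float], limit: float = 100.0) -> Optional[float]:
    """Estimate days from the last reading until usage reaches 'limit'.

    Uses an ordinary least-squares line through one reading per day.
    Returns None when usage is flat or shrinking.
    """
    n = len(daily_pct)
    if n < 2:
        raise ValueError("need at least two daily readings")
    mean_x = (n - 1) / 2
    mean_y = sum(daily_pct) / n
    num = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(daily_pct))
    den = sum((x - mean_x) ** 2 for x in range(n))
    slope = num / den
    if slope <= 0:
        return None
    intercept = mean_y - slope * mean_x
    # Day index where the fitted line hits the limit, relative to the last day.
    return (limit - intercept) / slope - (n - 1)


# Hypothetical readings: usage grows by one percentage point per day.
print(days_until_full([70, 71, 72, 73, 74]))  # 26.0
```

With only a few days of data the estimate is fragile, which is one practical argument for keeping months of history.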

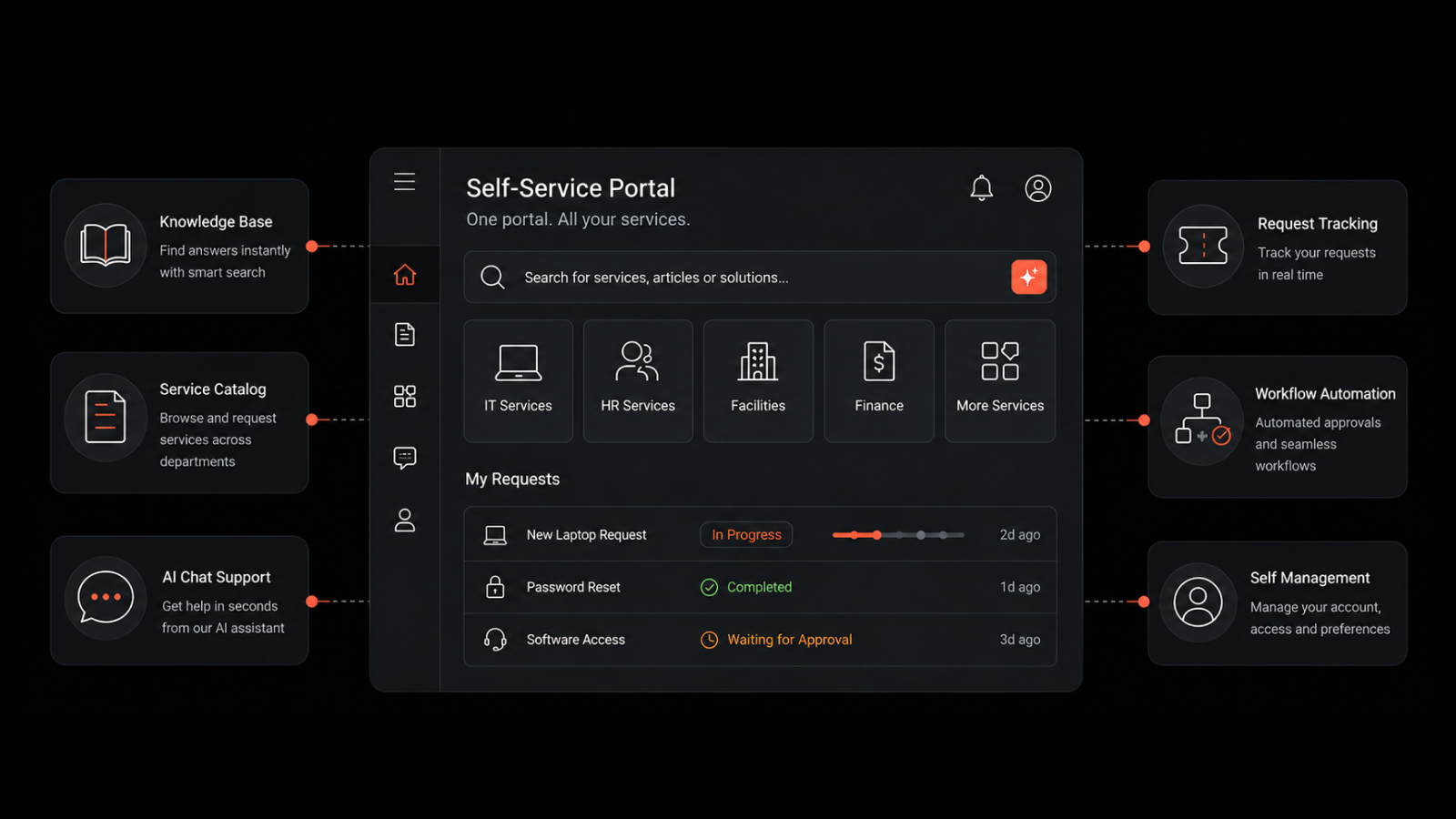

Motadata provides an integrated server monitoring tool that delivers real-time visibility into every critical Linux server performance metric — CPU, memory, disk, network, and load — from a single web console.

With automated discovery, threshold-based alerting, syslog monitoring, root cause analysis, and support for both consolidated and distributed environments, Motadata gives sysadmins and IT operations teams the tools to stay ahead of server issues instead of chasing them.

The platform monitors all types of IT infrastructure servers — physical, virtual, cloud-hosted, and containerized — with the same depth and the same dashboard.


FAQs

What metrics should you monitor on Linux servers?

Monitor five core categories: CPU (utilization, I/O wait, steal time), memory (available memory, swap usage and activity), disk (capacity, inode usage, I/O latency, throughput), network (bandwidth, errors, drops, connection states), and system load (1/5/15-minute averages relative to CPU core count).

How do you troubleshoot Linux server performance issues?

Start with load average to determine if the system is overloaded. Then check CPU breakdown (user/system/iowait) to identify the bottleneck type. If I/O wait is high, investigate disk with iostat. If memory is the issue, check for swap activity with vmstat. Use syslog analysis to identify application-level errors that might explain the symptoms.

What's the difference between Linux monitoring and Linux observability?

Monitoring collects predefined metrics and alerts when thresholds are crossed. Observability goes further — it collects metrics, logs, and traces, then correlates them to explain why problems occur, not just that they occurred. For complex Linux environments, observability provides faster root cause identification.

How often should Linux server metrics be collected?

For production servers, collect metrics at least every 60 seconds. Critical systems may need 30-second or 15-second intervals. Log collection should be real-time (streamed, not batched). Longer intervals save storage but create gaps that hide transient performance issues.
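The effect of the collection interval is easy to see with a toy series. In this sketch the readings are invented and each one is treated as an instantaneous sample; polling every 60 seconds instead of every 15 skips a 30-second spike entirely.

```python
from typing import List, Sequence


def downsample(series: Sequence[float], step: int) -> List[float]:
    """Keep every step-th reading, as a collector polling less often would."""
    return list(series[::step])


# Hypothetical CPU % readings taken every 15 seconds over three minutes.
readings_15s = [20, 22, 95, 97, 21, 20, 22, 21, 20, 23, 21, 22]
readings_60s = downsample(readings_15s, 4)  # one reading per 60 seconds

print(max(readings_15s))  # 97
print(max(readings_60s))  # 21
```

Collectors that report an average over the interval rather than a point sample would flatten the spike instead of missing it, but the transient would still be hard to recognize.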

Should you monitor Linux containers differently than VMs?

Containers need the same core metrics (CPU, memory, network, disk I/O) but also container-specific metrics: container restart counts, image pull times, resource limits vs. actual usage, and orchestrator-level metrics like pod scheduling latency and node resource pressure.