IT Operations Transformation at Scale: How to Monitor 2,000+ Devices Across 400 Branches

What Is IT Operations Transformation at Scale?

IT operations transformation at scale is the process of modernizing how large, geographically distributed organizations monitor, manage, and optimize their entire technology infrastructure — from network devices and servers to applications and cloud workloads — through a single, unified platform rather than disconnected point tools.

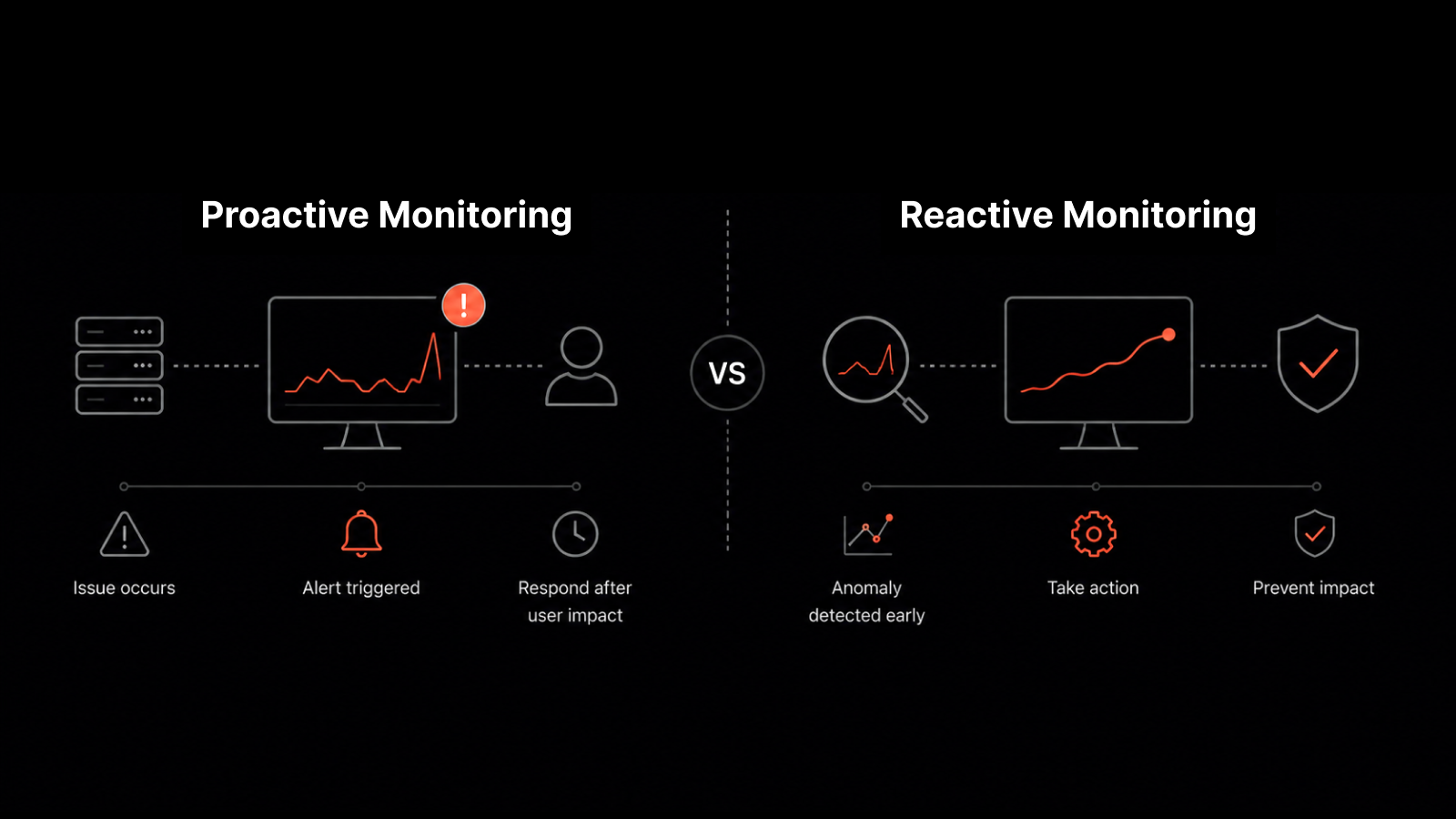

For enterprises running hundreds of branches and thousands of devices, this isn't a nice-to-have. It's the difference between reactive firefighting and proactive operations.

Your IT team is drowning in alerts from six different tools, none of which agree on what's actually broken, and the CFO just asked why the network went down at three branches last Tuesday. Sound familiar? That's exactly the situation India's largest insurance firm faced before it rethought IT operations from the ground up. With 400+ branches, 2,000+ devices, and zero unified visibility, the company needed more than a new tool: it needed a fundamentally different approach to monitoring at scale.

The Scale Problem: Why Large Enterprises Struggle with Network Monitoring

When you operate at scale — 400+ branches spread across an entire country — IT monitoring stops being a technical challenge and becomes an organizational one.

India's largest insurance firm experienced this firsthand. The company's IT network was dense and geographically distributed. Every branch relied on always-on connectivity to process policies, serve customers, and run internal operations. But the IT team had no unified way to see what was happening across this sprawling infrastructure.

Here's what they were dealing with:

No single pane of glass. Performance data lived in silos. Different teams tracked different metrics using different tools, and nobody had a complete picture of network health.

No real-time data. The information available was historical — hours or even days old by the time it reached decision-makers. That made proactive response impossible.

Multi-vendor chaos. Branches had procured hardware from different vendors over the years: Cisco at one location, Radware at another, Check Point somewhere else. There was no standardized way to compare performance across device types.

Manual asset management. Every new device, configuration change, or license renewal required manual entry into a central repository. At scale, this meant constant data drift — expired licenses going unnoticed, new devices invisible to the monitoring system.

False alerts from legacy tools. The firm had tried integrating third-party alerting tools on top of its existing monitoring solution. The result? A flood of false positives that eroded trust in the alert system entirely.

The cumulative effect was severe: the management team believed that managing IT operations at this scale would require massive investment: more headcount, more tools, more budget.

They were wrong. What they needed was a different architecture.

Why Traditional Monitoring Fails at Enterprise Scale

Many IT infrastructure monitoring solutions work well enough in small environments. But once you scale past a few hundred devices, they break down for predictable reasons:

Polling and data aggregation bottlenecks

Legacy monitoring tools use fixed polling intervals that don't adapt as the network grows. With 2,000+ devices on a five-minute cycle, the trade-off is stark: lengthen the interval and the data goes stale; shorten it and you overload the monitoring system itself.
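To put rough numbers on that trade-off, here's a back-of-the-envelope sketch based on Little's law: the number of polls in flight equals the poll rate multiplied by the average time each poll takes. The 2-second average poll time and the 8-worker poller used below are hypothetical figures for illustration, not measurements from this deployment.

```python
import math


def polls_per_second(devices: int, interval_s: float) -> float:
    """Average poll rate needed to visit every device once per interval."""
    return devices / interval_s


def required_concurrency(devices: int, interval_s: float, avg_poll_s: float) -> int:
    """Little's law: polls in flight = poll rate x average time per poll.

    Rounded up, because a poller cannot run a fraction of a worker.
    """
    return math.ceil(polls_per_second(devices, interval_s) * avg_poll_s)


def max_devices(workers: int, interval_s: float, avg_poll_s: float) -> int:
    """Largest fleet a fixed worker pool can cover without falling behind."""
    return int(workers * interval_s / avg_poll_s)


def min_interval_s(devices: int, workers: int, avg_poll_s: float) -> float:
    """Shortest cycle a fixed worker pool can sustain for a given fleet."""
    return devices * avg_poll_s / workers


if __name__ == "__main__":
    # Hypothetical figures: 2 s per poll, 8 poller workers.
    print(required_concurrency(2000, 300, 2.0))
    print(max_devices(8, 300, 2.0))
    print(min_interval_s(2000, 8, 2.0))
```

Under those assumptions, 2,000 devices on a five-minute (300-second) cycle need at least 14 polls in flight at all times. A fixed pool of 8 workers could only keep up with 1,200 devices, or would have to stretch the cycle to 500 seconds, which is exactly the stale-data-versus-overload squeeze described above.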

No correlation engine

When alerts come from multiple disconnected tools, there's no way to correlate events. A switch failure at Branch 47 might cause application timeouts at Branch 48 if Branch 48's traffic passes through that switch, but without correlation, the two show up as unrelated incidents.
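A production correlation engine can be far more elaborate, but the core idea can be sketched with a dependency map: walk upward from each alerting device and attribute the alert to the highest alerting device on that path. The device names, the topology, and the assumption that Branch 48 backhauls through the Branch 47 switch are all hypothetical.

```python
from typing import Dict, Optional, Set

# Hypothetical topology: each device maps to the upstream device it depends on
# (None at the top of the tree).
UPSTREAM: Dict[str, Optional[str]] = {
    "b48-app-server": "b48-router",
    "b48-router": "b47-switch",  # assumed: Branch 48 backhauls through Branch 47
    "b47-switch": "dc-core",
    "dc-core": None,
}


def probable_root(device: str, upstream: Dict[str, Optional[str]], alerting: Set[str]) -> str:
    """Return the highest alerting device on the path from `device` upward."""
    root = device
    seen = {device}
    node = upstream.get(device)
    while node is not None and node not in seen:  # `seen` guards against loops
        seen.add(node)
        if node in alerting:
            root = node
        node = upstream.get(node)
    return root


def correlate(alerting: Set[str], upstream: Dict[str, Optional[str]]) -> Dict[str, Set[str]]:
    """Group alerting devices under their probable root cause."""
    groups: Dict[str, Set[str]] = {}
    for device in alerting:
        groups.setdefault(probable_root(device, upstream, alerting), set()).add(device)
    return groups


if __name__ == "__main__":
    # Two raw alerts collapse into one incident rooted at the Branch 47 switch.
    print(correlate({"b47-switch", "b48-app-server"}, UPSTREAM))
```

Here the raw alerts from the Branch 47 switch and a Branch 48 application server collapse into a single incident rooted at the switch. Real engines typically also weigh timing, alert type, and devices with more than one upstream path; this sketch assumes a single parent per device.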

Configuration drift at scale

Tracking configurations by hand is feasible with 50 devices. At 2,000, it's a full-time job, and one that's always behind. Configuration drift leads to security gaps, compliance violations, and performance inconsistencies.
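Detecting drift comes down to comparing each device's running configuration with an approved baseline. A minimal sketch using Python's standard `difflib` module; how configs are collected is left out, and the function names and config lines in the example are made up for illustration.

```python
import difflib
from typing import Dict, List


def config_drift(device: str, baseline: str, running: str) -> List[str]:
    """Unified diff of a device's running config against its approved baseline."""
    return list(
        difflib.unified_diff(
            baseline.splitlines(),
            running.splitlines(),
            fromfile=f"{device} (baseline)",
            tofile=f"{device} (running)",
            lineterm="",
        )
    )


def drift_report(baselines: Dict[str, str], running: Dict[str, str]) -> Dict[str, List[str]]:
    """Return only the devices whose running config differs from baseline."""
    report: Dict[str, List[str]] = {}
    for device, baseline in baselines.items():
        current = running.get(device)
        if current is None:
            continue  # no config collected; treat as a separate reachability problem
        diff = config_drift(device, baseline, current)
        if diff:
            report[device] = diff
    return report


if __name__ == "__main__":
    # Made-up config snippets for illustration only.
    base = "hostname br-001\nntp server 10.0.0.1\nlogging host 10.0.0.5"
    live = "hostname br-001\nntp server 10.0.0.99\nlogging host 10.0.0.5"
    for line in drift_report({"br-001": base}, {"br-001": live}).get("br-001", []):
        print(line)
```

In practice you would also strip volatile lines, such as timestamps or counters, before diffing so that only meaningful changes are reported.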

Alert fatigue

When monitoring tools generate noise instead of signal, IT teams stop paying attention to alerts. That's when real incidents get missed.

Lack of predictive capability

Without real-time, normalized data from across the network, there's no foundation for predictive analytics. You can't anticipate failures if your data is stale, fragmented, or inconsistent.

How Unified Network Monitoring Solves the Scale Challenge

The insurance firm evaluated several solutions before selecting Motadata's network monitoring platform. The decision came down to a specific set of capabilities that addressed each of the challenges listed above.

Comprehensive performance analytics across device classes

The platform provided monitoring coverage across hardware, servers, Linux and Windows systems, virtual machines, databases, web servers, and applications — all from a single deployment. No additional tools needed for different device types.

Normalized multi-vendor data

Whether a branch used Cisco, Radware, or Check Point equipment, the platform normalized performance data using 100+ predefined monitoring templates with out-of-the-box support for all major vendors. This gave the IT team apples-to-apples comparisons across the entire network.
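Normalization means mapping each vendor's metric names and units onto one shared schema. The sketch below uses hypothetical field names for the three vendors mentioned; it does not reflect Motadata's templates or any vendor's real SNMP OIDs or API fields.

```python
from typing import Dict, Mapping

# Hypothetical vendor field names; a real collector would map SNMP OIDs or
# vendor API fields, which differ by model and firmware.
FIELD_MAP: Dict[str, Dict[str, str]] = {
    "cisco": {"cpu_5min": "cpu_pct", "mem_used_pct": "mem_pct"},
    "radware": {"CpuUtil": "cpu_pct", "MemoryUtil": "mem_pct"},
    "checkpoint": {"cpu.usage": "cpu_pct", "memory.used_ratio": "mem_pct"},
}

# Some sources report a 0-1 ratio rather than a percentage; scale those to 0-100.
SCALE: Dict[str, Dict[str, float]] = {"checkpoint": {"memory.used_ratio": 100.0}}


def normalize(vendor: str, raw: Mapping[str, float]) -> Dict[str, float]:
    """Translate one vendor-specific sample into the common schema."""
    mapping = FIELD_MAP[vendor]
    scale = SCALE.get(vendor, {})
    return {
        mapping[field]: float(value) * scale.get(field, 1.0)
        for field, value in raw.items()
        if field in mapping  # unmapped fields are dropped, not guessed
    }
```

For example, `normalize("checkpoint", {"cpu.usage": 41, "memory.used_ratio": 0.5})` and `normalize("cisco", {"cpu_5min": 41, "mem_used_pct": 50})` both return `{'cpu_pct': 41.0, 'mem_pct': 50.0}`, so devices from different vendors can be compared directly.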

Contextual root cause analysis

Every metric came with contextual data that allowed IT teams to perform root cause analysis quickly. Instead of investigating each alert in isolation, the team could trace correlations and identify systemic issues before they cascaded.

Auto-discovery for zero manual input

The auto-discovery feature scanned the network and automatically cataloged every device across all geographies and IT asset types. No more manual entry. No more expired licenses going unnoticed. No more phantom devices.
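The article doesn't describe how the platform discovers devices, but the benefits listed above come from reconciling each discovery scan against the asset register. A minimal sketch, assuming discovery yields a set of device IDs and the register stores a license expiry date per device (both hypothetical data shapes):

```python
from datetime import date
from typing import Dict, List, Set


def reconcile(discovered: Set[str], inventory: Dict[str, date], today: date) -> Dict[str, List[str]]:
    """Compare a discovery scan with the asset register.

    `discovered` holds device IDs seen on the network; `inventory` maps each
    registered device ID to its license expiry date.
    """
    registered = set(inventory)
    return {
        # On the network but missing from the register.
        "new": sorted(discovered - registered),
        # In the register but not seen by the scan ("phantom" devices).
        "phantom": sorted(registered - discovered),
        # Still live on the network but running on an expired license.
        "expired_license": sorted(
            device for device, expiry in inventory.items()
            if device in discovered and expiry < today
        ),
    }
```

A device that shows up in the scan but not the register lands in `new`; one that's registered but no longer answers lands in `phantom`; and anything still live on an expired license is flagged before it causes trouble.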

Before and After: Measurable Outcomes of IT Operations Transformation

| Area | Before Motadata | After Motadata |
|---|---|---|
| Visibility | Fragmented across tools | Single pane of glass for 2,000+ devices |
| Data freshness | Historical (hours or days old) | Real-time |
| MTTR | Hours to days | Reduced by 60%+, with incident management metrics tracking |
| Asset tracking | Manual, error-prone | Automated through auto-discovery |
| Alert quality | High false-positive rate | Contextual, threshold-based alerts |
| Vendor coverage | Inconsistent | 100+ out-of-the-box templates for major vendors |
| Configuration management | Manual updates per device | Centralized network configuration management |
| Network topology | No visibility into dependencies | Dynamic L2/L3 topology maps |
| Predictive capability | None | AI-driven analytics on real-time data |
| Tools required | Multiple point solutions | One unified platform |

Network Topology Mapping and Real-Time Dependency Visualization

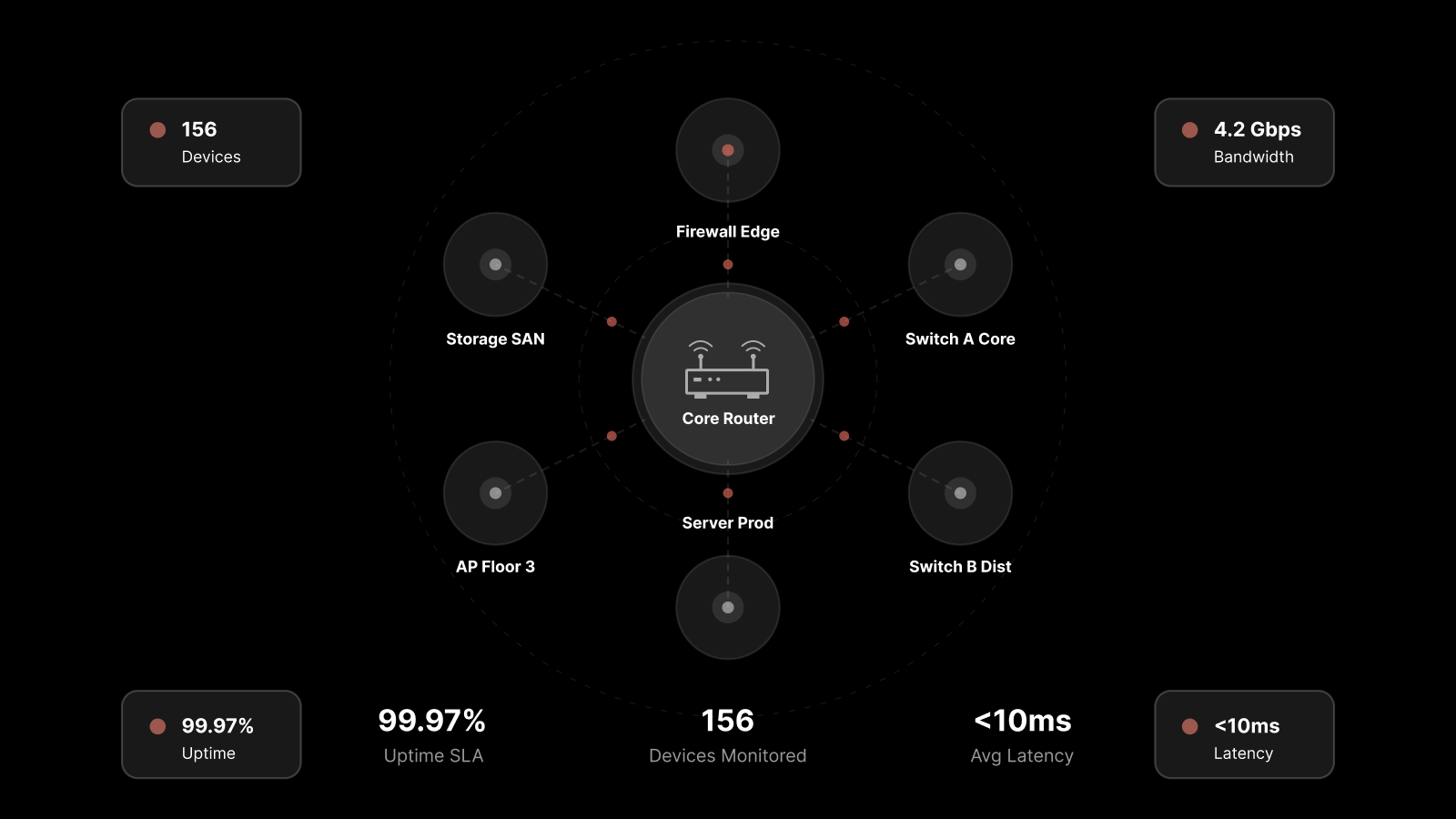

One of the most immediate wins was network topology visualization. The platform displayed the entire network as a dynamic topology map, showing relationships and dependencies across all 400+ branches.

The IT team could see real-time L2 and L3 views, which meant:

Faster fault isolation. When a branch reported connectivity issues, the team could visually trace the path from the branch to the data center and pinpoint exactly where the failure occurred.

Dependency awareness. Understanding which devices depended on which upstream components eliminated guesswork during outage response.

Capacity planning. Visual network maps made it obvious where bottlenecks existed and where capacity upgrades would have the biggest impact.

This alone cut incident investigation time significantly, because the IT team no longer had to mentally reconstruct the network topology from spreadsheets and CLI output.
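The fault-isolation workflow described above, tracing the path from a branch to the data center and finding where it breaks, can be sketched as a breadth-first search over a link map followed by a walk along the resulting path. The link map, device names, and up/down status feed are hypothetical stand-ins for what a topology engine would discover.

```python
from collections import deque
from typing import Dict, List, Optional


def find_path(links: Dict[str, List[str]], src: str, dst: str) -> Optional[List[str]]:
    """Breadth-first search for the shortest hop path from src to dst."""
    parent: Dict[str, Optional[str]] = {src: None}
    queue = deque([src])
    while queue:
        node = queue.popleft()
        if node == dst:
            path: List[str] = []
            hop: Optional[str] = node
            while hop is not None:  # walk parents back to the source
                path.append(hop)
                hop = parent[hop]
            return path[::-1]
        for neighbor in links.get(node, []):
            if neighbor not in parent:
                parent[neighbor] = node
                queue.append(neighbor)
    return None


def first_failed_hop(path: List[str], is_up: Dict[str, bool]) -> Optional[str]:
    """First hop, walking outward from the branch, that is not reporting up."""
    for hop in path:
        if not is_up.get(hop, False):  # missing status is treated as down
            return hop
    return None
```

For a hypothetical chain `br212-rtr`, `pe-west`, `dc-edge`, `dc-core`, `find_path` returns those four hops in order; if `dc-edge` reports down while the others are up, `first_failed_hop` returns `dc-edge`, the first point where the branch loses its path to the data center.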

Proactive Alerting and Notification Architecture

The platform replaced the firm's false-alert-prone legacy system with a sophisticated, threshold-based alerting engine.

How it works:

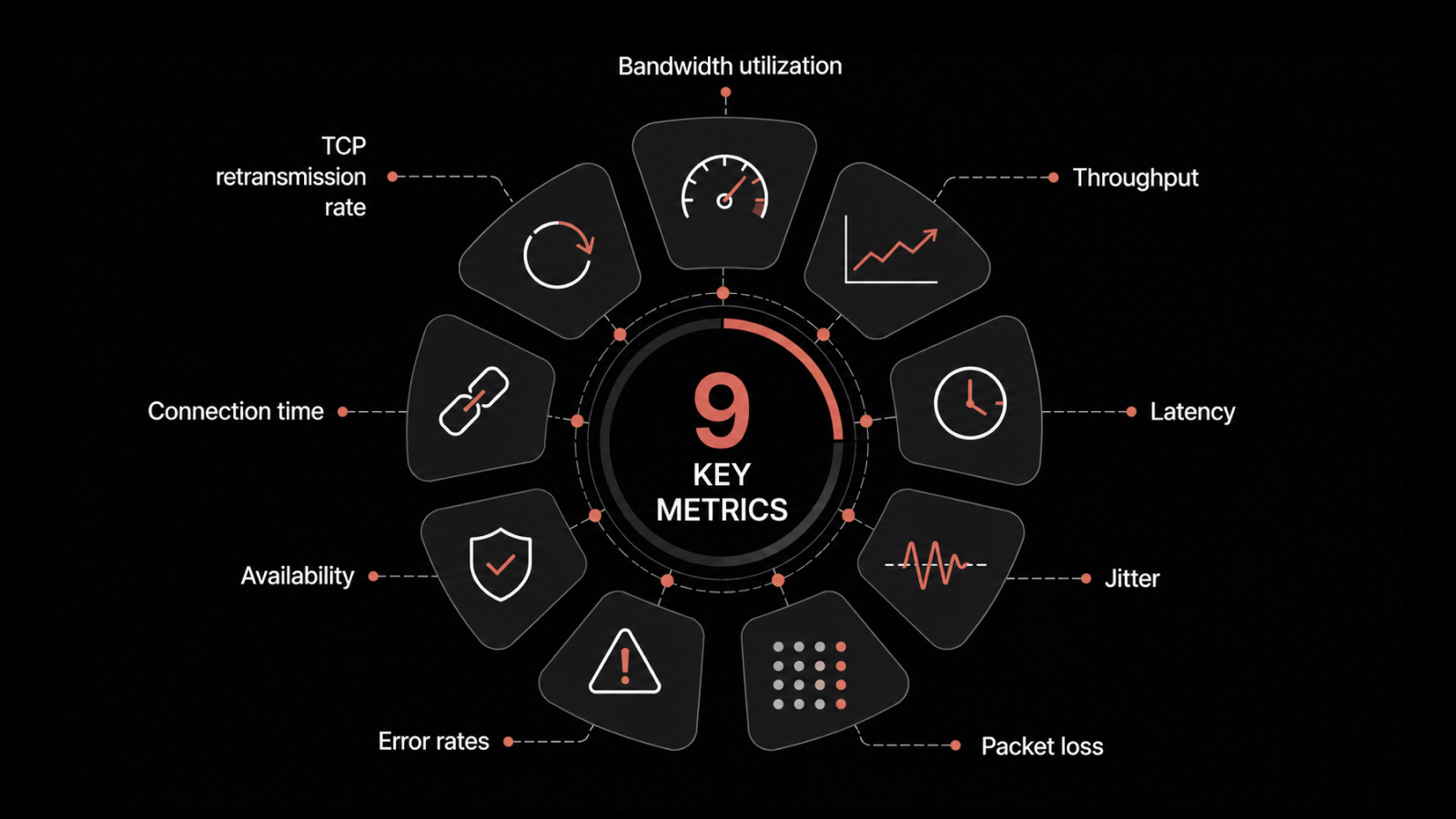

The IT team sets performance thresholds for each device class and metric (CPU utilization, memory, bandwidth, etc.).

When any metric crosses its threshold, the platform triggers alerts through multiple channels — email, SMS, Slack, and other collaboration tools.

Each alert includes contextual information: what's affected, what the probable cause is, and what related metrics look like.

The alerting system uses historical patterns to distinguish between genuine anomalies and expected spikes, drastically reducing false positives.

The result was a notification system that the team actually trusted — and that trust translated into faster response times and fewer missed incidents.
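The article says only that the alerting engine uses historical patterns; it doesn't say how. One simple, common approach (not necessarily Motadata's) is to require that a reading both crosses the static threshold and sits well outside its recent baseline, defined here as the mean plus k population standard deviations. The values of `k` and `min_history` are arbitrary assumptions.

```python
import statistics
from typing import Sequence


def should_alert(
    value: float,
    static_threshold: float,
    history: Sequence[float],
    k: float = 3.0,
    min_history: int = 10,
) -> bool:
    """Alert only if `value` breaches the static threshold AND is unusual
    relative to recent history (mean + k population standard deviations)."""
    if value < static_threshold:
        return False
    if len(history) < min_history:
        return True  # too little history for a baseline: fall back to the static rule
    baseline = statistics.mean(history)
    spread = statistics.pstdev(history)
    return value > baseline + k * spread
```

With a CPU history hovering around 90% (say, during a nightly backup window), a 92% reading crosses an 85% static threshold but stays inside the normal band, so no alert fires; a 99% reading still does. A production system would usually compare against the same time-of-day window rather than a flat history.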

Reducing MTTR with Contextual Root Cause Analysis

Mean time to repair (MTTR) dropped dramatically after deployment. Here's why:

Before: When an incident occurred, the IT team had to manually query multiple tools, pull data from different systems, and piece together a theory about the root cause. This process could take hours — sometimes days — for complex, multi-branch issues.

After: The platform's root cause analysis engine correlated events across the entire network automatically. When a storage array at the data center started showing elevated latency, the platform connected that to the application slowdowns reported at twelve branches — before the IT team even started investigating.
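The storage-array example is a form of impact (or blast-radius) analysis: starting from the component showing trouble, find everything that depends on it, then keep only the alerts from those dependents that fired shortly afterward. A sketch under stated assumptions: the dependency map, component names, and 15-minute window are hypothetical.

```python
from collections import defaultdict, deque
from datetime import datetime, timedelta
from typing import Dict, List, Set, Tuple


def dependents(depends_on: Dict[str, List[str]], component: str) -> Set[str]:
    """Everything that depends on `component`, directly or transitively."""
    consumers: Dict[str, List[str]] = defaultdict(list)
    for consumer, providers in depends_on.items():
        for provider in providers:
            consumers[provider].append(consumer)
    impacted: Set[str] = set()
    queue = deque([component])
    while queue:
        node = queue.popleft()
        for consumer in consumers[node]:
            if consumer not in impacted:
                impacted.add(consumer)
                queue.append(consumer)
    return impacted


def correlated_alerts(
    alerts: List[Tuple[str, datetime]],
    root: str,
    root_time: datetime,
    depends_on: Dict[str, List[str]],
    window: timedelta = timedelta(minutes=15),
) -> List[str]:
    """Alert sources downstream of `root` that fired within `window` after it."""
    impacted = dependents(depends_on, root)
    return sorted({
        source for source, fired_at in alerts
        if source in impacted and root_time <= fired_at <= root_time + window
    })
```

With `policy-app` depending on `storage-01` and two branch applications depending on `policy-app`, alerts from those two branches are tied to the storage event, while an alert from a branch that relies on a different storage array is left out.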

This contextual intelligence turned IT from a reactive break-fix function into a proactive operations team that could identify and address systemic issues before they became outages.
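MTTR itself is simple arithmetic: total repair time divided by the number of incidents. Definitions vary (some teams start the clock at detection, others at the first user report), so this sketch assumes each incident is recorded as a hypothetical (detected, resolved) timestamp pair.

```python
from datetime import datetime, timedelta
from typing import List, Tuple


def mttr(incidents: List[Tuple[datetime, datetime]]) -> timedelta:
    """Mean time to repair: average of (resolved - detected) across incidents."""
    if not incidents:
        raise ValueError("MTTR is undefined with no incidents")
    total = sum((resolved - detected for detected, resolved in incidents), timedelta())
    return total / len(incidents)


def pct_reduction(before: timedelta, after: timedelta) -> float:
    """Percentage improvement from `before` to `after`."""
    return 100.0 * ((before - after) / before)
```

For instance, three made-up incidents lasting 4, 6, and 2 hours give an MTTR of 4 hours; if a later set lasts 1, 3, and 2 hours (MTTR of 2 hours), `pct_reduction` reports a 50% improvement. These numbers are illustrative, not the insurance firm's data.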

SLA Management and Continual Service Improvement

With all performance data flowing through a single platform, the management team gained something they'd never had before: clear visibility into SLA compliance across the entire network.

The platform's reporting module delivered:

SLA dashboards showing uptime compliance by branch, region, and device class

Trend analysis highlighting branches or device types that were trending toward SLA violations

Automated reports sent to technicians, managers, and process owners on a scheduled basis

Predictive notifications that flagged potential SLA breaches before they happened

This moved the organization from measuring SLA compliance retroactively to managing it proactively — a meaningful shift for an enterprise where network uptime directly impacts revenue.
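SLA tracking rests on two pieces of arithmetic: converting an uptime target into a downtime budget, and projecting current downtime forward. A 99.9% target over a 30-day month (43,200 minutes) allows 43,200 × 0.001 = 43.2 minutes of downtime. The sketch below uses naive linear extrapolation for the projection; that's an assumption for illustration, not a description of the platform's predictive model, and the branch IDs are hypothetical.

```python
from typing import Dict, List


def downtime_budget_min(target_pct: float, period_min: float) -> float:
    """Minutes of downtime allowed in the period at the given uptime target."""
    return period_min * (1 - target_pct / 100.0)


def uptime_pct(downtime_min: float, period_min: float) -> float:
    """Achieved uptime as a percentage of the period."""
    return 100.0 * (period_min - downtime_min) / period_min


def at_risk(downtime_so_far: Dict[str, float], elapsed_min: float,
            period_min: float, target_pct: float) -> List[str]:
    """Branches whose downtime, extrapolated linearly to the full period,
    would exceed the SLA budget."""
    if elapsed_min <= 0:
        return []
    budget = downtime_budget_min(target_pct, period_min)
    return sorted(
        branch for branch, down in downtime_so_far.items()
        if down * period_min / elapsed_min > budget
    )
```

Ten days into a 30-day month, a branch with 20 minutes of downtime is on pace for 60 minutes and gets flagged, while one with 10 minutes is on pace for 30 minutes and stays inside budget.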

Transform Your IT Operations with Motadata

If your IT team is managing a distributed network with multiple monitoring tools, inconsistent data, and too many false alerts, you're not alone — and you don't need to throw more budget at the problem.

Motadata's AIOps platform gives you unified visibility across your entire infrastructure — network devices, servers, applications, cloud workloads, and more — from a single deployment. With AI-driven root cause analysis, auto-discovery, and proactive alerting, you can monitor thousands of devices across hundreds of locations without adding headcount.

Start your free trial or contact our team to see how Motadata can transform your IT operations at any scale.

FAQs

How do you scale IT operations across hundreds of locations?

Scaling IT operations requires three things: a unified monitoring platform that provides a single pane of glass view, auto-discovery that eliminates manual device tracking, and a correlation engine that can connect events across distributed locations. Without these, you're running hundreds of independent IT environments instead of one cohesive operation.

What is single pane of glass monitoring?

Single pane of glass monitoring means viewing all IT infrastructure performance data — servers, network devices, applications, virtual machines, databases — from one unified dashboard. It eliminates the need to switch between multiple tools and ensures that every stakeholder sees the same data.

How can network monitoring reduce MTTR?

Network monitoring reduces MTTR by providing real-time visibility, automated root cause analysis, and contextual alerting. When the monitoring platform can correlate events across the network and surface the probable cause alongside the alert, IT teams spend far less time investigating and can move straight to remediation.

What's the ROI of unified IT infrastructure monitoring?

Organizations that consolidate multiple point monitoring tools into a unified platform typically see a 40-60% reduction in MTTR, near-elimination of unplanned downtime, and a 30-50% reduction in monitoring tool costs. The ROI compounds over time as the IT team shifts from reactive to proactive operations.