Top Concerns IT Admins Have About AIOps -- And How to Address Them

Amartya Gupta

AIOps promises to transform IT operations by applying artificial intelligence and machine learning to the flood of operational data that modern infrastructure generates. The pitch is compelling: automated anomaly detection, faster incident resolution, predictive maintenance, and fewer 3 AM pages. According to Gartner, by 2026, over 70% of enterprises will have adopted some form of AIOps capability in their monitoring and management stacks.

But for the IT admins who actually have to implement, operate, and trust these systems, the promises come with real concerns. Will the AI actually work with our data? Can we trust automated decisions in production? What happens to our existing workflows? And honestly -- is this going to replace my job?

These concerns aren't unfounded. They're practical questions that deserve honest answers. This guide addresses the most common concerns IT administrators raise about AIOps and provides actionable guidance for navigating them.

AIOps (Artificial Intelligence for IT Operations) is the application of machine learning and data analytics to IT operations data -- including metrics, logs, events, and traces -- to automate anomaly detection, root cause analysis, event correlation, and incident response.

Key Takeaways

AIOps is a tool for IT admins, not a replacement for them. It handles data processing at scale while humans make judgment calls.

Data quality is the single biggest factor in AIOps success or failure. Garbage in, garbage out applies with full force.

Alert fatigue can actually get worse with AIOps if threshold tuning and noise suppression aren't configured carefully.

Start with focused, high-value use cases rather than trying to automate everything at once.

The skill gap is real, but upskilling existing IT staff is more practical (and more effective) than hiring entirely new teams.

Why AIOps Matters for Modern IT Operations

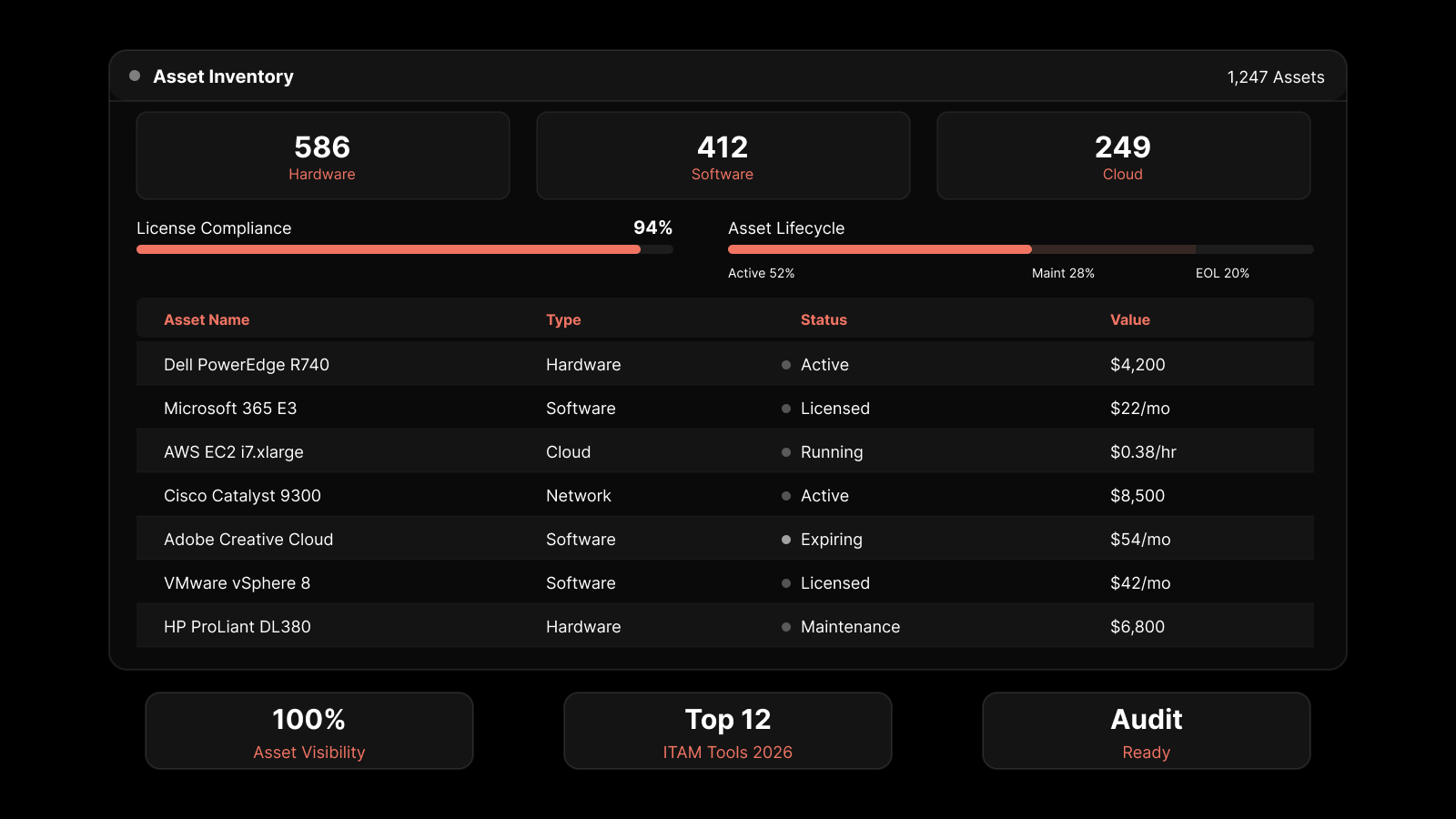

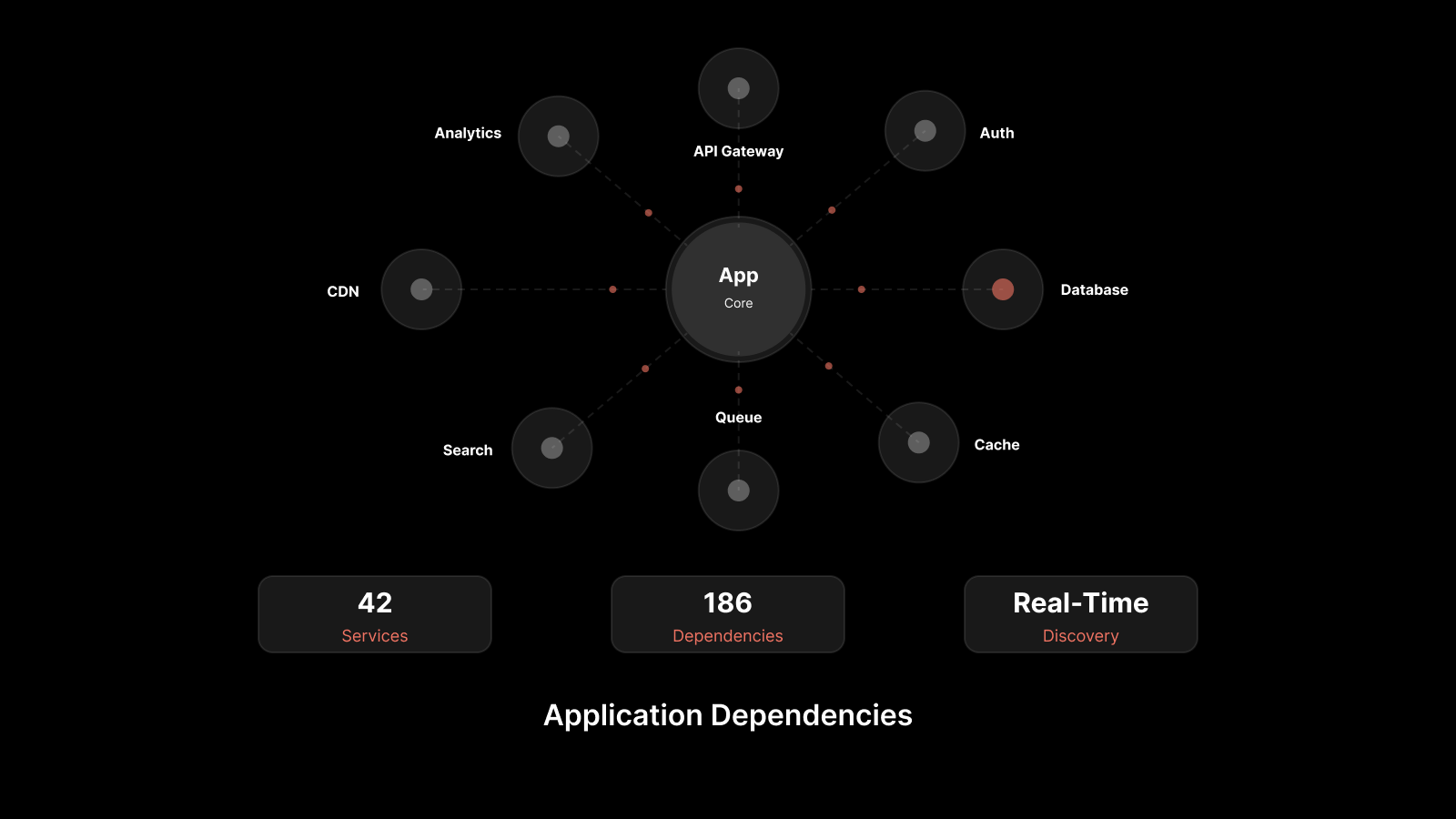

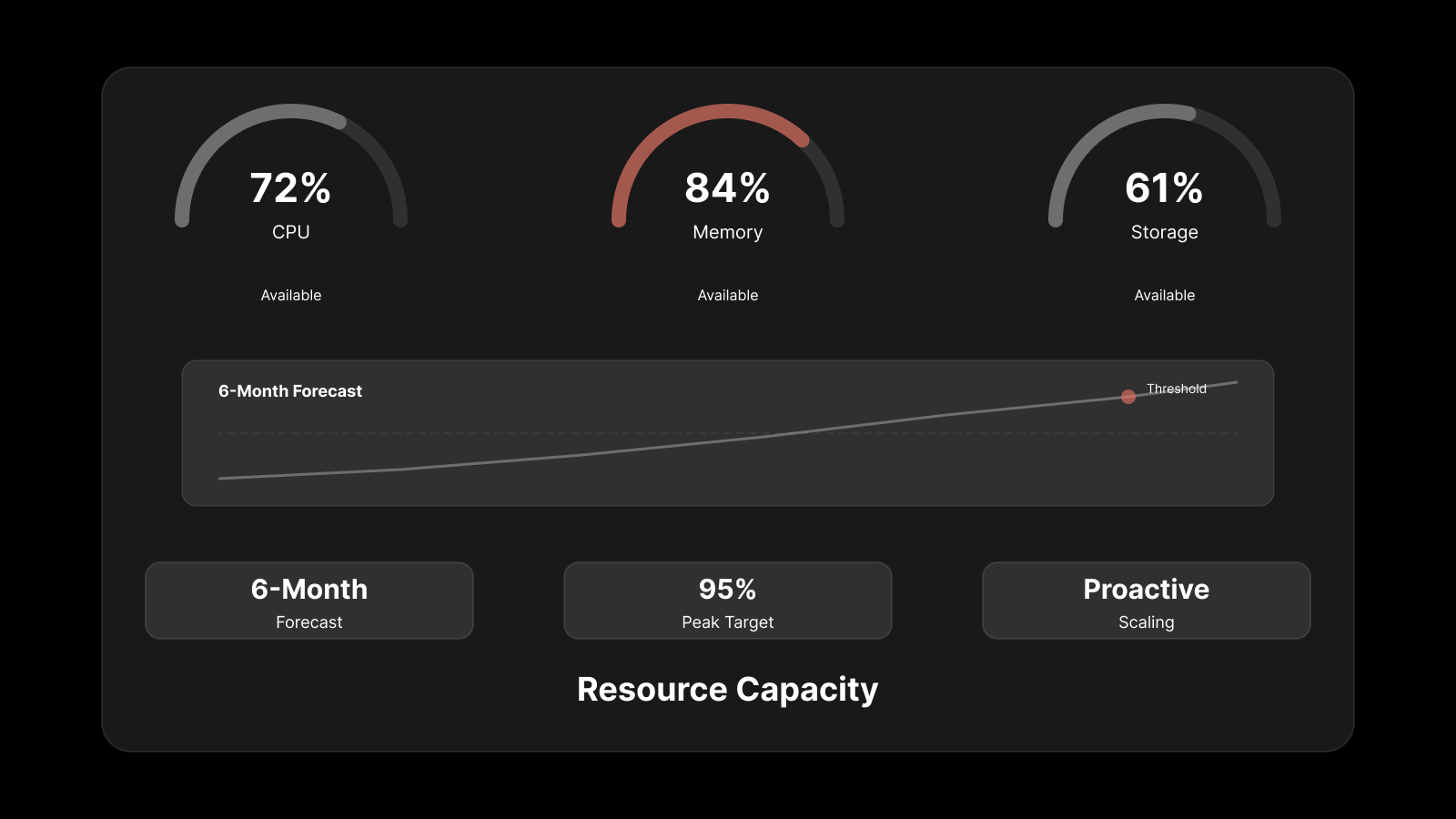

The scale and complexity of modern IT infrastructure have outpaced human capacity for manual monitoring. A mid-sized enterprise generates millions of events, metrics, and log entries per day across servers, network devices, cloud workloads, containers, and applications. No team of human operators can process that volume in real time.

DevOps practices accelerated deployment velocity, but they also increased the operational surface area. More deployments mean more potential failure points, more configuration changes, and more performance variables to track.

AIOps addresses this by applying machine learning to operational data at scale. It can correlate thousands of alerts into a single incident, detect anomalous patterns that manual review would miss, and identify root causes across distributed systems faster than human investigation allows.

The value is real -- but so are the implementation challenges. Here's what IT admins are most concerned about.

Concern 1: Data Quality and Quantity

The worry: "Our monitoring data is messy, incomplete, and scattered across different tools. How can AI produce good results from bad data?"

This is the most legitimate concern on the list. Machine learning models are only as good as the data they're trained on. If your telemetry data is inconsistent, fragmented across siloed tools, or full of gaps, AIOps models will produce unreliable predictions and inaccurate correlations.

How to address it:

Audit your data sources before deploying AIOps. Identify gaps in monitoring coverage, inconsistent data formats, and stale configurations.

Standardize log formats and metric naming conventions across your infrastructure. Structured logging (JSON with consistent field names) dramatically improves AI model accuracy.

Start AIOps with your highest-quality data sources first. As the platform proves value with clean data, extend coverage to more complex sources incrementally.

Treat data quality as an ongoing practice, not a one-time project. Assign ownership for monitoring data quality just as you would for application code quality.
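
As a rough illustration of the structured-logging and audit steps above, the sketch below emits log records as JSON with one fixed set of field names and checks existing lines for missing fields. The field names (`service`, `host`, and so on) and the helper `missing_fields` are assumptions made for this example, not part of any particular AIOps product.

```python
import json
import logging
from datetime import datetime, timezone
from typing import List

# Hypothetical field set; substitute the names your own conventions use.
REQUIRED_FIELDS = ("timestamp", "level", "service", "host", "message")


class JsonFormatter(logging.Formatter):
    """Render every log record as one JSON object with the same field names."""

    def format(self, record: logging.LogRecord) -> str:
        entry = {
            "timestamp": datetime.fromtimestamp(
                record.created, tz=timezone.utc
            ).isoformat(),
            "level": record.levelname,
            # "service" and "host" are expected to be attached via `extra=`
            # or set on the record; they are None when the caller omits them.
            "service": getattr(record, "service", None),
            "host": getattr(record, "host", None),
            "message": record.getMessage(),
        }
        return json.dumps(entry)


def missing_fields(line: str) -> List[str]:
    """Return the required fields that are absent or empty in one log line."""
    try:
        entry = json.loads(line)
    except json.JSONDecodeError:
        return list(REQUIRED_FIELDS)  # unparseable line: treat all as missing
    if not isinstance(entry, dict):
        return list(REQUIRED_FIELDS)
    return [f for f in REQUIRED_FIELDS if entry.get(f) in (None, "")]
```

Running `missing_fields` over a sample of lines from each source gives a quick, if crude, picture of which tools need attention before their data is fed to the platform.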

Concern 2: Alert Fatigue Getting Worse, Not Better

The worry: "We're already drowning in alerts. Won't AI just generate even more noise?"

This concern is valid because poorly configured AIOps can indeed make alert fatigue worse. If the system generates low-confidence predictions, fails to deduplicate correlated alerts, or doesn't learn from false positives, operators end up with more noise than they started with.

How to address it:

Choose an AIOps platform with strong alert correlation and suppression capabilities. The system should consolidate related alerts into a single incident, not multiply them.

Configure confidence thresholds carefully. Start with high-confidence alerts only and lower the threshold gradually as you validate the system's accuracy.

Implement feedback loops. When an AI-generated alert is a false positive, mark it as such so the model learns and improves.

Review alert reduction metrics regularly. A well-tuned AIOps platform should reduce your total alert volume by 60-90%, not increase it.
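
To make the threshold and consolidation ideas above concrete, here is a minimal sketch that drops low-confidence alerts and then groups repeats of the same alert into one incident. The `Alert` fields, the 0.9 starting threshold, and the five-minute window are illustrative assumptions; real platforms typically correlate on topology and learned patterns as well, which this does not attempt.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass
class Alert:
    source: str        # host or service that raised the alert
    check: str         # what fired, e.g. "cpu_high"
    timestamp: float   # seconds since epoch
    confidence: float  # 0.0-1.0 score (hypothetical field for this sketch)


def correlate(
    alerts: List[Alert],
    min_confidence: float = 0.9,
    window_seconds: float = 300,
) -> List[List[Alert]]:
    """Filter by confidence, then group repeats into incidents.

    An alert joins an existing incident when it has the same (source, check)
    and arrives within window_seconds of that incident's latest alert.
    """
    incidents: List[List[Alert]] = []
    latest: Dict[Tuple[str, str], List[Alert]] = {}
    for alert in sorted(alerts, key=lambda a: a.timestamp):
        if alert.confidence < min_confidence:
            continue  # start strict; lower min_confidence as trust grows
        key = (alert.source, alert.check)
        current = latest.get(key)
        if current and alert.timestamp - current[-1].timestamp <= window_seconds:
            current.append(alert)
        else:
            current = [alert]
            incidents.append(current)
            latest[key] = current
    return incidents
```

Comparing `len(alerts)` with `len(correlate(alerts))` over a week of history is one simple way to track the alert reduction metric mentioned above.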

Concern 3: Trusting Automated Decisions in Production

The worry: "I'm not comfortable letting an AI make changes to production systems. What if it gets it wrong?"

This is a reasonable concern, and the answer is: don't let it. At least not initially. The most successful AIOps implementations follow a graduated autonomy model:

Phase 1 -- Advisory mode: AI detects anomalies and recommends actions, but humans review and approve every change. This builds trust and validates the system's judgment.

Phase 2 -- Supervised automation: AI can execute low-risk automated responses (restarting a non-critical service, scaling a resource) with human oversight. High-risk actions still require approval.

Phase 3 -- Autonomous operations: For well-understood, repeatedly validated scenarios, AI handles detection and remediation independently. Humans focus on novel problems, architectural decisions, and strategic improvements.

Most organizations stay in Phase 1-2 for the first 6-12 months. That's fine. Trust is earned through consistent accuracy, not mandated by implementation timelines.
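
The three phases above can be expressed as a small policy check that sits in front of any automated action. This is only a sketch of the idea; the names `Phase`, `Risk`, and `requires_approval` are invented for illustration, and how an action gets classified as low or high risk is left to your own change-management rules.

```python
from enum import Enum


class Phase(Enum):
    ADVISORY = 1    # AI recommends, humans approve every change
    SUPERVISED = 2  # low-risk actions run automatically
    AUTONOMOUS = 3  # validated scenarios run without approval


class Risk(Enum):
    LOW = 1   # e.g. restarting a non-critical service
    HIGH = 2  # anything that needs a human decision


def requires_approval(phase: Phase, risk: Risk, validated_scenario: bool = False) -> bool:
    """Return True when a human must approve the action before it runs."""
    if phase is Phase.ADVISORY:
        return True
    if phase is Phase.SUPERVISED:
        return risk is Risk.HIGH
    # AUTONOMOUS: only well-understood, repeatedly validated scenarios
    # are allowed to run unattended; everything else still goes to a human.
    return not validated_scenario
```

Keeping the phase as an explicit setting makes it easy to move one class of action forward, or back, without touching the rest of the automation.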

Concern 4: Skill Gaps and Learning Curve

The worry: "My team manages networks and servers. We're not data scientists. How are we supposed to operate an AI platform?"

Modern AIOps platforms are designed for IT practitioners, not data scientists. You don't need to build or train machine learning models. The platform handles model training, tuning, and updating internally.

That said, your team does need to develop new skills:

Understanding AI outputs: Learning to interpret confidence scores, anomaly severity ratings, and correlation results well enough to make informed decisions.

Feedback and tuning: Knowing how to provide feedback on false positives and false negatives so the system improves over time.

Data pipeline management: Understanding how monitoring data flows from sources through collection, processing, and analysis.

How to address it:

Invest in vendor-provided training programs. Most AIOps vendors offer certification paths designed for IT operations professionals.

Start with a small pilot team that builds expertise and can train the broader team.

Choose a platform with an intuitive interface that presents AI insights in operational language, not data science terminology.

Concern 5: Integration With Existing Tools and Workflows

The worry: "We've spent years building our monitoring and incident response workflows. AIOps better work with what we have, not replace everything."

Valid. An AIOps platform that requires you to rip and replace your existing monitoring stack creates more disruption than value. The right approach is augmentation, not replacement.

How to address it:

Evaluate AIOps platforms based on their integration ecosystem. Look for pre-built connectors to your existing network monitoring tools, ITSM platforms, logging solutions, and communication channels.

Ensure the platform can ingest data from your current monitoring tools via APIs, webhooks, syslog, SNMP, or agent-based collection.

Map your existing incident response workflows and verify that the AIOps platform can plug into them -- routing alerts to the right teams, creating tickets in your ITSM system, and triggering your existing runbooks.
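
One way to picture the ingestion step is a thin normalization layer that maps each tool's payload onto a single alert shape before anything else sees it. The two payload formats below (`tool_a`, `tool_b`) and all field names are hypothetical, made up for this sketch; a real connector would follow the actual schema of the tool it reads from.

```python
from datetime import datetime, timezone
from typing import Any, Callable, Dict

Payload = Dict[str, Any]


def from_tool_a(payload: Payload) -> Payload:
    # tool_a (hypothetical) sends epoch seconds and a numeric severity 1-5.
    return {
        "source": payload["hostname"],
        "severity": "critical" if payload["sev"] >= 4 else "warning",
        "summary": payload["msg"],
        "timestamp": datetime.fromtimestamp(
            payload["ts"], tz=timezone.utc
        ).isoformat(),
    }


def from_tool_b(payload: Payload) -> Payload:
    # tool_b (hypothetical) sends ISO 8601 timestamps and a severity string.
    return {
        "source": payload["device"],
        "severity": payload["severity"].lower(),
        "summary": payload["description"],
        "timestamp": payload["time"],
    }


NORMALIZERS: Dict[str, Callable[[Payload], Payload]] = {
    "tool_a": from_tool_a,
    "tool_b": from_tool_b,
}


def normalize(tool: str, payload: Payload) -> Payload:
    """Convert one tool-specific payload into the common alert shape."""
    if tool not in NORMALIZERS:
        raise ValueError(f"no normalizer registered for {tool!r}")
    try:
        return NORMALIZERS[tool](payload)
    except KeyError as exc:
        raise ValueError(f"{tool} payload is missing field {exc}") from exc
```

Because existing tools keep sending what they already send, this kind of layer supports augmentation rather than replacement: adding a source means adding one function, not changing the workflow downstream.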

Concern 6: Cost and ROI Uncertainty

The worry: "AIOps platforms aren't cheap. How do I justify the investment when I can't predict the ROI?"

This is a budget reality that every IT admin faces. AIOps platforms have licensing costs, implementation effort, and ongoing tuning requirements. The ROI needs to be quantifiable.

How to address it:

Baseline your current operational costs before deploying AIOps: mean time to detect (MTTD), mean time to resolve (MTTR), number of incidents per month, hours spent on manual investigation, and downtime costs.

Set specific, measurable targets: "Reduce MTTR by 30%," "Cut alert volume by 50%," "Prevent X hours of unplanned downtime per quarter."

Start with a focused use case that has clear cost impact. For example, if your team spends 20 hours per week on manual log analysis, automating that single workflow yields a time saving you can measure and report.

Track ROI quarterly and share results with stakeholders.
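
The baseline metrics above are straightforward to compute from incident records you probably already have in your ITSM system. The sketch below assumes each incident carries three timestamps and measures MTTR from detection to resolution; some teams measure it from the start of the incident instead, so treat the definitions as an assumption to adjust.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import mean
from typing import List


@dataclass
class Incident:
    started: datetime   # when the problem actually began
    detected: datetime  # when monitoring or a human noticed it
    resolved: datetime  # when service was restored


def mttd_minutes(incidents: List[Incident]) -> float:
    """Mean time to detect, in minutes."""
    return mean(
        [(i.detected - i.started).total_seconds() / 60 for i in incidents]
    )


def mttr_minutes(incidents: List[Incident]) -> float:
    """Mean time to resolve, in minutes, measured from detection."""
    return mean(
        [(i.resolved - i.detected).total_seconds() / 60 for i in incidents]
    )


def percent_reduction(baseline: float, current: float) -> float:
    """How much a metric dropped relative to its baseline, as a percentage."""
    return (baseline - current) / baseline * 100
```

Computing these once before the rollout and again each quarter gives you the before-and-after numbers that a target such as "Reduce MTTR by 30%" needs.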

Concern 7: Job Displacement Fears

The worry: "If AI handles monitoring and incident response, what's left for the operations team?"

Plenty. AIOps automates the repetitive, data-intensive aspects of IT operations -- correlating thousands of alerts, searching through millions of log entries, and detecting patterns in metrics data. These are tasks that consume time without requiring human judgment.

What AIOps doesn't do: make architectural decisions, design monitoring strategies, handle novel incidents, communicate with business stakeholders, manage vendor relationships, or improve operational practices.

IT admins who adopt AIOps typically find that their role evolves from reactive firefighting to proactive engineering. They spend less time on repetitive investigation and more time on improvements that prevent incidents from occurring in the first place.

Concern 8: Building ML Models for IT Operations Is Hard

The worry: "Our environment is unique. How can a generic AI model understand our specific infrastructure?"

This concern comes from early AIOps experiences where organizations attempted to build custom ML models from scratch. That approach required data science expertise, large labeled datasets, and significant development effort -- resources most IT teams don't have.

Modern AIOps platforms use pre-trained models that adapt to your environment through continuous learning. The platform establishes baselines specific to your infrastructure, learns your normal operational patterns, and refines its models based on the data flowing through your systems.

How to address it:

Choose a platform whose adaptive learning doesn't require manual model training.

Feed the platform comprehensive, high-quality data from the start -- the more representative the data, the faster the models adapt.

Provide consistent feedback on predictions and alerts so models calibrate to your environment's specific characteristics.

Expect a learning period of 2-4 weeks before predictions become reliable for your specific environment.
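
To give a feel for what an environment-specific baseline means, here is a deliberately simple sketch: it keeps a rolling window of recent values for one metric and flags a new value that sits far from that window's mean. This is a basic z-score check chosen for illustration, not a description of how any vendor's models work, and the window size, threshold, and warm-up count are arbitrary assumptions.

```python
from collections import deque
from statistics import mean, stdev


class RollingBaseline:
    """Flag values that deviate strongly from a metric's recent history."""

    def __init__(self, window: int = 60, threshold: float = 3.0, min_samples: int = 10):
        self.values = deque(maxlen=window)  # oldest samples fall off automatically
        self.threshold = threshold          # how many standard deviations is "anomalous"
        self.min_samples = min_samples      # warm-up: no verdicts until this many samples

    def observe(self, value: float) -> bool:
        """Return True if value is anomalous, then add it to the window."""
        anomalous = False
        if len(self.values) >= self.min_samples:
            mu = mean(self.values)
            sigma = stdev(self.values)
            if sigma > 0:
                anomalous = abs(value - mu) / sigma > self.threshold
            else:
                # A perfectly flat history: any change at all stands out.
                anomalous = value != mu
        # Every value, anomalous or not, becomes part of the baseline,
        # so a sustained shift is eventually treated as the new normal.
        self.values.append(value)
        return anomalous
```

The warm-up behaviour mirrors the learning period described above on a small scale: until enough representative data has been seen, the baseline has nothing reliable to compare against.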

See What AIOps Can Do for Your Operations With Motadata

Motadata's AIOps platform brings AI-powered anomaly detection, intelligent alert correlation, and predictive analytics to your IT operations without requiring data science expertise. Built for IT practitioners, Motadata integrates with your existing monitoring and ITSM tools, ingests data from across your infrastructure, and surfaces the insights your team needs to resolve issues faster and prevent outages. With an intuitive interface, adaptive learning models, and graduated automation controls, Motadata gives you AIOps that works with your workflow -- not against it. Start a free trial and experience the difference.

FAQs

What is AIOps?

AIOps (Artificial Intelligence for IT Operations) applies machine learning and analytics to IT operational data to automate key processes: anomaly detection, event correlation, root cause analysis, and incident response. It processes the massive volume of metrics, logs, and events that modern infrastructure generates and surfaces insights that human operators can act on.

What are the main challenges of implementing AIOps?

The primary challenges are data quality (inconsistent or incomplete monitoring data), alert tuning (preventing AI from increasing noise instead of reducing it), skill development (training IT staff to work with AI-driven insights), integration complexity (connecting AIOps with existing tools and workflows), and trust building (gaining confidence in AI recommendations before enabling automation).

How does AIOps differ from traditional monitoring?

Traditional monitoring collects metrics and checks them against static thresholds. AIOps adds machine learning to detect anomalies that static thresholds miss, correlate related events across systems, predict failures before they occur, and automate routine investigation tasks. Traditional monitoring tells you something is wrong; AIOps helps you understand why and what to do about it.

Will AIOps replace IT operations teams?

No. AIOps automates data-intensive, repetitive tasks -- alert correlation, log analysis, pattern detection -- that consume operations team time without requiring human judgment. It frees IT staff to focus on higher-value work: architectural improvements, novel problem solving, vendor management, and operational strategy. The role evolves from reactive firefighter to proactive engineer.

How do I get started with AIOps?

Start with a focused use case that addresses your biggest operational pain point -- typically alert noise reduction or automated anomaly detection. Audit your monitoring data quality, select a platform that integrates with your existing tools, run a pilot with a small team, and expand based on demonstrated results. Resist the temptation to automate everything at once; incremental adoption builds trust and delivers measurable wins.