Centralized Logging and the Big Data Challenge: How to Tame Log Sprawl at Scale

Amartya Gupta

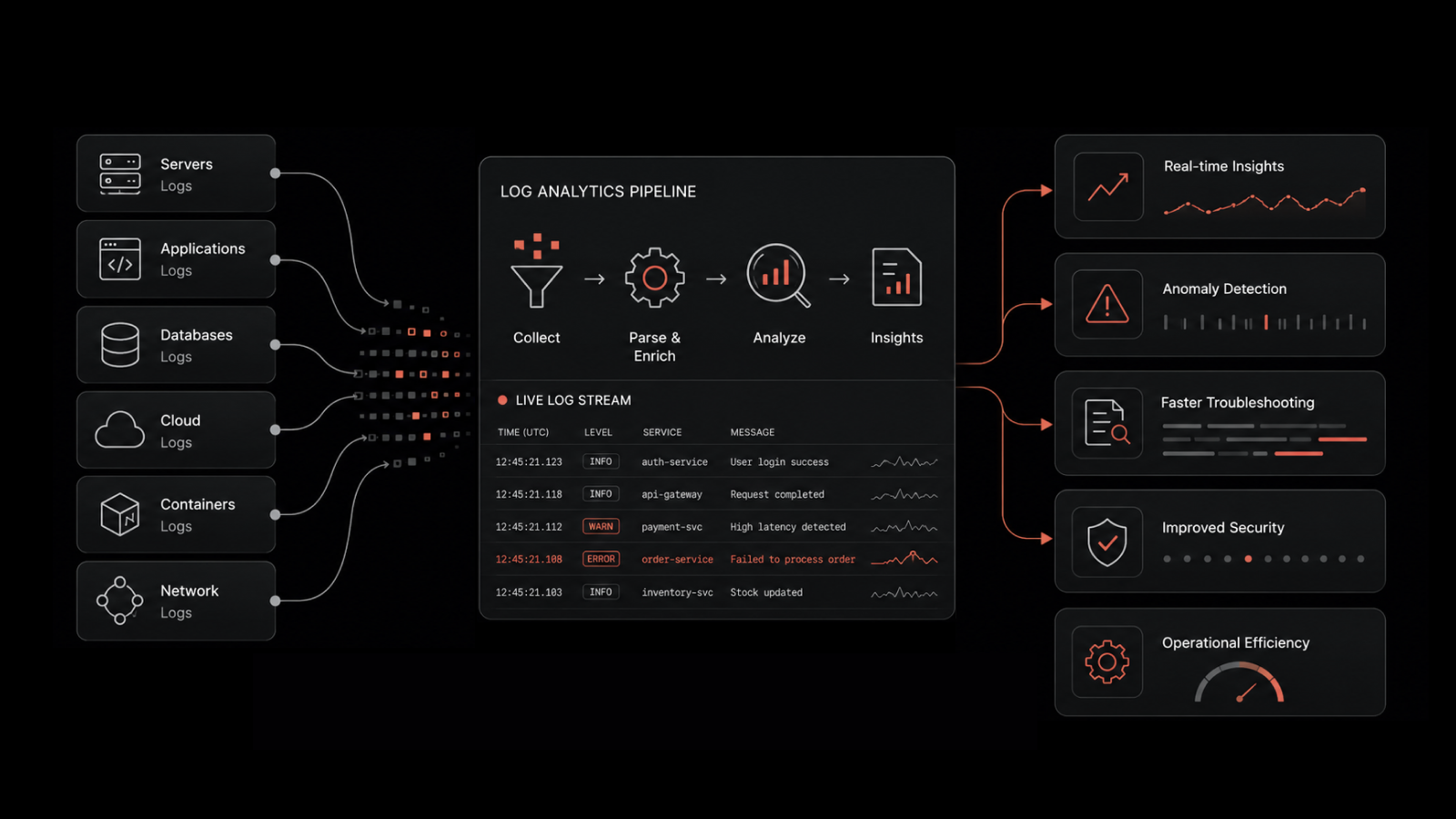

Modern IT environments generate millions of log entries every hour. Between application servers, network devices, cloud workloads, and security appliances, the volume of log data has grown from a manageable stream to an overwhelming flood. For IT teams tasked with maintaining uptime, meeting compliance mandates, and troubleshooting incidents fast, centralized logging isn't optional -- it's the foundation of operational visibility.

But here's the problem: collecting logs is easy. Making them useful at scale is where most organizations struggle. That's the big data challenge of centralized logging, and it's one that every growing IT operation needs to solve.

Centralized logging is the practice of aggregating log data from all infrastructure components -- servers, applications, network devices, and cloud services -- into a single, searchable repository for analysis, correlation, and compliance reporting.

Why Centralized Logging Matters More Than Ever

Log data used to serve one purpose: troubleshooting. When something broke, engineers would dig through text files to find the error. That approach worked when infrastructure was simple and the number of log sources was small.

Today's reality is different. Organizations run hybrid environments spanning on-premises data centers, public cloud platforms, containers, and SaaS applications. Each component generates its own log format, at its own volume, on its own schedule.

Without centralization, log data stays siloed. When an incident occurs, engineers spend more time locating the right logs than actually diagnosing the problem. Industry estimates suggest that IT teams can spend up to 30% of their troubleshooting time just finding relevant log entries across scattered systems.

Centralized logging solves this by creating a single source of truth. Every log entry -- whether it comes from an Apache web server, a Kubernetes pod, a firewall, or an AWS Lambda function -- lands in one repository where it can be searched, filtered, correlated, and analyzed.

Beyond troubleshooting, centralized logs support proactive monitoring, capacity planning, security incident investigation, and compliance auditing. They're the connective tissue of modern IT operations.

The Big Data Problem: Why Log Management Gets Hard at Scale

The "big data" label isn't hyperbole when it comes to logs. A mid-sized enterprise can easily generate 50-100 GB of log data per day. At that volume, three challenges emerge:

Storage and retention. Raw logs consume significant storage, and compliance mandates often require retaining logs for months or years. Without compression, indexing, and tiered storage strategies, costs spiral.

Signal-to-noise ratio. The vast majority of log entries are routine. Finding the five entries that matter among millions of routine messages is the classic needle-in-a-haystack problem. Without filtering, tagging, and intelligent parsing, analysts drown in noise.

Speed of analysis. When an incident is in progress, you can't wait 10 minutes for a query to return results. Log management platforms need to support near-real-time search and correlation across high-volume data streams.

These challenges compound as organizations add more infrastructure. What works for 10 servers falls apart at 500. What works for a single cloud provider breaks when you go multi-cloud. The big data challenge isn't just about volume -- it's about making that volume useful under pressure.
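To make the storage side of this concrete, here is a rough back-of-the-envelope sketch using the 50-100 GB per day range above. The one-year retention period and the 8:1 compression ratio are illustrative assumptions, not measurements; real ratios depend heavily on log content and the storage engine.

```python
def retained_storage_gb(daily_gb: float, retention_days: int,
                        compression_ratio: float = 1.0) -> float:
    """Steady-state storage needed to keep `retention_days` of logs.

    compression_ratio is raw size divided by stored size (assumed, not measured).
    """
    if compression_ratio <= 0:
        raise ValueError("compression_ratio must be positive")
    return daily_gb * retention_days / compression_ratio


for daily_gb in (50, 100):
    raw_tb = retained_storage_gb(daily_gb, 365) / 1000
    packed_tb = retained_storage_gb(daily_gb, 365, compression_ratio=8.0) / 1000
    print(f"{daily_gb} GB/day kept for 365 days: "
          f"{raw_tb:.2f} TB raw, {packed_tb:.2f} TB at an assumed 8:1")
```

Under these assumptions, a year of retention lands between roughly 18 and 37 TB of raw data before indexes and replicas are counted, which is why compression and tiering decisions matter early.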

Building a Centralized Log Management Architecture

A well-designed centralized logging architecture has four layers, each serving a distinct function:

Collection layer. Agents, syslog forwarders, and API integrations pull log data from every source in your environment. The collection layer needs to handle structured logs (JSON, key-value pairs), semi-structured logs (syslog), and unstructured logs (plain text) without dropping entries during peak volume.

Transport layer. Collected logs need to move from source to storage reliably. Message queues and buffering mechanisms ensure that network interruptions or ingestion spikes don't result in lost data.

Storage and indexing layer. This is where logs are parsed, indexed, and stored for retrieval. Effective indexing enables sub-second search across billions of entries. Retention policies manage storage costs by archiving or deleting logs based on age and importance.

Analysis and visualization layer. Dashboards, alerting rules, and correlation engines turn stored logs into actionable insights. This layer is where log monitoring capabilities surface anomalies, trigger alerts, and provide the context engineers need to resolve incidents.

Each layer needs to scale independently. A spike in log volume shouldn't crash your analysis engine, and a complex query shouldn't slow down ingestion.
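As an illustration of what the collection and parsing steps have to cope with, the sketch below normalizes structured (JSON), semi-structured (syslog), and unstructured lines into one common record. It is a minimal example, not a production parser: the `normalize` function, the output field names, the `level` and `message` JSON keys, and the simplified syslog pattern are all assumptions made for this example.

```python
import json
import re
from datetime import datetime, timezone

# Simplified pattern for classic BSD-style syslog lines:
# "<PRI>Mmm dd hh:mm:ss host tag[pid]: message". Real-world syslog varies more.
SYSLOG_RE = re.compile(
    r"^<(?P<pri>\d{1,3})>(?P<ts>[A-Z][a-z]{2}\s+\d{1,2} \d{2}:\d{2}:\d{2}) "
    r"(?P<host>\S+) (?P<tag>[^:\[\s]+)(?:\[\d+\])?: (?P<msg>.*)$"
)
# Syslog severity is the priority value modulo 8.
SEVERITIES = ["emerg", "alert", "crit", "err", "warning", "notice", "info", "debug"]


def normalize(raw: str, source: str) -> dict:
    """Turn one raw log line into a common record (hypothetical schema)."""
    line = raw.strip()
    record = {
        "source": source,
        "received_at": datetime.now(timezone.utc).isoformat(),
        "format": "text",          # fallback: unstructured plain text
        "severity": "unknown",
        "message": line,
    }

    # 1. Structured: a JSON object with assumed "level" and "message" keys.
    try:
        parsed = json.loads(line)
    except json.JSONDecodeError:
        parsed = None
    if isinstance(parsed, dict):
        record.update(
            format="json",
            severity=str(parsed.get("level", "unknown")).lower(),
            message=str(parsed.get("message", "")),
            fields=parsed,
        )
        return record

    # 2. Semi-structured: syslog header followed by free text.
    match = SYSLOG_RE.match(line)
    if match:
        record.update(
            format="syslog",
            severity=SEVERITIES[int(match["pri"]) % 8],
            message=match["msg"],
            host=match["host"],
            app=match["tag"],
        )
    return record


print(normalize('{"level": "ERROR", "message": "db timeout"}', "app-01")["severity"])
print(normalize("<34>Oct 11 22:14:15 mymachine su: 'su root' failed", "fw-01")["severity"])
print(normalize("Disk almost full on /var", "legacy-01")["format"])
```

Falling back to a plain-text record instead of raising an error reflects the requirement above that the collection layer should not drop entries it cannot fully parse.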

Compliance and Regulatory Requirements for Log Management

For many organizations, centralized logging isn't just an operational best practice -- it's a regulatory requirement. Multiple compliance frameworks mandate log collection, retention, and review:

PCI DSS requires logging all access to system components and cardholder data, and retaining audit logs for at least 12 months, with at least the most recent three months immediately available for analysis.

HIPAA mandates audit controls that record and examine activity in systems containing electronic protected health information (ePHI).

SOX requires companies to maintain audit trails for financial systems, including access logs and change records.

FISMA requires U.S. federal agencies and their contractors to secure federal information systems; the NIST controls used to implement it include audit logging, log review, and continuous monitoring.

ISO 27001/27002 recommends centralized event logging as part of information security management, covering access events, administrative activities, and fault logging.

Meeting these requirements without centralized logging is nearly impossible. Auditors need to see that logs are collected consistently, stored securely, retained for the required duration, and reviewed regularly. A centralized platform with built-in retention policies, access controls, and audit reports makes compliance demonstrable rather than aspirational.
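A built-in retention policy can be as simple as a rule that maps a log's age to a storage action. The sketch below uses the PCI DSS figures mentioned above (one year of retention, with roughly the most recent 90 days immediately available) purely as an example; the constants, the `storage_action` function, and the action names are hypothetical, and actual values should come from your own compliance scope.

```python
from datetime import date

HOT_DAYS = 90          # assumed window that must stay immediately searchable
RETENTION_DAYS = 365   # assumed minimum total retention


def storage_action(log_date: date, today: date) -> str:
    """Decide what to do with a day's worth of logs based on its age."""
    age_days = (today - log_date).days
    if age_days < 0:
        raise ValueError("log_date is in the future")
    if age_days <= HOT_DAYS:
        return "keep_hot"               # indexed and searchable right away
    if age_days <= RETENTION_DAYS:
        return "archive"                # cheaper storage, still retrievable
    return "eligible_for_deletion"      # past the assumed retention minimum


today = date(2024, 6, 30)
for day in (date(2024, 6, 1), date(2024, 1, 1), date(2023, 1, 1)):
    print(day.isoformat(), storage_action(day, today))
```

Keeping the thresholds as named constants makes the policy easy to show to an auditor and easy to tighten when a stricter framework applies.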

Best Practices for Centralized Logging at Scale

Getting centralized logging right requires planning beyond just deploying a tool. These practices separate effective log management from log hoarding:

Define what you're logging and why. Not every log source needs the same treatment. Prioritize security-critical systems, customer-facing applications, and compliance-scoped infrastructure. Document your log sources, retention requirements, and analysis objectives before you start collecting.

Standardize log formats. Mixed formats slow down analysis. Where possible, use structured logging (JSON) with consistent field names for timestamps, severity levels, source identifiers, and event types. Structured logs are faster to search, filter, and correlate because their fields can be indexed and queried directly instead of being extracted from free text at search time.
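For teams writing their own applications, structured logging can start with a small formatter. The following is a minimal sketch built on Python's standard `logging` module; the `JsonFormatter` class, the `build_logger` helper, and the exact field names (`timestamp`, `severity`, `source`, `event_type`) are assumptions chosen to mirror the fields listed above.

```python
import json
import logging
from datetime import datetime, timezone


class JsonFormatter(logging.Formatter):
    """Render every log record as one JSON object with consistent field names."""

    def __init__(self, source: str):
        super().__init__()
        self.source = source

    def format(self, record: logging.LogRecord) -> str:
        payload = {
            "timestamp": datetime.fromtimestamp(record.created, tz=timezone.utc).isoformat(),
            "severity": record.levelname,
            "source": self.source,
            # event_type is optional and supplied via logging's `extra` argument.
            "event_type": getattr(record, "event_type", "generic"),
            "message": record.getMessage(),
        }
        return json.dumps(payload)


def build_logger(name: str, source: str, stream=None) -> logging.Logger:
    """Create a logger that emits JSON lines (to stderr when stream is None)."""
    handler = logging.StreamHandler(stream)
    handler.setFormatter(JsonFormatter(source))
    logger = logging.getLogger(name)
    logger.setLevel(logging.INFO)
    logger.handlers = [handler]
    logger.propagate = False
    return logger


logger = build_logger("checkout", source="web-01")
logger.warning("payment gateway timeout", extra={"event_type": "dependency_error"})
```

Because every service emits the same keys, a query such as "all `dependency_error` events with severity WARNING or higher" works across sources without per-application parsing rules.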

Set up log correlation rules. Individual log entries rarely tell the full story. Correlation rules connect related events across systems -- linking a failed login attempt in an authentication log with a firewall block in a network log, for example. This cross-system visibility is where centralized logging delivers its highest value.
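The failed-login example can be sketched as a simple time-window join. This assumes both log types have already been normalized into dictionaries with `timestamp`, `src_ip`, and either `outcome` or `action` fields; those field names, the `correlate` function, and the five-minute window are illustrative choices, not a description of any particular product's rule engine.

```python
from datetime import datetime, timedelta


def correlate(auth_events, firewall_events, window=timedelta(minutes=5)):
    """Pair failed logins with firewall blocks from the same IP within `window`."""
    # Index firewall blocks by source IP so each login only scans relevant events.
    blocks_by_ip = {}
    for event in firewall_events:
        if event["action"] == "block":
            blocks_by_ip.setdefault(event["src_ip"], []).append(event)

    matches = []
    for login in auth_events:
        if login["outcome"] != "failure":
            continue
        for block in blocks_by_ip.get(login["src_ip"], []):
            if abs(block["timestamp"] - login["timestamp"]) <= window:
                matches.append((login, block))
    return matches


t0 = datetime(2024, 1, 1, 12, 0, 0)
auth_log = [
    {"timestamp": t0, "src_ip": "203.0.113.7", "outcome": "failure", "user": "alice"},
    {"timestamp": t0, "src_ip": "198.51.100.2", "outcome": "success", "user": "bob"},
]
firewall_log = [
    {"timestamp": t0 + timedelta(minutes=2), "src_ip": "203.0.113.7", "action": "block"},
    {"timestamp": t0 + timedelta(hours=1), "src_ip": "203.0.113.7", "action": "block"},
]

for login, block in correlate(auth_log, firewall_log):
    print(f"{login['user']} failed login from {login['src_ip']}, "
          f"blocked at {block['timestamp'].isoformat()}")
```

Only the block two minutes after the failed login is paired; the one an hour later falls outside the window, which is the kind of tuning decision a real correlation rule has to make explicit.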

Implement role-based access controls. Log data often contains sensitive information: IP addresses, usernames, API keys, and system configurations. Restrict access based on role and need. Security teams, compliance auditors, and operations engineers should each see only the logs relevant to their function.

Monitor your monitoring. Log ingestion pipelines can fail silently. Set up health checks on your collection agents, transport layer, and storage systems. If a critical log source goes quiet, you need to know immediately -- not during the next audit.
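One simple way to catch a source that has gone quiet is to track when each source last sent an event and compare that against an allowed silence period. The sketch below assumes you can obtain a "last seen" timestamp per source from your platform; the `silent_sources` function, the 15-minute default, and the source names are hypothetical.

```python
from datetime import datetime, timedelta, timezone

DEFAULT_MAX_SILENCE = timedelta(minutes=15)  # assumed default threshold


def silent_sources(last_seen, now, max_silence=None):
    """Return the sources whose most recent event is older than their allowed gap.

    last_seen maps source name -> datetime of its latest log entry.
    max_silence optionally maps source name -> per-source timedelta override.
    """
    max_silence = max_silence or {}
    quiet = []
    for source, seen_at in last_seen.items():
        limit = max_silence.get(source, DEFAULT_MAX_SILENCE)
        if now - seen_at > limit:
            quiet.append(source)
    return sorted(quiet)


now = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
last_seen = {
    "firewall-01": now - timedelta(minutes=2),
    "auth-server": now - timedelta(minutes=40),
    "batch-worker": now - timedelta(hours=3),
}
# The batch worker only logs during scheduled jobs, so it gets a longer allowance.
print(silent_sources(last_seen, now, {"batch-worker": timedelta(hours=24)}))
# prints ['auth-server']
```

Per-source overrides matter here: a single global threshold either misses chatty sources that stall or raises false alarms for sources that are quiet by design.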

Plan for growth. Log volumes grow with your infrastructure. Choose a platform that scales horizontally, supports tiered storage (hot/warm/cold), and provides cost visibility so you can forecast expenses as data volumes increase.

How AI-Powered Log Analysis Changes the Game

Traditional log analysis relies on keyword searches and manual pattern matching. An engineer writes a query, scans the results, and draws conclusions. That approach works for known issues with predictable signatures.

AI-powered log management tools go further. Machine learning models can baseline normal log patterns and flag deviations automatically -- even when the engineer doesn't know what to look for. This capability is especially valuable for:

Anomaly detection: Identifying unusual patterns in log volume, error rates, or access behavior that could indicate a security breach, misconfiguration, or emerging performance issue.

Root cause correlation: Automatically connecting related log entries across multiple systems to surface the root cause of an incident, which can shorten mean time to resolution (MTTR).

Predictive alerting: Recognizing patterns that historically precede outages or performance degradation and alerting teams before the impact reaches users.

Noise reduction: Suppressing routine, low-value log entries so that analysts can focus on the events that actually require attention.
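The idea of baselining normal behavior and flagging deviations can be shown with a deliberately simple statistical stand-in. The sketch below applies a rolling z-score to per-interval log counts; it is not a machine learning model and not how any specific product works, and the `volume_anomalies` function, the window size, and the threshold are assumptions for illustration.

```python
from statistics import mean, stdev


def volume_anomalies(counts, window=12, threshold=3.0):
    """Return indexes of intervals whose log volume deviates sharply from the
    preceding `window` intervals (rolling z-score)."""
    anomalies = []
    for i in range(window, len(counts)):
        baseline = counts[i - window:i]
        mu = mean(baseline)
        sigma = stdev(baseline)
        if sigma == 0:
            # A perfectly flat baseline gives no scale for a z-score; skip it.
            continue
        z_score = (counts[i] - mu) / sigma
        if abs(z_score) >= threshold:
            anomalies.append(i)
    return anomalies


# Twelve ordinary intervals followed by a sudden burst of log entries.
counts = [100, 102, 98, 101, 99, 100, 103, 97, 100, 101, 99, 100, 500]
print(volume_anomalies(counts))
```

Production systems typically account for seasonality (daily and weekly cycles) and many signals at once, but the core loop is the same: learn what normal looks like, then score each new observation against it.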

The shift from reactive log searching to proactive log intelligence is one of the most significant advances in IT operations. Organizations that adopt AI-powered log analysis can resolve incidents faster, catch security threats earlier, and spend less time on manual log review.

Take Control of Your Log Data With Motadata

Motadata's AI-native log management platform brings centralized logging, intelligent correlation, and automated anomaly detection together in a single solution. Ingest logs from any source -- servers, network devices, cloud workloads, and applications -- and let machine learning surface the insights that matter. With built-in compliance reporting, customizable dashboards, and real-time alerting, Motadata helps IT teams move from reactive log searching to proactive operational intelligence. Start with a free trial and see how Motadata transforms your log data from a storage burden into your most valuable troubleshooting asset.

FAQs

What is centralized logging?

Centralized logging is the practice of collecting log data from all infrastructure components -- servers, applications, network devices, and cloud services -- into a unified repository. This approach enables faster troubleshooting, streamlined compliance reporting, and cross-system correlation that siloed logging can't deliver.

Why is centralized logging considered a big data challenge?

Modern IT environments generate enormous volumes of log data daily. The challenge isn't just storing this data -- it's parsing, indexing, and analyzing it fast enough to support real-time troubleshooting and security monitoring. Without the right architecture and tooling, log volumes overwhelm storage budgets and analysis capabilities.

What compliance standards require centralized logging?

Multiple frameworks mandate or strongly recommend centralized log management, including PCI DSS, HIPAA, SOX, FISMA, GLBA, and ISO 27001/27002. The specifics vary by framework, but they typically cover log collection scope, retention duration, access controls, and review frequency.

How does AI improve centralized log management?

AI-powered log analysis automates anomaly detection, root cause correlation, and predictive alerting. Instead of relying on manual keyword searches, machine learning models baseline normal behavior and flag deviations automatically. This approach catches issues faster, reduces alert noise, and identifies patterns that human analysts might miss.

What should I look for in a centralized logging tool?

Prioritize scalable ingestion, support for structured and unstructured log formats, real-time search and correlation, customizable alerting, compliance-ready retention policies, and role-based access controls. AI-powered analysis capabilities and integration with your existing monitoring stack are also key differentiators.