Leading vs Lagging Indicators: What’s The Difference?

You’re Measuring the Wrong Things If You’re Only Looking Backward

If your IT team’s dashboards are filled with resolved incident counts, monthly uptime percentages, and closed ticket tallies, you’re not managing performance; you’re reviewing history. That is the leading vs lagging indicators problem in action: every one of those metrics is a lagging indicator, and most IT teams over-index heavily on that one type.

The cost is real. When you only track what already happened, outages still occur, SLA breaches still catch you by surprise, and your team spends its time fighting fires instead of preventing them. Reactive IT operations aren’t caused by a lack of data; they’re caused by reviewing the wrong data too late.

Smart performance measurement requires two lenses simultaneously: one that confirms what happened, and one that signals what’s about to happen. In IT operations and ITSM, those two lenses are lagging indicators and leading indicators.

What Are Indicators? (And Why They Matter in IT)

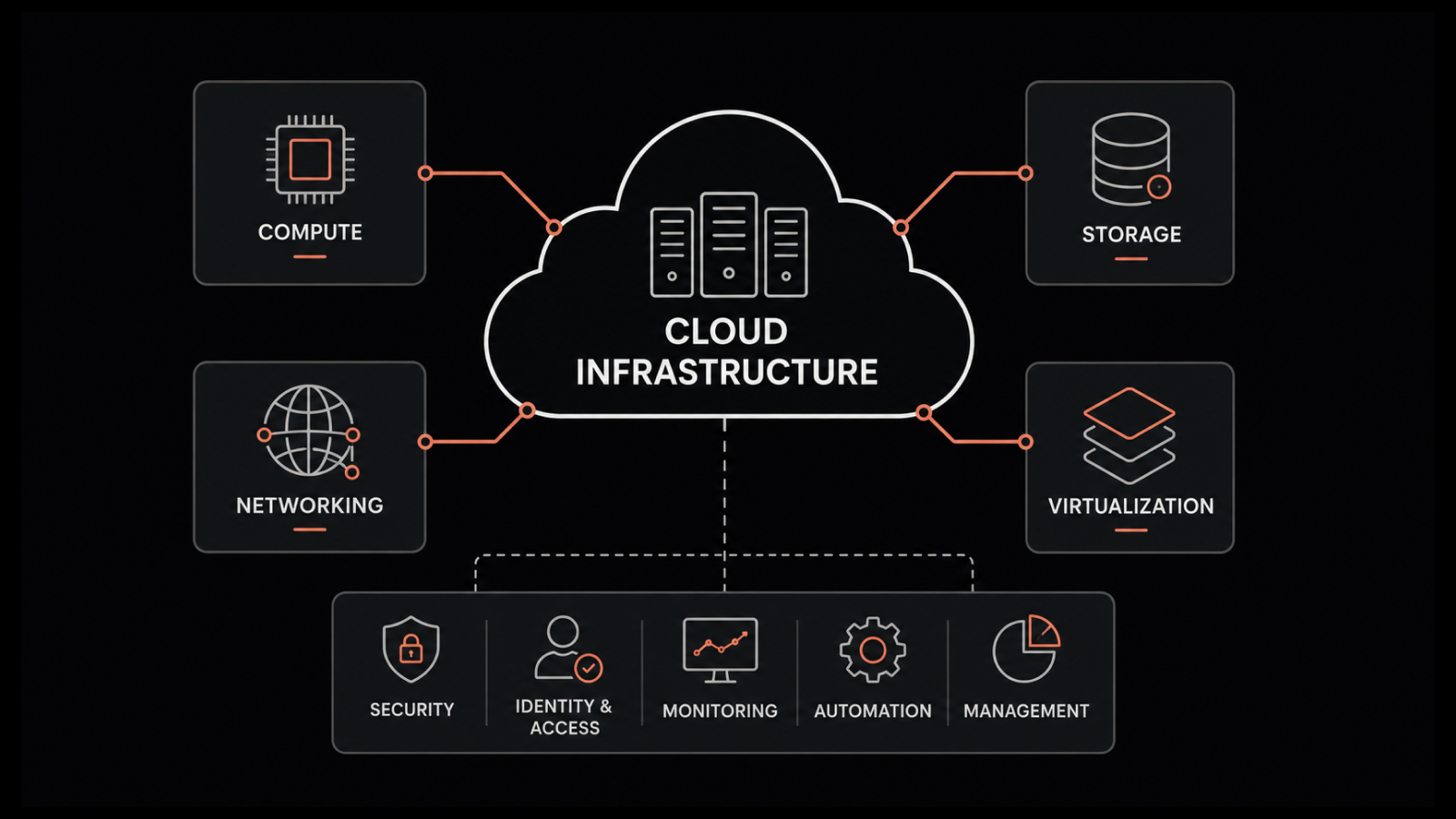

At their core, indicators are measurable data points used to evaluate performance and guide decisions. In IT operations, they help teams assess infrastructure health, service quality, team efficiency, and risk exposure. They’re the language of IT performance metrics.

Most practitioners are familiar with KPIs, but fewer recognize that not all KPIs serve the same purpose. There are actually three distinct types of performance indicators, each serving a different function:

Leading indicators: Forward-looking and predictive, they signal what is likely to happen

Lagging indicators: Backward-looking and confirmatory, they measure what already occurred

Coincident indicators: Present-state metrics, they reflect what is happening right now

Each type fills a different gap. Relying exclusively on any one type creates blind spots. Teams that only track lagging indicators are perpetually in reactive mode. Teams that only monitor leading indicators may act on signals that don’t materialize. Teams that ignore coincident indicators lose situational awareness during active incidents, which can lead to delayed responses and ineffective decision-making in critical situations. A complete IT performance management program uses all three.

What Are Lagging Indicators?

Lagging indicators are backward-looking metrics that confirm what has already happened. They measure the results of processes that have already been completed.

By definition, lagging indicators are high-certainty but low-influence. By the time the number appears on your dashboard, the outcome is already set. You can’t change last month’s MTTR or last quarter’s failure rate. What you can do is learn from them.

Strengths of Lagging Indicators

Clear, objective, and verifiable: the data is unambiguous

Reliable for benchmarking and trend analysis over time

Essential for stakeholder reporting, SLA reviews, and compliance documentation

Provide ground truth for validating whether interventions actually worked

Weaknesses of Lagging Indicators

They cannot warn you of what’s coming

Overreliance breeds complacency and a reactive IT culture

Often reviewed too infrequently (monthly or quarterly) to drive timely action

IT-Specific Examples of Lagging Indicators

Total incidents resolved in a period

SLA compliance rate (% of tickets resolved within SLA)

System uptime/availability percentage over the past month

Mean Time to Resolve (MTTR) for past incidents

Number of change management failures in the previous quarter

Customer satisfaction (CSAT) scores from closed tickets

Average first response time over the last 30 days

These ITSM metrics are valuable, but they’re the output of everything else your team did. They tell you how well you performed, not how well you’re about to perform.
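Because lagging indicators are computed from records that are already closed, they usually reduce to simple aggregations. The sketch below shows how two of the examples above, MTTR and SLA compliance rate, might be derived from a list of resolved tickets. The `ClosedTicket` record, its fields, and the helper names are hypothetical stand-ins for whatever your service desk exports; the code assumes Python 3.9+.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class ClosedTicket:
    # Hypothetical record shape; map it to your own service desk export.
    opened: datetime
    resolved: datetime
    sla: timedelta  # resolution target that applied to this ticket


def mttr_hours(tickets: list[ClosedTicket]) -> float:
    """Mean Time to Resolve, in hours, over tickets closed in the period."""
    if not tickets:
        raise ValueError("no closed tickets in period")
    total = sum((t.resolved - t.opened for t in tickets), timedelta())
    return total.total_seconds() / 3600 / len(tickets)


def sla_compliance_pct(tickets: list[ClosedTicket]) -> float:
    """Percentage of closed tickets resolved within their SLA target."""
    if not tickets:
        raise ValueError("no closed tickets in period")
    met = sum(1 for t in tickets if t.resolved - t.opened <= t.sla)
    return 100 * met / len(tickets)


# Two made-up tickets: one resolved in 2 hours, one in 6, both with a 4-hour SLA.
start = datetime(2024, 5, 1, 9, 0)
closed = [
    ClosedTicket(start, start + timedelta(hours=2), timedelta(hours=4)),
    ClosedTicket(start, start + timedelta(hours=6), timedelta(hours=4)),
]
print(mttr_hours(closed))          # 4.0
print(sla_compliance_pct(closed))  # 50.0
```

Note that both numbers are fixed the moment the period closes: nothing you do today changes them, which is exactly what makes them lagging.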

What Are Leading Indicators?

Leading indicators are forward-looking, predictive metrics that signal what is likely to happen. Rather than measuring outcomes, they measure the actions and conditions that tend to precede specific results.

Where lagging indicators are high-certainty, leading indicators are high-influence: you can act on them before an outcome materializes. That’s the entire point of proactive vs. reactive monitoring, which shifts your attention from what already happened to what’s about to happen.

Strengths of Leading Indicators

Enable proactive intervention before outcomes become problems

Support real-time course correction and early warning before outages or SLA breaches

Teams managed on leading indicators tend to be more proactive

Weaknesses of Leading Indicators

They can produce false signals that don’t predict the eventual outcome

You must validate their correlation with actual outcomes before relying on them

May require more sophisticated tooling for anomaly detection, trend analysis, and threshold alerting

The Key Distinction

Leading indicators measure the conditions and activities that precede an outcome. Lagging indicators measure the outcome itself. A rising CPU utilization trend is a leading indicator; last month’s count of server downtime incidents is the lagging one.

IT-Specific Examples of Leading Indicators

CPU and memory utilization trend (rising toward threshold = imminent performance issue)

Open incidents not updated in the last 48 hours (signal of backlog and SLA risk)

Number of unresolved change requests pending CAB review

Agent response time trend (slowing response = capacity or workload strain)

Ticket volume growth rate week-over-week (signals incoming staffing pressure)

Infrastructure anomaly detection alerts (early warning before failure)

Percentage of tickets reopened (indicates resolution quality issues before CSAT drops)

Disk usage growth rate / days-to-full forecast

These IT monitoring metrics are actionable in real time. When your anomaly detection fires on a storage array at 2pm Tuesday, you have a window to intervene before the failure occurs at 2am Wednesday.

Leading vs Lagging Indicators: Key Differences at a Glance

The core insight is simple: leading indicators are inputs; lagging indicators are outputs. You need both. The wrong question is “which is better?” The right question is how to use them together effectively.

Think of it as the windshield vs. rear-view mirror analogy: you need both to drive safely, but you spend most of your time looking forward. Lagging indicators are essential for learning and reporting. Leading indicators are essential for preventing.

| Dimension | Leading Indicators | Lagging Indicators |
|---|---|---|
| Timing | Future-facing / predictive | Past-facing / confirmatory |
| Purpose | Signal what might happen | Confirm what did happen |
| Actionability | High: you can intervene before the outcome | Low: the outcome is already set |
| Certainty | Lower (predictive, not guaranteed) | Higher (based on actual results) |
| Focus | Inputs, behaviors, early signals | Outputs, results, outcomes |
| Review Frequency | Continuous / real-time | Periodic (weekly, monthly, quarterly) |
| IT Example | Rising CPU utilization trend | Last month’s uptime percentage |

Coincident Indicators: The Third Type Most IT Teams Overlook

There’s a third category that most IT teams either ignore or lump into one of the other two: coincident indicators. These metrics measure the current state of performance, that is, what is happening right now.

In IT operations, coincident indicators are the live dashboards and real-time KPIs that show instantaneous system and service health.

IT Examples of Coincident Indicators

Live CPU and memory load across infrastructure

Active incident queue size at this moment

Current network throughput and latency

Live application response time

Coincident indicators sit between leading and lagging in the performance measurement ecosystem. They confirm that a predicted trend (leading) is materializing, and provide real-time context for whether a past outcome (lagging) is about to repeat. When your leading indicators fire an alert, coincident indicators tell you whether the situation is already developing. When your lagging indicators show a degraded past period, coincident indicators tell you whether the problem has been resolved or is still active.

Together, the three types (leading, coincident, and lagging) give your IT team a complete performance picture: where you’ve been, where you are, and where you’re heading.

How to Use Leading and Lagging Indicators Together in IT Operations

Understanding the difference between leading and lagging indicators is step one. Using them together systematically is where most IT teams get stuck. Here’s a practical five-step framework.

Start With Your Lagging Indicators

Identify the outcomes you care about most, such as uptime, MTTR, SLA compliance rate, and CSAT scores. They define what “good” looks like and give you the endpoints you’re trying to improve. Every leading indicator you choose should connect back to one of these outcomes.

Work Backward to Find Leading Indicators

For each lagging indicator, ask: what behaviors or signals, if monitored in advance, would predict whether this outcome is trending positively or negatively? If MTTR is your north star, what do incidents that resolve quickly have in common? What conditions preceded the slow-resolving ones? Those conditions are your leading indicators.

Validate the Correlation

Not every candidate leading indicator actually predicts the lagging outcome you care about. Track both over time and confirm that your leading indicators actually precede changes in your lagging ones. If a metric you thought was predictive doesn’t correlate with outcomes, discard it. Only validated correlations earn a permanent place in your KPI metrics framework.

Build Alert Thresholds on Leading Indicators

Manually reviewing leading metrics defeats the purpose. Set automated alerts that trigger when leading indicators cross defined thresholds. This is what transforms a leading indicator from a dashboard stat into an early warning system. It’s the foundation of proactive vs reactive monitoring in practice.

Periodically Review Lagging Indicators to Recalibrate

A monthly or quarterly review of lagging indicators validates whether your leading indicators are working and whether your interventions are having an impact. If MTTR is improving, your leading indicator monitoring is probably doing its job. If it isn’t, either your leading indicators aren’t actually predictive, or your response protocols need adjustment.

Real IT Example: MTTR as the North Star

If Mean Time to Resolve is your lagging north star, then “open incidents not updated in 48 hours” and “average first response time by team” are strong leading indicators to monitor and alert on. When incidents stagnate without updates, MTTR spikes usually follow. Alert on stagnation at 24 hours and you have time to intervene before an SLA breach occurs.
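The stagnation check described above amounts to comparing each open incident’s last-update time against a cutoff. The sketch below is a minimal illustration, assuming your service desk can export a ticket ID and a last-updated timestamp per open incident; the `OpenIncident` record and `stale_incidents` helper are hypothetical names, and the 24-hour default mirrors the example in the text.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class OpenIncident:
    # Hypothetical fields; map them to your service desk's export format.
    ticket_id: str
    last_updated: datetime


def stale_incidents(
    incidents: list[OpenIncident],
    now: datetime,
    max_age: timedelta = timedelta(hours=24),
) -> list[str]:
    """IDs of open incidents with no update for longer than `max_age`,
    oldest first, so the most neglected tickets surface at the top."""
    stale = [i for i in incidents if now - i.last_updated > max_age]
    stale.sort(key=lambda i: i.last_updated)
    return [i.ticket_id for i in stale]


now = datetime(2024, 5, 2, 12, 0)
queue = [
    OpenIncident("INC-101", now - timedelta(hours=2)),
    OpenIncident("INC-102", now - timedelta(hours=30)),
    OpenIncident("INC-103", now - timedelta(hours=26)),
]
print(stale_incidents(queue, now))  # ['INC-102', 'INC-103']
```

The length of that list, tracked over time, is itself the leading indicator; the list contents tell the team which tickets to act on first.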

Leading and Lagging Indicators in ITSM: Service Desk Examples

ITSM teams are particularly prone to over-indexing on lagging indicators: ticket resolution counts, SLA compliance percentages, CSAT scores. These are all outcomes. They tell you last month’s story. The shift to balanced measurement means pairing each lagging ITSM metric with a corresponding leading indicator that lets you act before the outcome is determined.

| Lagging Indicator (Outcome) | Paired Leading Indicator (Early Signal) |
|---|---|
| MTTR (resolution time) | Tickets not updated in 48 hours / first response time trend |
| SLA compliance % | SLA breach risk score / tickets approaching SLA deadline |
| CSAT score | Ticket reopening rate / escalation rate trend |
| Change failure rate | Unreviewed change requests / CAB backlog size |
| Incident volume trend | Alert noise rate / number of anomaly detections |
| Agent utilization rate | Queue depth per agent / response time by team member |
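As an example of one pairing from the table, “tickets approaching SLA deadline” can be computed as the share of each open ticket’s SLA window already consumed. This is a sketch under stated assumptions: the `OpenTicket` record and `approaching_sla` helper are hypothetical, the 75% warning level is an arbitrary illustrative choice, and the SLA clock is treated as running continuously (many real SLA policies pause outside business hours). It assumes Python 3.9+.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class OpenTicket:
    # Hypothetical record; real SLA clocks may pause outside business hours.
    ticket_id: str
    opened: datetime
    sla: timedelta  # resolution target for this ticket


def approaching_sla(
    tickets: list[OpenTicket], now: datetime, warn_fraction: float = 0.75
) -> list[str]:
    """IDs of open tickets that have used at least `warn_fraction` of their
    SLA window but have not breached it yet, most urgent first."""
    at_risk = []
    for t in tickets:
        used = (now - t.opened) / t.sla  # timedelta / timedelta -> float
        if warn_fraction <= used < 1.0:
            at_risk.append((used, t.ticket_id))
    at_risk.sort(reverse=True)  # highest share of SLA consumed first
    return [ticket_id for _, ticket_id in at_risk]


now = datetime(2024, 5, 2, 12, 0)
sla = timedelta(hours=8)
open_tickets = [
    OpenTicket("T1", now - timedelta(hours=7), sla),  # 87.5% used
    OpenTicket("T2", now - timedelta(hours=2), sla),  # 25% used
    OpenTicket("T3", now - timedelta(hours=9), sla),  # already breached
    OpenTicket("T4", now - timedelta(hours=6), sla),  # 75% used
]
print(approaching_sla(open_tickets, now))  # ['T1', 'T4']
```

Already-breached tickets are deliberately excluded: they belong to the lagging SLA compliance figure, while this list is the window in which intervention is still possible.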

Common Mistakes Teams Make With Leading and Lagging Indicators

Even teams that understand the theory often stumble in practice. Here are the five most common failure modes in balanced indicator programs.

Tracking Too Many Indicators

More metrics isn’t better. A KPI that can’t drive a defined action is noise. Before adding any metric to your dashboard, ask: if this indicator moves, what do we do? If you can’t answer that question, the metric isn’t ready to be tracked.

Choosing the Wrong Leading Indicators

Not all leading metrics actually correlate with your lagging outcomes. Intuition is a starting point, not a validation. Track both the candidate leading indicator and the lagging outcome over time and confirm the correlation with data before committing to the KPI. Discard indicators that don’t hold up.

Reviewing Leading Indicators Too Infrequently

Leading indicators only deliver value if they’re reviewed in time to act. A monthly review of a metric that moves daily defeats the purpose entirely. CPU utilization trends and ticket queue health need to be reviewed continuously or at minimum daily. If your review cadence is slower than your intervention window, the leading indicator is functionally useless.

Ignoring Lagging Indicators in Favor of “Being Proactive”

The opposite failure: abandoning lagging indicators entirely because they’re “backward-looking.” Lagging indicators validate whether your leading indicators are actually predictive. They’re your feedback loop. Without them, you have no way to know whether your interventions are working or whether your leading indicators are meaningful.

No Alert Thresholds on Leading Indicators

Manually checking leading metrics is error-prone and time-consuming. Automated alerting is what transforms a leading indicator from a dashboard stat into an early warning system. If your CPU utilization leading indicator depends on someone logging into a dashboard and noticing the trend, you’ve already given away much of the lead time it was supposed to buy you. Intelligent alerting closes the loop automatically.

How Motadata Helps You Track Both Leading and Lagging Indicators

Understanding the theory of leading and lagging indicators is only valuable if your tooling operationalizes it. Motadata’s platform is designed to support all three indicator types in a single integrated environment.

For Lagging Indicators

Motadata’s out-of-the-box reporting gives IT teams clear visibility into past outcomes at device, service, and team level. Availability reports, performance reports, and SLA compliance dashboards surface the lagging ITSM metrics and infrastructure outcomes that stakeholders and IT leaders need for reviews, benchmarking, and planning. Incident management reporting provides MTTR, resolution rate, and ticket trend analysis across defined periods.

For Leading Indicators

Motadata’s anomaly detection and predictive analysis capabilities surface early signals before outcomes materialize. The platform alerts teams to CPU utilization trends approaching thresholds, disk exhaustion timelines based on growth rate forecasting, and incident backlog risk from stagnating ticket queues. Real-time monitoring and threshold alerts ensure that leading indicator signals translate into timely notifications — not just dashboard stats someone has to remember to check.

For Coincident Indicators

Real-time monitoring dashboards across network, server, storage, application, and service layers give live, instantaneous visibility alongside trend data. Network activity monitoring, server performance monitoring, and application health dashboards provide the present-state picture that connects leading signal to lagging outcome.

Closing the Loop

The real advantage of Motadata’s integrated platform is that leading, coincident, and lagging indicators live in the same environment. There’s no context-switching between a monitoring tool, a service desk, and a reporting platform to correlate a CPU anomaly with an incident trend and an SLA compliance rate. The signals and the outcomes live together, which is what makes a balanced metrics program actually work in practice.

Conclusion

Lagging indicators confirm where you’ve been. Leading indicators predict where you’re going. Coincident indicators tell you where you are right now.

The shift from reactive to proactive IT operations starts with changing what you measure and when you measure it. A balanced metrics program, with clearly paired leading and lagging indicators, validated correlations, and automated alerting on the leading ones, is what separates IT teams that firefight from IT teams that prevent fires.

The teams consistently ahead of outages, ahead of SLA breaches, and ahead of capacity crunches aren’t working harder than their peers. They’re measuring smarter. They’ve built systems where the right signals reach the right people in time to act, not in time to explain.

FAQs

1. What are leading and lagging indicators?

Leading indicators are predictive metrics that signal potential future outcomes, while lagging indicators measure results that have already occurred. In IT operations, leading indicators help teams prevent incidents, while lagging indicators confirm past performance.


Author

Arpit Sharma

Senior Content Marketer

Arpit Sharma is a Senior Content Marketer at Motadata with over 8 years of experience in content writing. Specializing in telecom, fintech, AIOps, and ServiceOps, Arpit crafts insightful and engaging content that resonates with industry professionals. Beyond his professional expertise, he is an avid reader, enjoys running, and loves exploring new places.