5 IT Service Metrics Your Board Actually Cares About (With Formulas)

IT leaders walk into board meetings armed with uptime percentages, ticket resolution counts, and mean time to repair -- and watch executives' eyes glaze over. The numbers are accurate. They're also irrelevant to the people hearing them. Your board doesn't think in CPU utilization or ticket volumes. They think in revenue impact, risk exposure, and return on investment. If you want IT to be treated as a strategic function, you need to speak the board's language.

Business-aligned IT metrics are service performance indicators translated into financial impact, risk mitigation, and operational value. Unlike traditional technical metrics, they connect IT operational data to business outcomes -- answering the executive question "are we getting value for our IT investment?" with quantifiable evidence.

The Executive Disconnect

In many organizations, IT leaders proudly present reports showing 99.9% uptime, ticket resolution counts, or mean time to repair. These numbers often draw blank stares from executives.

The reason is straightforward: the board doesn't think in technical metrics. It thinks in financial impact, risk mitigation, and business growth. For the modern CIO or IT service manager, it's no longer enough to report operational activity. The conversation needs to shift from "what IT did" to "what IT delivered."

Uptime is now table stakes. True business resilience, cost efficiency, and user experience are what actually matter.

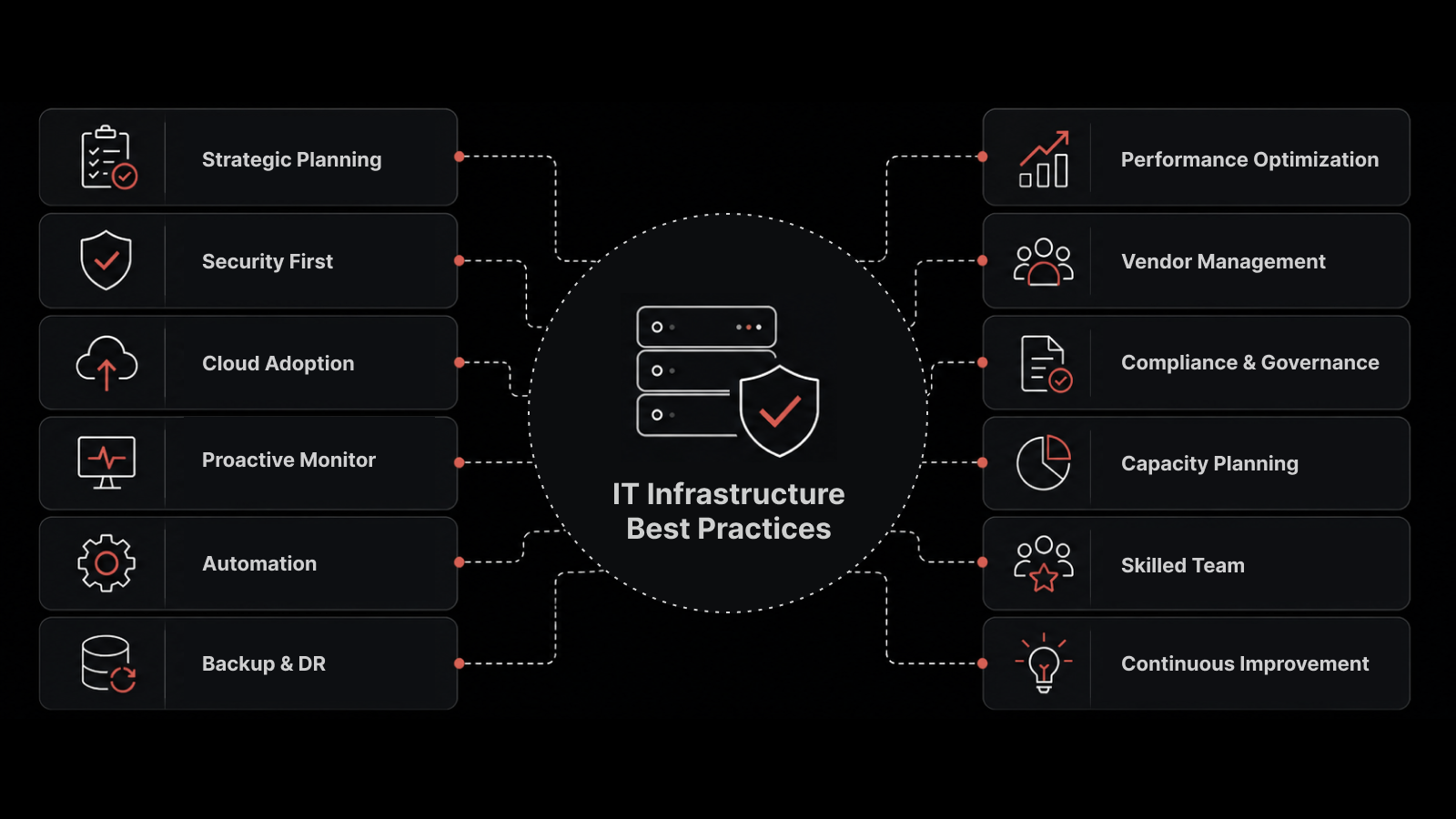

Why You Need the Right ITSM Foundation First

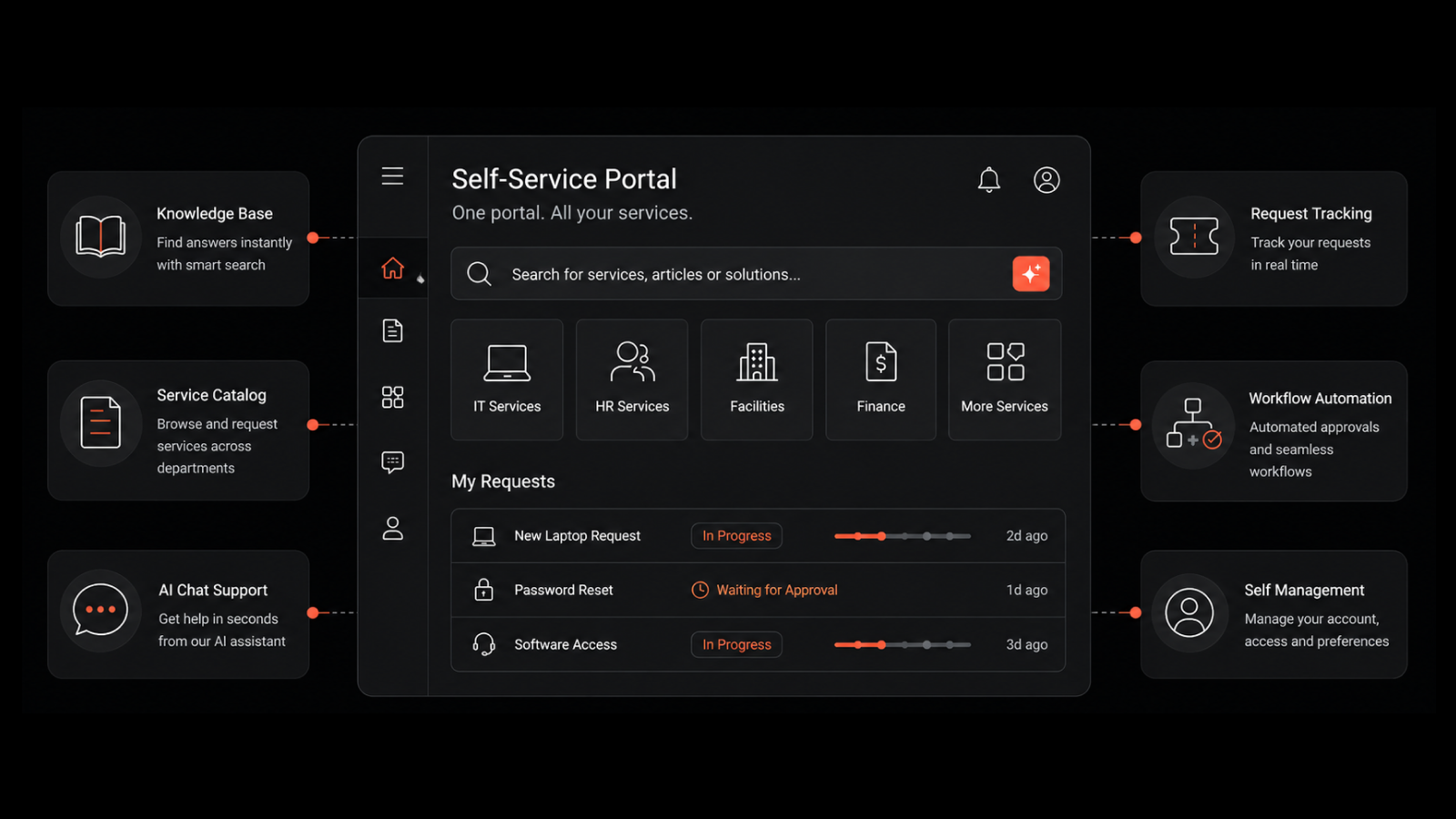

Before IT can report meaningful business metrics, it needs the right data infrastructure. That's where IT Service Management (ITSM) comes in.

A structured ITSM approach -- supported by a capable ITSM platform -- enables organizations to collect accurate, standardized data across incident management, change management, and knowledge management processes. Without clearly defined workflows and data integrity, metrics like cost per incident or change success rate are guesswork.

With an ITSM platform in place, every interaction, approval, and resolution can be tracked, measured, and correlated to business outcomes. Modern ITSM software does more than handle service desk operations -- it provides the analytical backbone for reporting that connects performance with strategy.

The 5 Metrics Your Board Needs to See

1. Cost Per Resolved Incident (CPI)

Formula: Total Service Desk Cost (salaries + tools + licenses + overhead) / Number of Incidents Resolved

Why the board cares: CPI is a direct indicator of operational efficiency. A declining CPI means your investments in automation, knowledge management, or self-service portals are paying off. A rising CPI signals inefficiencies or underutilized resources.

Executives connect with this metric because it translates process improvement into financial ROI. For example, an automated password reset workflow could reduce manual tickets by 20%, freeing staff hours worth thousands of dollars.

Industry benchmark: Average CPI ranges from $15-25 for self-service resolution to $40-75 for service desk-assisted resolution, depending on organization size and complexity.

How to present it:

"By automating low-level requests through self-service, we reduced the average cost per incident by 15%, saving $25,000 last quarter."
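To make the CPI formula concrete, here is a minimal Python sketch. The function name `cost_per_incident` and every dollar figure are hypothetical, chosen only for illustration; substitute your own service desk cost data.

```python
def cost_per_incident(salaries, tools, licenses, overhead, incidents_resolved):
    """Cost Per Resolved Incident = total service desk cost / incidents resolved."""
    if incidents_resolved <= 0:
        raise ValueError("incidents_resolved must be positive")
    total_cost = salaries + tools + licenses + overhead
    return total_cost / incidents_resolved


# Hypothetical quarterly figures (assumptions, not benchmarks).
cpi = cost_per_incident(
    salaries=180_000,
    tools=12_000,
    licenses=8_000,
    overhead=25_000,
    incidents_resolved=5_000,
)
print(f"CPI: ${cpi:.2f}")  # prints CPI: $45.00
```

Running the same calculation each quarter with consistent cost categories is what makes the trend comparable over time.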

2. Business Service Availability (BSA)

Formula: (Total Available Minutes - Unplanned Downtime Minutes) / Total Available Minutes x 100

Why the board cares: Unlike server uptime, which focuses on infrastructure, BSA measures the availability of business-critical services such as an "Order Processing System," "Claims Portal," or "Learning Management System." If your order processing system goes down, it isn't just an IT issue -- it's a revenue-impacting event.

BSA reframes technical metrics in business terms and aligns IT's operational focus with business continuity goals.

Industry benchmark: Mission-critical services typically target 99.5-99.9% BSA. Internal productivity tools often target 99.0-99.5%.

How to present it:

"The Student Enrollment Portal maintained 99.2% availability this quarter, exceeding the SLA target of 98.5%."
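The BSA formula can be sketched in a few lines of Python. The function name, the 90-day quarter, and the 648 minutes of unplanned downtime are assumptions made for this example, not figures from a real service.

```python
def business_service_availability(total_minutes, unplanned_downtime_minutes):
    """BSA (%) = (total available minutes - unplanned downtime) / total x 100."""
    if total_minutes <= 0:
        raise ValueError("total_minutes must be positive")
    if not 0 <= unplanned_downtime_minutes <= total_minutes:
        raise ValueError("downtime must be between 0 and total_minutes")
    return (total_minutes - unplanned_downtime_minutes) / total_minutes * 100


# Hypothetical: a service expected to be available 24x7 over a 90-day quarter.
total = 90 * 24 * 60  # 129,600 minutes
bsa = business_service_availability(total, unplanned_downtime_minutes=648)
print(f"BSA: {bsa:.2f}%")  # prints BSA: 99.50%
```

Note that only unplanned downtime is subtracted, as in the formula; if a service has defined business hours, total available minutes should cover those hours only.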

3. User Productivity Loss (UPL)

Formula: Average Employee Hourly Wage x Number of Affected Users x Average Resolution Time (hours)

Why the board cares: This metric converts downtime into monetary impact. For executives, seeing that an outage cost $80,000 in lost productivity is far more compelling than hearing "the system was down for two hours."

UPL emphasizes the financial consequences of slow resolutions, poor change planning, or recurring issues. It also validates investments in proactive maintenance, automation, and better resource allocation.

Industry benchmark: Average UPL per major incident ranges from $10,000-$100,000+ depending on organization size and the criticality of affected services.

How to present it:

"By improving First Call Resolution from 60% to 75%, we saved an estimated 320 employee hours, equivalent to $45,000 in regained productivity."
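As a rough illustration of the UPL formula, the sketch below reproduces an $80,000 loss for a two-hour outage. The $40 hourly wage and 1,000 affected users are assumptions picked to arrive at that figure; the function name is also hypothetical.

```python
def user_productivity_loss(avg_hourly_wage, affected_users, resolution_hours):
    """UPL = average hourly wage x affected users x resolution time in hours."""
    if min(avg_hourly_wage, affected_users, resolution_hours) < 0:
        raise ValueError("inputs must be non-negative")
    return avg_hourly_wage * affected_users * resolution_hours


# Hypothetical two-hour outage affecting 1,000 employees at $40/hour.
upl = user_productivity_loss(avg_hourly_wage=40.0, affected_users=1_000, resolution_hours=2.0)
print(f"Estimated productivity loss: ${upl:,.0f}")  # prints Estimated productivity loss: $80,000
```

The formula treats affected users as fully unproductive for the whole resolution time, so it is best read as an upper-bound estimate.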

4. Change Success Rate (CSR)

Formula: (Successful Changes / Total Scheduled Changes) x 100

Why the board cares: CSR reflects organizational stability and risk management maturity. A high CSR demonstrates a reliable change management process that supports continuous improvement without jeopardizing operations.

In industries like finance, government, or healthcare -- where compliance and uptime are non-negotiable -- this metric reassures the board that IT can deliver innovation safely.

Industry benchmark: Top-performing organizations achieve 95%+ CSR. Below 90% signals process gaps that increase operational risk.

How to present it:

"Out of 200 changes last quarter, 192 were successful -- a 96% Change Success Rate reflecting strong governance and reduced operational risk."
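The CSR calculation is simple enough to sketch directly. The function name is hypothetical, and the example uses the 192-of-200 figures from the sample statement above.

```python
def change_success_rate(successful_changes, total_changes):
    """CSR (%) = successful changes / total scheduled changes x 100."""
    if total_changes <= 0:
        raise ValueError("total_changes must be positive")
    if not 0 <= successful_changes <= total_changes:
        raise ValueError("successful_changes must be between 0 and total_changes")
    # Multiply before dividing so whole-number percentages stay exact.
    return successful_changes * 100 / total_changes


csr = change_success_rate(successful_changes=192, total_changes=200)
print(f"Change Success Rate: {csr:.1f}%")  # prints Change Success Rate: 96.0%
```

How "successful" is defined (for example, no rollback and no related incident) is a policy choice your change process needs to fix before the number means anything.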

5. User Satisfaction Score (CSAT/NPS)

Formula: CSAT = (Number of Positive Ratings / Total Survey Responses) x 100. NPS = % Promoters (scores of 9-10) - % Detractors (scores of 0-6) on a 0-10 "likelihood to recommend" scale.

Why the board cares: High satisfaction scores mean employees view IT as an enabler rather than an obstacle. Low scores lead to "shadow IT" adoption -- where employees bypass official tools -- creating security and compliance risks.

Strong CSAT correlates with higher productivity and employee retention, especially in digital workplaces that rely heavily on seamless IT support.

Industry benchmark: Service desk CSAT scores typically range from 75-90%. Scores below 70% indicate systemic issues requiring investigation.

How to present it:

"User satisfaction improved from 78% to 87% following the introduction of the new ticket prioritization system."
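Both satisfaction scores can be computed from raw survey data. The sketch below assumes CSAT responses have already been classified as positive or not (a common convention is 4 or 5 on a 5-point scale) and uses the standard NPS definition: percentage of promoters (9-10) minus percentage of detractors (0-6). Function names and sample data are hypothetical.

```python
def csat(positive_ratings, total_responses):
    """CSAT (%) = positive ratings / total survey responses x 100."""
    if total_responses <= 0:
        raise ValueError("total_responses must be positive")
    return positive_ratings * 100 / total_responses


def nps(scores):
    """NPS = % promoters (9-10) minus % detractors (0-6), on a 0-10 scale."""
    if not scores:
        raise ValueError("scores must not be empty")
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    return (promoters - detractors) * 100 / len(scores)


# Hypothetical survey results.
print(f"CSAT: {csat(87, 100):.0f}%")                 # prints CSAT: 87%
sample_scores = [10, 9, 9, 8, 7, 6, 3, 10, 9, 5]     # 5 promoters, 3 detractors
print(f"NPS: {nps(sample_scores):+.0f}")             # prints NPS: +20
```

NPS ranges from -100 to +100 and is not a percentage, so it should be charted separately from CSAT.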

Quick-Reference: Board Metrics at a Glance

| Metric | Formula | Benchmark | Board Story |
|---|---|---|---|
| CPI | Total Cost / Incidents Resolved | $15-75 per incident | Cost efficiency and automation ROI |
| BSA | (Available - Downtime) / Available x 100 | 99.0-99.9% | Business continuity and risk |
| UPL | Wage x Users x Resolution Hours | $10K-$100K+ per major incident | Financial impact of downtime |
| CSR | Successful / Total Changes x 100 | 95%+ target | Operational stability and governance |
| CSAT | Positive / Total Ratings x 100 | 75-90% | Employee experience and shadow IT risk |

From Data to Narrative: Presenting to the Board

Numbers alone won't win executive support -- it's the story behind them that matters.

Use trend visuals, not tables. Executives don't want spreadsheets. Visual dashboards showing trends, directional arrows, or financial equivalence communicate value immediately. A downward CPI trend paired with an "annual savings" estimate resonates far more than a data table.

Apply the "So What?" principle. Every data point should be followed by a statement of business relevance:

"Service downtime reduced by 20%." -- So what? -- "This saved approximately $60,000 in lost productivity and improved customer response time by 15%."

This approach translates ITSM reporting into executive storytelling -- connecting IT outcomes directly to strategic goals like cost optimization and risk reduction.
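One way to apply the "So What?" principle consistently is to generate the business statement from the same inputs as the metric. The sketch below is an illustration only: the function name, the 30-hour downtime baseline, 200 affected users, and $50 hourly wage are assumptions chosen so the result matches the $60,000 example.

```python
def so_what(reduction_pct, baseline_downtime_hours, affected_users, avg_hourly_wage):
    """Turn a downtime reduction into a dollar-denominated statement."""
    hours_saved = baseline_downtime_hours * reduction_pct / 100
    savings = hours_saved * affected_users * avg_hourly_wage
    return (
        f"Service downtime reduced by {reduction_pct}%, saving approximately "
        f"${savings:,.0f} in lost productivity."
    )


# Hypothetical inputs: 20% reduction on a 30-hour quarterly downtime baseline.
statement = so_what(reduction_pct=20, baseline_downtime_hours=30,
                    affected_users=200, avg_hourly_wage=50)
print(statement)
```

Keeping the assumptions explicit as parameters makes it easy to defend the dollar figure when a board member asks how it was derived.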

End with a call to action. Each quarterly report should close with actionable recommendations:

Expanding automation to reduce CPI further

Investing in predictive analytics to prevent incidents

Enhancing the self-service knowledge base to boost First Call Resolution

This shows IT isn't just measuring performance -- it's continuously improving based on insights.

Building Your Board Report: A Practical Framework

Monthly internal review: Track all five metrics internally. Identify trends, investigate anomalies, and prepare narratives for anything that moved significantly.

Quarterly board presentation: Select the 2-3 metrics most relevant to current business priorities. Lead with the financial narrative, show the trend, and close with a recommendation.

Annual strategic review: Present year-over-year trajectories for all five metrics. Connect improvements to specific ITSM investments and use the data to justify next year's budget requests.
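Period-over-period trajectories come down to a percent-change calculation plus a note on which direction counts as good. The sketch below is one possible approach; the function names, the `lower_is_better` flag, and the sample values are assumptions for illustration.

```python
def percent_change(previous, current):
    """Relative change from previous to current, in percent."""
    if previous == 0:
        raise ValueError("previous must be non-zero")
    return (current - previous) * 100 / previous


def trend_label(name, previous, current, lower_is_better=False):
    """Summarize a metric's movement and whether it is an improvement."""
    change = percent_change(previous, current)
    if change == 0:
        status = "flat"
    elif (change < 0) == lower_is_better:
        status = "improved"
    else:
        status = "worsened"
    return f"{name}: {change:+.1f}% ({status})"


# Hypothetical period-over-period values.
print(trend_label("CPI", 50.0, 45.0, lower_is_better=True))  # prints CPI: -10.0% (improved)
print(trend_label("CSAT", 78.0, 87.0))                       # prints CSAT: +11.5% (improved)
```

For metrics that are already percentages, such as CSAT, state clearly whether you are reporting relative change (+11.5%) or percentage points (+9 points) so the board does not misread the size of the movement.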

Why Motadata ServiceOps Powers Board-Ready Reporting

Motadata ServiceOps gives you the data foundation and analytics capabilities to calculate, track, and present all five board-level metrics from a single platform:

Built-in analytics dashboards with trend visualization and export-ready report formats

Automated SLA tracking that feeds directly into BSA calculations

AI-powered ticket management that improves CPI by routing, categorizing, and resolving tickets with less manual effort

Change management workflows with success rate tracking and audit trails

Integrated satisfaction surveys triggered automatically at ticket resolution

Custom reporting engine that lets you build board-specific views without SQL or developer involvement

FAQs

What IT metrics should I present to the board?

Focus on metrics that translate IT performance into business impact: Cost Per Resolved Incident (CPI) for operational efficiency, Business Service Availability (BSA) for risk and continuity, User Productivity Loss (UPL) for financial impact of downtime, Change Success Rate (CSR) for governance maturity, and User Satisfaction (CSAT) for employee experience. Avoid raw technical metrics like ticket counts or server uptime percentages.

How do I calculate cost per incident for my service desk?

Add up all service desk costs -- staff salaries, tool licenses, overhead, and training -- for a given period. Divide by the total number of incidents resolved in that period. Track this quarterly to identify trends. A declining CPI indicates your automation and self-service investments are delivering returns.

Why doesn't uptime impress the board?

Uptime is an infrastructure-level metric that doesn't communicate business impact. Saying "we achieved 99.9% uptime" doesn't tell the board which business services were affected during the 0.1% downtime, what it cost, or how it compared to competitors. Business Service Availability (BSA) tied to specific revenue-generating applications is far more meaningful.

How often should IT report metrics to the board?

Most organizations report quarterly, aligned with financial reporting cycles. Monthly internal reviews keep the data current and help identify trends. Annual strategic reviews connect metric improvements to IT investments and support budget planning. The frequency matters less than the consistency -- boards want to see trajectory over time.

What's the difference between CSAT and NPS for IT service measurement?

CSAT measures satisfaction with a specific interaction (typically collected after ticket resolution). NPS measures overall loyalty and likelihood to recommend the IT function. For board reporting, CSAT is more actionable because it connects directly to service desk performance. NPS provides a broader sentiment gauge useful for annual reviews.

Author

Arpit Sharma

Senior Content Marketer

Arpit Sharma is a Senior Content Marketer at Motadata with over 8 years of experience in content writing. Specializing in telecom, fintech, AIOps, and ServiceOps, Arpit crafts insightful and engaging content that resonates with industry professionals. Beyond his professional expertise, he is an avid reader, enjoys running, and loves exploring new places.