Log Management Policies: 6 Rules to Optimize Costs, Compliance, and Performance

Amartya Gupta

Log management policies are the formalized rules and guidelines that govern how an organization collects, stores, indexes, accesses, and retains log data across its IT infrastructure. These policies ensure that log files deliver operational and security value without creating unsustainable costs or compliance gaps.

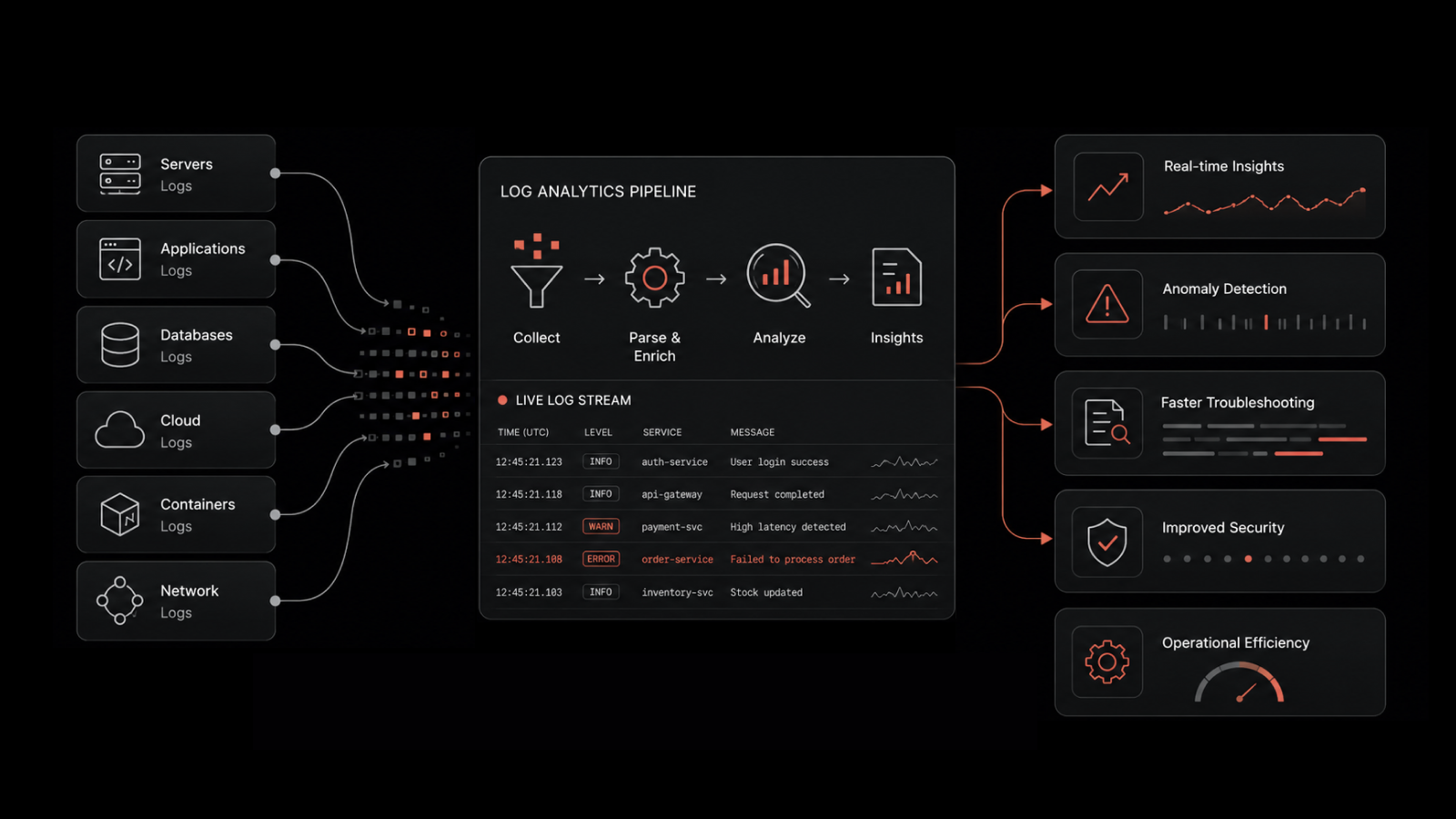

Your infrastructure generates millions of log entries every day. Servers, firewalls, applications, cloud platforms, and network devices all produce time-stamped records of what's happening across your environment. Without clear log management policies, you're either drowning in data you can't use or missing critical signals that could've prevented the next outage. The difference between teams that extract real value from logs and those that just accumulate storage costs comes down to having the right policies in place.

Why Log Management Policies Matter

Across IT infrastructure, log files are time-stamped records that capture critical information about events occurring within your network, operating systems, and software applications. Some logs are human-readable; others are designed for machine consumption. They're categorized into audit logs, transaction logs, error logs, event logs, message logs, and more.

Here's the problem: log files can shrink your mean time to resolution and make root cause analysis more efficient -- but only if you've got the right policies governing them. Without policies, costs scale unpredictably. Networks that process high volumes of log data can consume a disproportionate share of your budget, and throughput inconsistency makes those costs hard to forecast.

Even after incurring the costs, you might end up with log files that carry value only in very specific, narrow use cases. That's why a rule-based approach to log management isn't a luxury -- it's a necessity.

What Makes Log Management Policies Effective

Thoughtful log management policies address two critical dimensions simultaneously:

Security and vulnerability management. Policies help filter and flag vulnerabilities across connected devices, cloud platforms, and distributed systems before they escalate. When you've got clear rules about what gets logged, where it's stored, and who can access it, your security posture improves dramatically.

Network performance and reliability. Policies support consistent uptime by making sure teams have the data they need to troubleshoot quickly.

To build effective log management policies, your team needs to answer four foundational questions:

Storage optimization -- What storage approach provides the right balance between resource consumption and value?

Retention rules -- How long should log files live across different storage tiers with varying authorization, security, and accessibility requirements?

Conditional collection -- Are there log categories only needed in specific situations that shouldn't be collected by default?

Sampling strategies -- Which log file categories are homogeneous enough that a representative sample delivers the same value as the full data set?
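The sampling question can be made concrete with a small sketch. The one below assumes each log entry has a category and a stable identifier; the category names and sample rates are hypothetical placeholders, not recommendations. It hashes the identifier so that the same entry always gets the same keep-or-drop decision, which keeps sampling reproducible across forwarders.

```python
import hashlib

# Hypothetical per-category sample rates; 1.0 keeps every entry.
SAMPLE_RATES = {"audit": 1.0, "error": 1.0, "debug": 0.1}


def keep_entry(category: str, entry_id: str, rates=SAMPLE_RATES) -> bool:
    """Return True if this entry falls inside the category's sample."""
    rate = rates.get(category, 1.0)  # unknown categories are kept in full
    digest = hashlib.sha256(entry_id.encode("utf-8")).digest()
    # Map the first 8 bytes of the hash onto the interval [0, 1).
    bucket = int.from_bytes(digest[:8], "big") / 2**64
    return bucket < rate
```

Categories such as audit logs stay at a rate of 1.0 here because a sample is rarely an acceptable substitute for them; only homogeneous, high-volume categories are candidates for lower rates.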

Policy 1: Centralize Log Collection With Log Forwarders

A log forwarder should be the central unit of your log management system. Centralized log management gives your team greater control, easier accessibility, and the perspective to optimize log files according to your allocated budget.

Here's how it works in practice:

Enterprise applications produce log files on local systems

The forwarder ships those log files to a central location for analytics

Compression happens at the forwarder level, freeing up space for your core applications to run undisturbed

The forwarder can resend files, acting as a built-in backup mechanism

This architecture also reduces your dependency on system uptime for log delivery. If a system goes down and restarts, the forwarder automatically sends the queued files. You don't lose critical log data just because a server had a bad day.
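The queue-and-resend behavior described above can be sketched as follows. This is a minimal illustration, not how any particular forwarder is implemented: the `Forwarder` class and the `send` callable are hypothetical, and the queue lives in memory, whereas a real forwarder would typically persist it to disk so it survives a restart.

```python
import gzip
from collections import deque


class Forwarder:
    """Queues compressed log batches and resends them until delivery succeeds."""

    def __init__(self, send):
        # `send` is a hypothetical callable: bytes -> bool (True on success).
        self.send = send
        self.queue = deque()

    def enqueue(self, lines):
        """Compress a batch of log lines at the forwarder and queue it."""
        payload = "\n".join(lines).encode("utf-8")
        self.queue.append(gzip.compress(payload))

    def flush(self):
        """Send queued batches in order; stop at the first failure.

        Returns the number of batches delivered. Undelivered batches
        stay queued, so a later flush() retries them.
        """
        delivered = 0
        while self.queue:
            if not self.send(self.queue[0]):
                break
            self.queue.popleft()
            delivered += 1
        return delivered
```

A batch is removed from the queue only after `send` reports success, which is what lets the forwarder double as a backup while the central system is unreachable.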

Policy 2: Define Access Controls and Notification Rules

Most logging setups involve two kinds of processes -- one responsible for sending entries and another responsible for pulling entries out. Your policies need to address both sides.

On the sending side, establish clear guidance on logging levels. What level of detail is appropriate for each use case? Sending too much information creates noisy data and wastes resources. Sending too little leaves dangerous gaps.

On the receiving side, system security and IT operations take priority. Sensitive data in log files can be exploited if authorization isn't tightly controlled.

Your notification policy should clearly answer who gets notified about what, when, and how. Without this clarity, either critical alerts get buried in noise or the wrong people receive sensitive operational data.
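One way to make "who, what, when, and how" explicit is to write the notification policy down as data. The sketch below is illustrative only: the `Rule` fields, severity levels, team names, and channels are all assumptions chosen for the example.

```python
from dataclasses import dataclass

# Assumed severity scale, lowest to highest.
LEVELS = ["debug", "info", "warning", "error", "critical"]


@dataclass(frozen=True)
class Rule:
    category: str      # what: the kind of log event
    min_level: str     # when: lowest severity that triggers a notification
    recipients: tuple  # who: the teams or people notified
    channel: str       # how: the delivery channel


# Hypothetical rules for illustration.
RULES = [
    Rule("security", "warning", ("security-team",), "pager"),
    Rule("application", "error", ("on-call-dev",), "chat"),
]


def route(category, level, rules=RULES):
    """Return (recipient, channel) pairs for an event, or [] if no rule matches."""
    severity = LEVELS.index(level)
    return [
        (recipient, rule.channel)
        for rule in rules
        if rule.category == category and severity >= LEVELS.index(rule.min_level)
        for recipient in rule.recipients
    ]
```

Because an event that matches no rule notifies nobody, sensitive operational data is not broadcast by default; recipients have to be listed explicitly.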

Policy 3: Ensure Compliance in Log Data Collection

Your log data collection practices must satisfy the guidelines established under regulatory frameworks including:

PCI DSS -- Payment Card Industry Data Security Standard

FISMA -- Federal Information Security Modernization Act

SOX -- Sarbanes-Oxley Act

HIPAA -- Health Insurance Portability and Accountability Act

The same applies to any other regulations relevant to your specific industry and business requirements. Compliance isn't just about collecting the right data -- it's about being able to demonstrate compliance through reports that are easy to generate on demand.

Teams that treat compliance as an afterthought end up scrambling during audits. Teams with compliance baked into their log management policies produce required reports as a routine byproduct of their daily operations.
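As a rough sketch of a report that falls out of routine operations, the function below compares each log source's configured retention against a required minimum per framework. The source names and data layout are hypothetical. The 365-day figure reflects the commonly cited PCI DSS requirement to keep audit log history for at least 12 months; treat it as an assumption to confirm against the current standard, and supply values for other frameworks from your own compliance team.

```python
def retention_gaps(sources, required_days):
    """Return (source, framework, configured_days, required_days) for each shortfall."""
    gaps = []
    for name, cfg in sorted(sources.items()):
        for framework in cfg["frameworks"]:
            required = required_days[framework]
            if cfg["retention_days"] < required:
                gaps.append((name, framework, cfg["retention_days"], required))
    return gaps


# Assumed minimum; verify against the current text of the standard.
REQUIRED_DAYS = {"PCI DSS": 365}

# Hypothetical log sources and their configured retention.
SOURCES = {
    "payment-gateway": {"retention_days": 90, "frameworks": ["PCI DSS"]},
    "card-vault": {"retention_days": 400, "frameworks": ["PCI DSS"]},
    "marketing-site": {"retention_days": 30, "frameworks": []},
}

for gap in retention_gaps(SOURCES, REQUIRED_DAYS):
    print("retention below minimum:", gap)
```

Running a check like this on a schedule turns the audit question "can you show retention is sufficient?" into a standing report rather than a one-off scramble.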

Policy 4: Invest in Scalable Log Data Storage

During ingestion, it's often inefficient to filter log data too early. At the point of collection, there are rarely clear indicators showing which log files will prove valuable for future IT challenges.

Your platform should handle this by allowing you to store terabytes of log data daily without bottlenecks. Once you've got the data centralized, you can create indexing rules that prioritize log files aligned with your most important use cases.

This approach flips the traditional model: instead of guessing what to keep at ingestion time, you store everything and apply intelligence after the fact.
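A minimal sketch of "store everything, then apply intelligence" might look like the following. The rule predicates, priority numbers, and entry fields (`category`, `level`) are assumptions for illustration. The key property is that nothing is filtered out: indexing rules only decide the order in which stored entries are indexed.

```python
# Hypothetical indexing rules: (predicate, priority). Lower priority is indexed first.
INDEX_RULES = [
    (lambda e: e["category"] == "audit", 0),
    (lambda e: e["level"] in ("error", "critical"), 1),
]
DEFAULT_PRIORITY = 9


def index_priority(entry, rules=INDEX_RULES):
    """Return the priority of the first matching rule, or the default."""
    for matches, priority in rules:
        if matches(entry):
            return priority
    return DEFAULT_PRIORITY


def indexing_order(stored_entries, rules=INDEX_RULES):
    """Return every stored entry, ordered so high-priority entries are indexed first."""
    return sorted(stored_entries, key=lambda e: index_priority(e, rules))
```

Because the rules run against data that is already stored, changing them later only changes what gets indexed first; no log entry has been lost to an earlier guess.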

Policy 5: Build Adaptive Indexing Policies

If your system is dynamic and exposed to varied risks, your log files shouldn't be indexed statically. Static indexing creates friction exactly when you need speed most.

When your system throws an error or you're facing a critical challenge, you shouldn't be fighting through server-side filtering policies to find what you need. Your platform should let you override indexing policies on the fly, saving significant time when an urgent issue surfaces.

Adaptive indexing means your log management system works the way your team actually operates -- adjusting priorities based on the situation rather than forcing teams to work within rigid, predefined rules.
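To illustrate the idea of overriding indexing priorities on the fly, here is a small sketch. It is not a description of any product's API: the `IndexPolicy` class, its `incident` method, and the category names are hypothetical. Overrides apply only for the duration of an incident and are rolled back automatically afterwards.

```python
from contextlib import contextmanager


class IndexPolicy:
    """Base indexing priorities plus temporary, incident-scoped overrides."""

    def __init__(self, base, default=9):
        self.base = dict(base)   # category -> priority (lower is indexed sooner)
        self.default = default
        self.overrides = {}

    def priority(self, category):
        """Overrides win over base priorities; unknown categories get the default."""
        if category in self.overrides:
            return self.overrides[category]
        return self.base.get(category, self.default)

    @contextmanager
    def incident(self, **overrides):
        """Temporarily re-prioritize categories, then restore the prior state."""
        previous = dict(self.overrides)
        self.overrides.update(overrides)
        try:
            yield self
        finally:
            self.overrides = previous


policy = IndexPolicy({"audit": 0, "debug": 8})
with policy.incident(debug=0):
    # During the incident, debug logs are indexed as early as audit logs.
    urgent = policy.priority("debug")
```

Scoping the override to a `with` block means the normal priorities return even if troubleshooting code raises an exception partway through.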

Policy 6: Use a Unified Platform for Collection, Indexing, and Analytics

The most effective log management policies are only as good as the platform that enforces them. A fragmented toolchain creates blind spots, delays, and compliance gaps.

Motadata's AI-native log management platform collects, aggregates, and intelligently indexes all log data regardless of format -- structured or unstructured, human-readable or machine-generated. Here's what that means in practice:

Correlation analysis powered by rich data analytics capabilities helps you efficiently identify operational and security risks across your entire environment

Network Flow Analytics monitors all traffic between connected devices, with support for NetFlow v5 and v9, sFlow, IPFIX, and other protocols

Traffic intelligence reveals bottlenecks based on trends in traffic patterns and critical interactions between users or applications

Built-in compliance with PCI DSS, FISMA, HIPAA, and other regulatory standards

With comprehensive data collection, intelligent indexing, and a fluid search interface, your IT teams can systematically optimize log file practices while maintaining the highest standards of compliance and operational efficiency.

How Motadata Helps You Build Stronger Log Management

Motadata's AI-native Log Management Platform brings collection, aggregation, indexing, and analytics together on a single platform. Instead of stitching together point solutions and hoping the policies hold, you get a unified environment where every log management policy -- from retention to access control to compliance -- operates within one cohesive system.

Whether you're managing thousands of log sources across hybrid infrastructure or need to demonstrate compliance during your next audit, Motadata gives you the visibility and control to do it without the complexity.

Start your free trial and see how centralized, AI-native log management transforms your operational efficiency.

FAQs

What are log management policies?

Log management policies are formalized rules that govern how an organization collects, stores, indexes, accesses, and retains log data. They ensure log files deliver security and operational value while controlling costs and meeting compliance requirements.

Why is centralized log management important?

Centralized log management gives IT teams a single point of control for all log data across the infrastructure. It simplifies access, enables cross-source correlation analysis, supports compliance reporting, and provides built-in resilience through log forwarders that queue and resend data after system failures.

What compliance standards require log management?

Major compliance frameworks including PCI DSS, HIPAA, SOX, and FISMA all require specific log collection, retention, and reporting practices. Organizations in regulated industries need log management policies that satisfy these standards and produce audit-ready reports on demand.

How do adaptive indexing policies work?

Adaptive indexing lets teams override static log indexing rules based on the current situation. During normal operations, standard indexing priorities apply. When emergencies arise, teams can instantly re-prioritize which logs get indexed first, saving critical time during troubleshooting.

What's the difference between log data and metric data?

Log data consists of time-stamped text records describing events in detail, while metric data consists of numeric measurements tracking system performance over time. Both are essential for comprehensive IT monitoring -- logs provide context for debugging, while metrics provide trends for performance analysis.